Most Amazon sellers focus their optimization energy in the wrong places. They obsess over keyword density in bullet points, fiddle with PPC bid adjustments, and chase backend search terms — while the single most powerful lever for clicks and conversions sits right at the top of every listing, doing damage no one is measuring.

Their images.

Here’s the uncomfortable reality: a shopper who lands on your listing will form a visual impression in roughly 50 milliseconds. Before they’ve read your title, before they’ve scrolled to your bullet points, before they’ve checked your reviews — they’ve already decided whether this product looks worth their time. That snap judgment is made entirely by your images.

And yet most Amazon listings are built with images that were assembled quickly, tested never, and optimized for desktop in a world where more than 70% of Amazon traffic is now mobile. The result is a silent, invisible tax on every impression your listing receives — lower click-through rates, higher bounce rates, more abandoned carts, and ultimately, margin that quietly bleeds out without a clear culprit on your dashboard.

This isn’t another post about making sure your main image has a white background. You know that already. This is a detailed, section-by-section breakdown of what truly high-performing Amazon image stacks look like in 2026 — covering the science of sequencing, the specific mistakes that cost sellers real money, what Amazon’s Rufus AI is now extracting from your images, and how to build a testing loop that turns your image gallery into a compounding asset.

Let’s start at the beginning — with why images aren’t just a creative decision, but an economic one.

The Visual First Impression: Why Images Decide the Sale Before Buyers Read a Word

Amazon selling is, at its core, a conversion rate business. Traffic matters — but what you do with that traffic is what separates profitable listings from expensive ones. And the evidence is increasingly clear that images are the single biggest driver of whether a visitor converts or walks.

Jungle Scout research ranks product images as the second most critical purchase factor for Amazon buyers, sitting just behind price. That’s ahead of reviews, shipping speed, and brand reputation. Factor in that images also shape price perception (a professional image makes a product look premium, which helps justify a higher price), and the argument for treating image optimization as a top-tier business activity becomes overwhelming.

The 50-Millisecond Window

Research on visual processing consistently shows that human brains form first impressions of visual content in approximately 50 milliseconds. For Amazon shoppers, that 50-millisecond window happens in the search results grid, where your hero image thumbnail competes against every other product on the page.

In that instant, a shopper’s brain is running a rapid-fire filter: Does this look professional? Does this look like what I’m searching for? Does this look worth clicking? If the answer to any of those questions is “not sure,” they scroll past. There’s no second chance in the search results — your hero image gets one shot.

Professional, high-quality images have been shown to produce conversion rates 2-3x higher than amateur or low-quality shots, according to Statista data. That’s not a marginal gain. A listing converting at 6% instead of 3% on the same traffic and at the same price doubles revenue without a dollar more in ad spend.
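The arithmetic is easy to check under a simple revenue model: sessions × conversion rate × average order value, with price and order size held constant. The sketch below is minimal. The 10,000 monthly sessions and $25 average order value are hypothetical figures chosen for illustration.

```python
def monthly_revenue(sessions: int, conversion_rate: float, avg_order_value: float) -> float:
    """Simplified revenue model: every session converts at the same rate and order value."""
    return sessions * conversion_rate * avg_order_value


# Hypothetical listing: same traffic and same price, only the conversion rate changes.
sessions = 10_000
avg_order_value = 25.0

at_3_percent = monthly_revenue(sessions, 0.03, avg_order_value)
at_6_percent = monthly_revenue(sessions, 0.06, avg_order_value)

print(f"3% conversion: ${at_3_percent:,.0f}")
print(f"6% conversion: ${at_6_percent:,.0f}")
print(f"Revenue multiple: {at_6_percent / at_3_percent:.1f}x")
```

The model deliberately ignores second-order effects. It only shows that, with traffic and price fixed, revenue moves one-for-one with conversion rate.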

Images as Your Silent Sales Team

Images are estimated to drive 65-70% of purchase decisions, and that influence isn’t just about aesthetics. Images answer the questions a buyer would otherwise have to dig through text to find: What does this actually look like? How big is it? How do I use it? What’s in the box? Will it fit my life?

Every image slot in your gallery is an opportunity to answer one of those questions before doubt can take root and send the shopper elsewhere. The sellers who treat their image stack like a sales team — each image with a specific job, answering a specific objection, advancing a specific conversation — are the ones whose conversion rates hold up even in crowded categories.

The sellers who upload seven vaguely similar product photos and call it done are running a listing that’s working against them every single day.

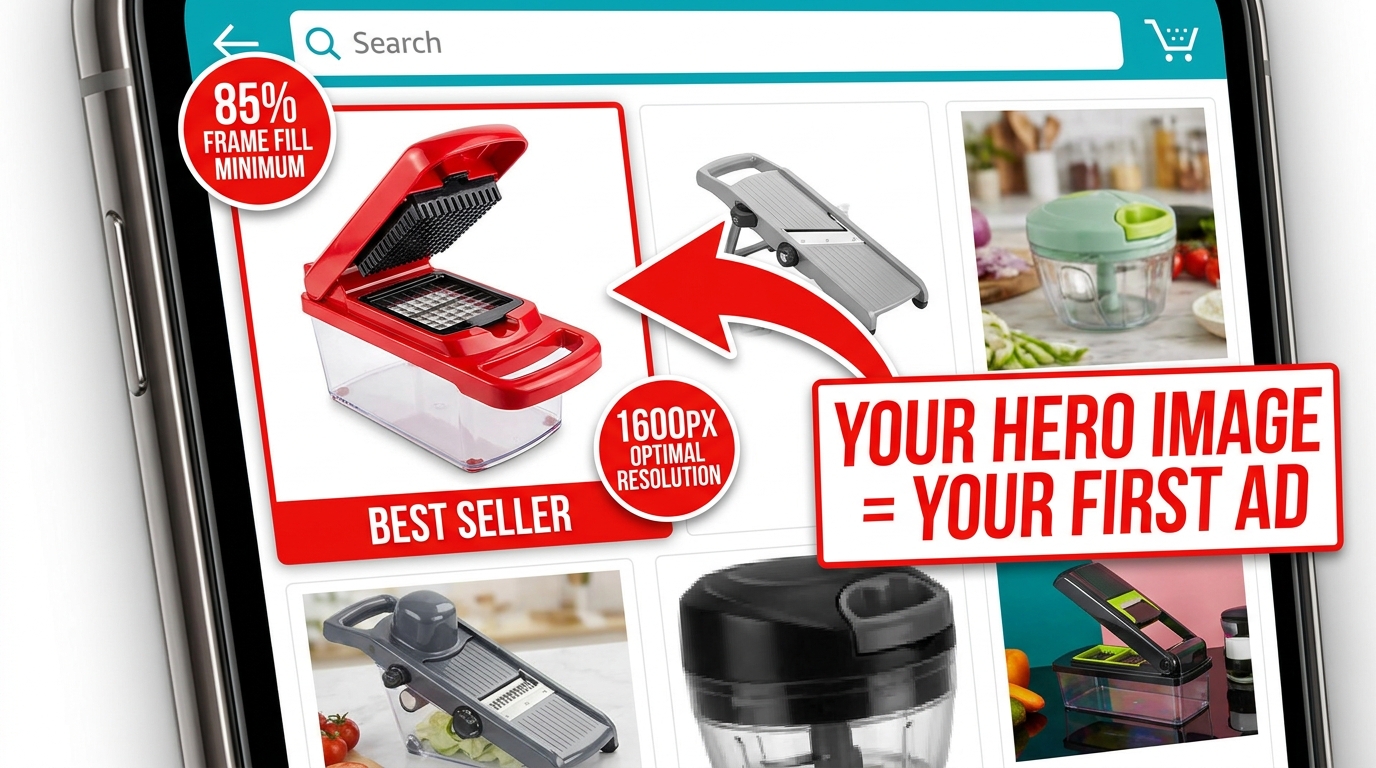

The Hero Image: Engineering a Thumbnail That Commands the Click

Your hero image — the main product shot shown in search results — is functionally an advertisement. It’s the creative that runs every time someone searches a keyword you rank for, and its job is a single, specific one: get the click. Not sell the product. Not explain the features. Get. The. Click.

Everything else in your listing exists downstream of that click. The bullet points, the A+ content, the reviews, the video — none of it matters if the hero image doesn’t earn the visit. That’s why the hero deserves a level of attention and investment that most sellers reserve for their PPC campaigns.

Amazon’s Non-Negotiable Technical Requirements

Amazon’s requirements for the main image are strict, and violating them risks listing suppression. The rules are worth internalizing, not just bookmarking:

- Pure white background: RGB 255, 255, 255 — not off-white, not light gray, not cream. Pure white.

- Product fills at least 85% of the frame. This is a minimum. 90-95% is better.

- No text, logos, graphics, watermarks, or borders overlaid on the product or background.

- Minimum 1,000px on the longest side for the site; 1,600px to enable zoom (which improves conversion); up to 10,000px maximum.

- Product must be shown outside its packaging in most categories. No props, and no accessories that aren’t included with the product.

- No multiple views of the same product in the main image.

Amazon’s optimal specification is 1,600px or larger specifically because zoom functionality (the ability to hover over and enlarge the image) has been shown to measurably improve sales. Don’t settle for the minimum. Aim for 2,000px or higher for maximum quality at all display sizes.
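If you process images in bulk, you can pre-check the dimension and background rules before upload. The sketch below is minimal and assumes you’ve already loaded the image into rows of (R, G, B) tuples with an imaging library of your choice. The thresholds are the ones stated in this article, so confirm them against Amazon’s current image guidelines. Checking only the outermost ring of pixels is a heuristic. It catches off-white backgrounds, but it will also flag a product that legitimately touches the edge of the frame.

```python
Pixel = tuple[int, int, int]

# Thresholds as stated in this article; verify against Amazon's current guidelines.
MIN_LONGEST_SIDE = 1_000
ZOOM_LONGEST_SIDE = 1_600
RECOMMENDED_LONGEST_SIDE = 2_000
MAX_LONGEST_SIDE = 10_000
PURE_WHITE: Pixel = (255, 255, 255)


def check_dimensions(width: int, height: int) -> list[str]:
    """Return human-readable problems with a main image's pixel dimensions."""
    longest = max(width, height)
    issues: list[str] = []
    if longest < MIN_LONGEST_SIDE:
        issues.append(f"longest side {longest}px is below the {MIN_LONGEST_SIDE}px minimum")
    elif longest < ZOOM_LONGEST_SIDE:
        issues.append(f"longest side {longest}px is below the {ZOOM_LONGEST_SIDE}px zoom threshold")
    elif longest < RECOMMENDED_LONGEST_SIDE:
        issues.append(f"longest side {longest}px is under the recommended {RECOMMENDED_LONGEST_SIDE}px")
    if longest > MAX_LONGEST_SIDE:
        issues.append(f"longest side {longest}px exceeds the {MAX_LONGEST_SIDE}px maximum")
    return issues


def count_non_white_border(pixels: list[list[Pixel]]) -> int:
    """Count pixels on the outermost ring of the image that are not exactly RGB 255, 255, 255."""
    height = len(pixels)
    width = len(pixels[0]) if height else 0
    count = 0
    for y, row in enumerate(pixels):
        for x, pixel in enumerate(row):
            on_border = y in (0, height - 1) or x in (0, width - 1)
            if on_border and pixel != PURE_WHITE:
                count += 1
    return count


print(check_dimensions(1200, 900))
print(check_dimensions(2400, 2400))
```

Compression can nudge pure white to 254 or 253 in places. Treat a non-zero border count as a prompt to inspect your export settings, not as proof of a violation.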

What “Commanding the Click” Actually Looks Like

Within Amazon’s rules, there’s still significant room to differentiate. The best hero images share a few characteristics that go beyond technical compliance:

Angle matters more than you think. The front-facing, flat product shot is the default — and for most categories, it’s what works. But the best angle is the one that makes your product’s most compelling feature immediately visible in a 200×200 pixel thumbnail. For a travel mug, that might be the lip-seal lid. For a knife, the blade profile. Test angles if you’re unsure.

Contrast against the white background. White backgrounds make all products equal at a technical level — but visually, a product with natural contrast (dark colors, distinct edges, strong silhouette) pops far better than a light-colored product that blends into the white. If your product is white or light-colored, consider how professional lighting and shadow can create separation.

Perceived quality through photography. The difference between a $200 professional product shoot and a phone photo isn’t just resolution — it’s lighting, shadows, reflections, and depth that signal to a buyer’s brain whether this is a premium product or a cheap knockoff. Professional photography for your hero image isn’t a nice-to-have. In most categories with competitive imagery, it’s table stakes.

Dead Pixel Real Estate: The Hidden CTR Killer Most Sellers Ignore

“Dead pixel real estate” is the term used among image optimization practitioners for the empty, unused space around a product in a hero image. It’s the blank white space that surrounds a product when the shot is taken from too far away, or when the original photography dimensions weren’t optimized for Amazon’s thumbnail format.

In full desktop view, dead pixel space looks acceptable. But in Amazon’s search result grid — particularly on mobile — thumbnails are small and the competition for visual attention is fierce. Every pixel of empty white space is a pixel your product isn’t using. At thumbnail scale, a product that fills 65% of the frame looks noticeably smaller and less substantial than a competitor’s product filling 90%.
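Frame fill is straightforward to measure. The sketch below finds the bounding box of every pixel that isn’t near-white and reports fill two ways. “Fills 85% of the frame” can mean the product’s longest dimension relative to the image, or the share of image area it covers. Which reading Amazon applies is an assumption you should confirm. The sketch also assumes a clean white background: cast shadows count as product, and a very light product may be partly missed at the default threshold.

```python
from typing import Optional

Pixel = tuple[int, int, int]
Box = tuple[int, int, int, int]  # (left, top, right, bottom); right and bottom are exclusive


def product_bbox(pixels: list[list[Pixel]], threshold: int = 250) -> Optional[Box]:
    """Bounding box of all pixels with any channel darker than `threshold`."""
    found = False
    left = top = right = bottom = 0
    for y, row in enumerate(pixels):
        for x, (r, g, b) in enumerate(row):
            if min(r, g, b) < threshold:
                if not found:
                    left, top, right, bottom = x, y, x + 1, y + 1
                    found = True
                else:
                    left, top = min(left, x), min(top, y)
                    right, bottom = max(right, x + 1), max(bottom, y + 1)
    return (left, top, right, bottom) if found else None


def frame_fill(pixels: list[list[Pixel]], threshold: int = 250) -> tuple[float, float]:
    """Return (linear_fill, area_fill) for the product's bounding box."""
    height, width = len(pixels), len(pixels[0])
    box = product_bbox(pixels, threshold)
    if box is None:
        return 0.0, 0.0
    left, top, right, bottom = box
    box_w, box_h = right - left, bottom - top
    linear_fill = max(box_w / width, box_h / height)  # longest relative dimension
    area_fill = (box_w * box_h) / (width * height)    # share of total image area
    return linear_fill, area_fill
```

Run this on your own hero and on a competitor’s. A linear fill near 0.65 next to one near 0.90 is exactly the gap described above.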

Why This Matters at the Search Results Level

On a typical Amazon search results page, your product thumbnail is displayed alongside 15-48 other thumbnails. The cognitive load of choosing what to click is real, and shoppers make those micro-decisions based almost entirely on visual prominence and perceived quality.

A product with significant dead pixel space around it reads as smaller, cheaper, and less important than its neighbors. It doesn’t matter if the product is actually premium — the thumbnail is the first impression, and perception is reality in the 50-millisecond window of a search results scroll.

Optimizing for zero dead pixel space means cropping your image so the product fills 90-95% of the frame. If your original photography didn’t achieve this, it can often be corrected in post-production without a reshoot. The fix is frequently cheap. The cost of not fixing it compounds daily.
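The post-production fix amounts to choosing a new square crop around the product. The sketch below is minimal and assumes you already know the product’s bounding box in source pixels and want a square main image. The 0.92 target is simply a value inside the 90-95% range above, and “fill” here means the product’s longest side relative to the crop. If the returned box extends past the original image (negative coordinates, or values beyond its size), pad that area with pure white rather than stretching the product.

```python
import math

Box = tuple[int, int, int, int]  # (left, top, right, bottom); right and bottom are exclusive


def tight_square_crop(bbox: Box, target_fill: float = 0.92) -> Box:
    """Square crop centered on the product so its longest side fills ~`target_fill` of the crop."""
    left, top, right, bottom = bbox
    longest = max(right - left, bottom - top)
    side = math.ceil(longest / target_fill)  # round up so fill never exceeds the target
    center_x = (left + right) / 2
    center_y = (top + bottom) / 2
    crop_left = math.floor(center_x - side / 2)
    crop_top = math.floor(center_y - side / 2)
    return crop_left, crop_top, crop_left + side, crop_top + side


# Hypothetical 2,000px shot where the product spans 1,200 x 1,400px.
crop = tight_square_crop((400, 300, 1600, 1700))
side = crop[2] - crop[0]
print(crop, side)
if side < 1_600:
    print("Crop is smaller than the 1,600px zoom threshold; upscaling won't add real detail.")
```

In this example, reaching about 92% fill leaves a 1,522px crop, which shows the trade-off. A crop fixes dead pixel space only if the original was shot at a high enough resolution to survive it.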

The “Dead Pixel” Opportunity in Secondary Images

The dead pixel concept also applies inversely to secondary images — where blank space can be deliberately used as “real estate” for value propositions. In infographic slots, sellers have used the white space around a product to place specification callouts, measurement indicators, and benefit bullets that technically don’t “overlay” the product itself.

This approach threads the needle between Amazon’s rules (which prohibit text overlays on the main image) and the desire to communicate quickly in the secondary slots. It’s one of the more nuanced tactics available and, when executed cleanly, can make secondary images significantly more informative at a glance.

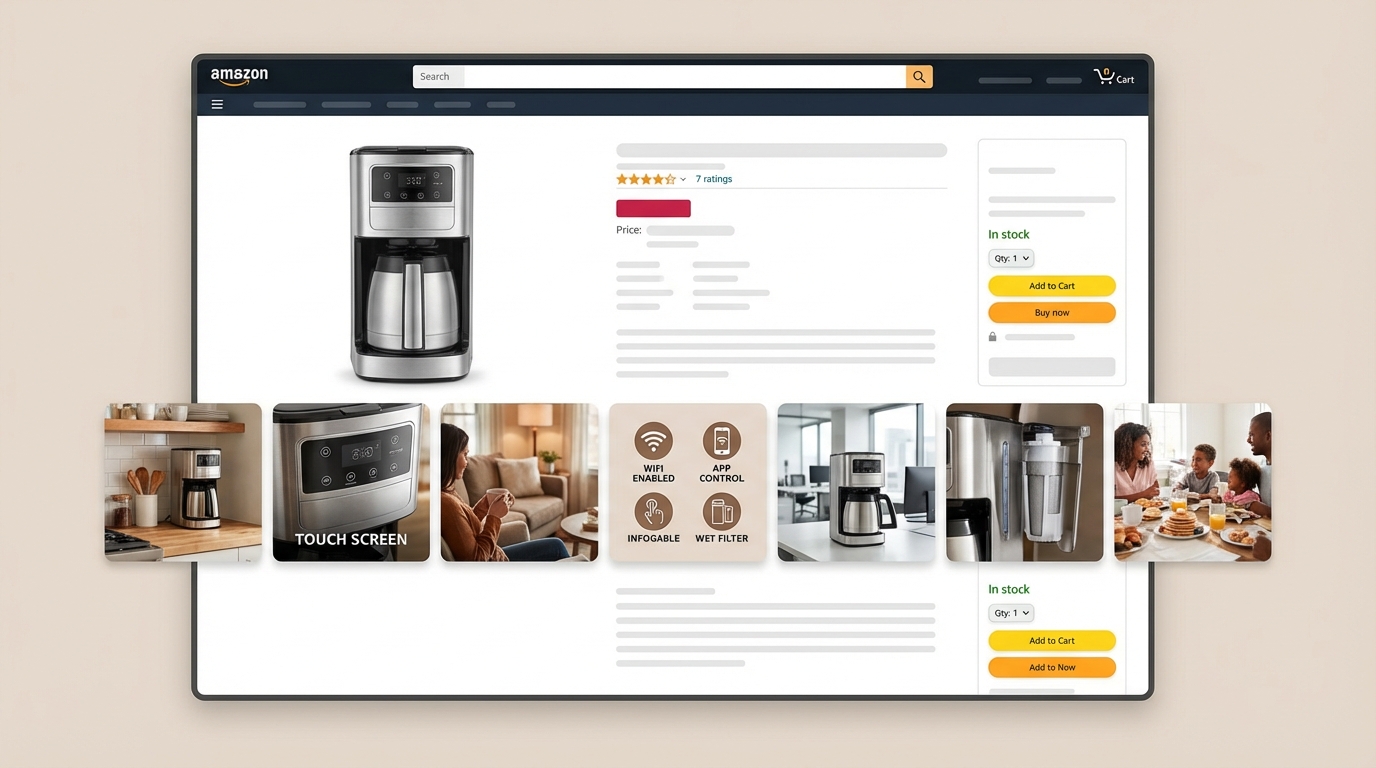

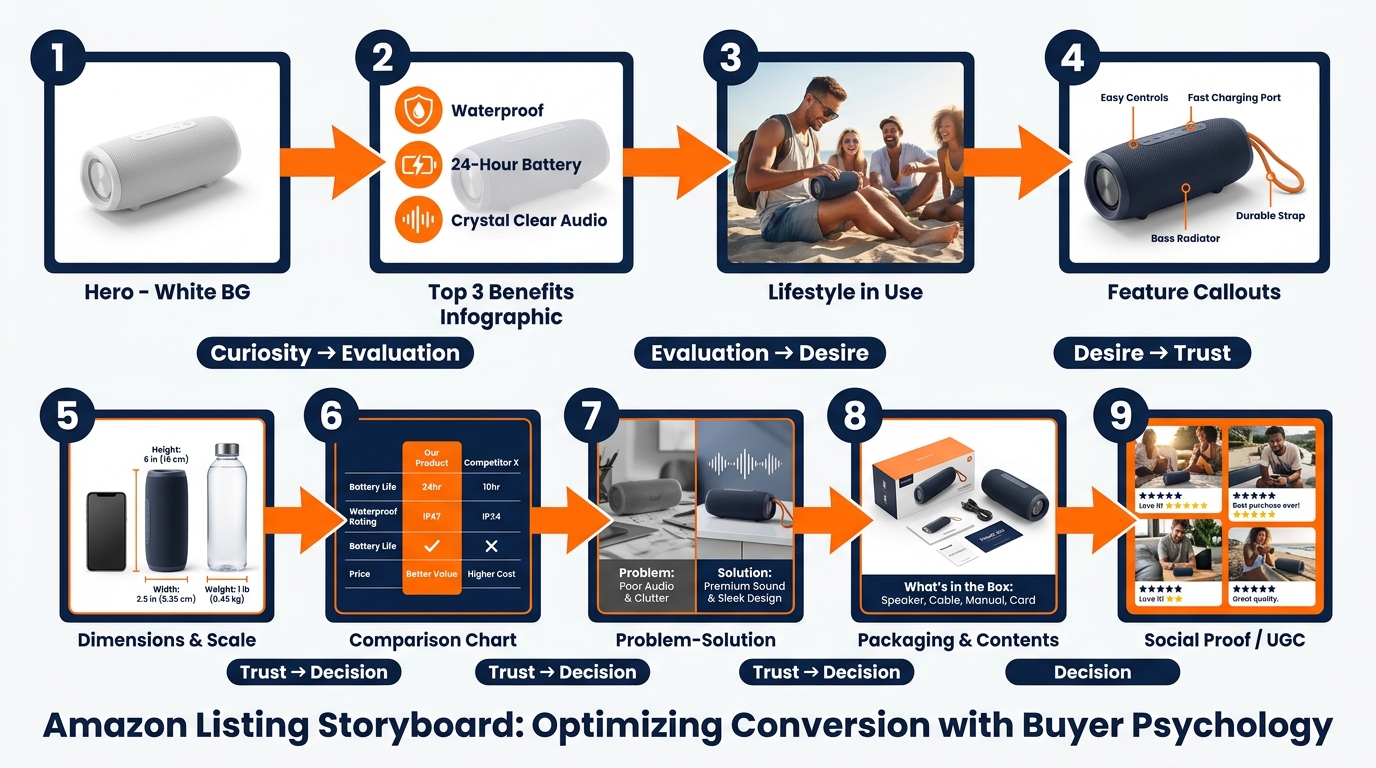

The 9-Slot Image Sequence and the Psychology Behind Each Position

Amazon allows up to nine image slots plus a video slot for most categories. The vast majority of sellers use fewer than seven, and the ones who do use all nine frequently upload images in whatever order they happen to be ready — not in a deliberate sequence designed to move a buyer through a purchase decision.

That’s a structural mistake. The image gallery is a sales funnel. Each slot corresponds to a different stage of the buyer’s cognitive journey, and a well-sequenced gallery moves shoppers from initial curiosity through evaluation, desire, objection-handling, and ultimately to the “Add to Cart” button. A randomly ordered gallery just gives shoppers more chances to find a reason to leave.

The Nine-Slot Framework

Here’s how high-converting sellers approach the 9-slot sequence:

Slot 1 — The Hero: Pure white background, maximum frame fill, professional photography. Drives the click from search results. No information beyond the product’s visual quality and form factor.

Slot 2 — The Top-3 Benefits Infographic: The buyer has clicked and is evaluating whether to stay. This slot answers: “Why this product?” Three bold, benefit-driven callouts with clean iconography. Not features — benefits. Not “1200W motor” — “Crushes ice in under 10 seconds.” This is where you address the emotional purchase driver immediately.

Slot 3 — Lifestyle in Context: Show the product being used by a person in a real environment. This slot triggers aspiration and belonging. The buyer thinks: “That could be me.” It also communicates scale, ease of use, and the product’s fit into the buyer’s life — all without a word of text.

Slot 4 — Feature Callouts with Close-Ups: Now the buyer is warming up and wants details. This slot goes deep on the product’s most important physical features — materials, components, specific design choices — with annotated close-up photography and short explanatory labels.

Slot 5 — Dimensions and Scale Reference: One of the most common causes of returns is size mismatch. Buyers imagined the product was bigger or smaller than it actually is. A dedicated dimensions image — showing the product next to a recognizable scale reference (a hand, a common household item) alongside actual measurements — prevents this objection before it becomes a return or a negative review.

Slot 6 — Comparison or Differentiation: If you have a legitimate advantage over the category standard — better capacity, more durable materials, more certifications, longer warranty — this is where to present it visually. A clean comparison chart (your product vs. “typical” competitor, not naming brands) addresses the “why not just buy the cheaper one?” objection directly.

Slot 7 — Problem-Solution Narrative: Address the specific pain point your target buyer arrived with. “Tired of blenders that can’t handle frozen fruit?” This slot validates the buyer’s frustration and positions your product as the resolution. It’s the slot most sellers skip and the one that often moves the most hesitant buyers.

Slot 8 — What’s in the Box: Show the full product contents laid out cleanly. This eliminates uncertainty (one of the primary drivers of abandoned carts) and creates positive surprise when the unboxing matches the image. It also signals quality packaging and attention to detail.

Slot 9 — Social Proof or Trust Signal: Aggregate review ratings, certification badges, sustainability credentials, or user-generated content integrated into a clean graphic. This is the final reassurance before the purchase — the “others trust this, you can too” signal that closes hesitant buyers.
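If you audit many listings, you can encode the framework as an ordered list and check galleries mechanically. The sketch below is minimal. The role labels are hypothetical names for the nine slots above, and you would tag each image in a gallery by hand. An out-of-order flag isn’t automatically a mistake, since reordering is itself worth testing. It is just a prompt to confirm the deviation is deliberate.

```python
FRAMEWORK = [
    "hero",                  # Slot 1
    "benefits_infographic",  # Slot 2
    "lifestyle",             # Slot 3
    "feature_closeups",      # Slot 4
    "dimensions",            # Slot 5
    "comparison",            # Slot 6
    "problem_solution",      # Slot 7
    "whats_in_the_box",      # Slot 8
    "social_proof",          # Slot 9
]


def audit_gallery(slots: list[str]) -> dict[str, object]:
    """Compare a gallery's tagged slot roles against the nine-slot framework."""
    missing = [role for role in FRAMEWORK if role not in slots]
    unknown = [role for role in slots if role not in FRAMEWORK]
    first_out_of_order = None
    last_rank = -1
    for position, role in enumerate(slots, start=1):
        if role not in FRAMEWORK:
            continue
        rank = FRAMEWORK.index(role)
        if rank < last_rank:  # this role belongs earlier than the one before it
            first_out_of_order = position
            break
        last_rank = rank
    return {"missing": missing, "unknown": unknown, "first_out_of_order_slot": first_out_of_order}


print(audit_gallery(["hero", "lifestyle", "benefits_infographic", "dimensions", "whats_in_the_box"]))
```

Here slot 3 is flagged because the benefits infographic arrives after the lifestyle shot. Four of the nine roles are also missing entirely.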

Why Sequence Matters as Much as Content

The same nine images in a different order perform differently. An image that works brilliantly in slot 3 can underperform in slot 7 because it’s answering a question the buyer hasn’t asked yet. The sequence mirrors the natural progression of a buyer’s internal monologue, and disrupting that progression creates friction. Friction kills conversions.

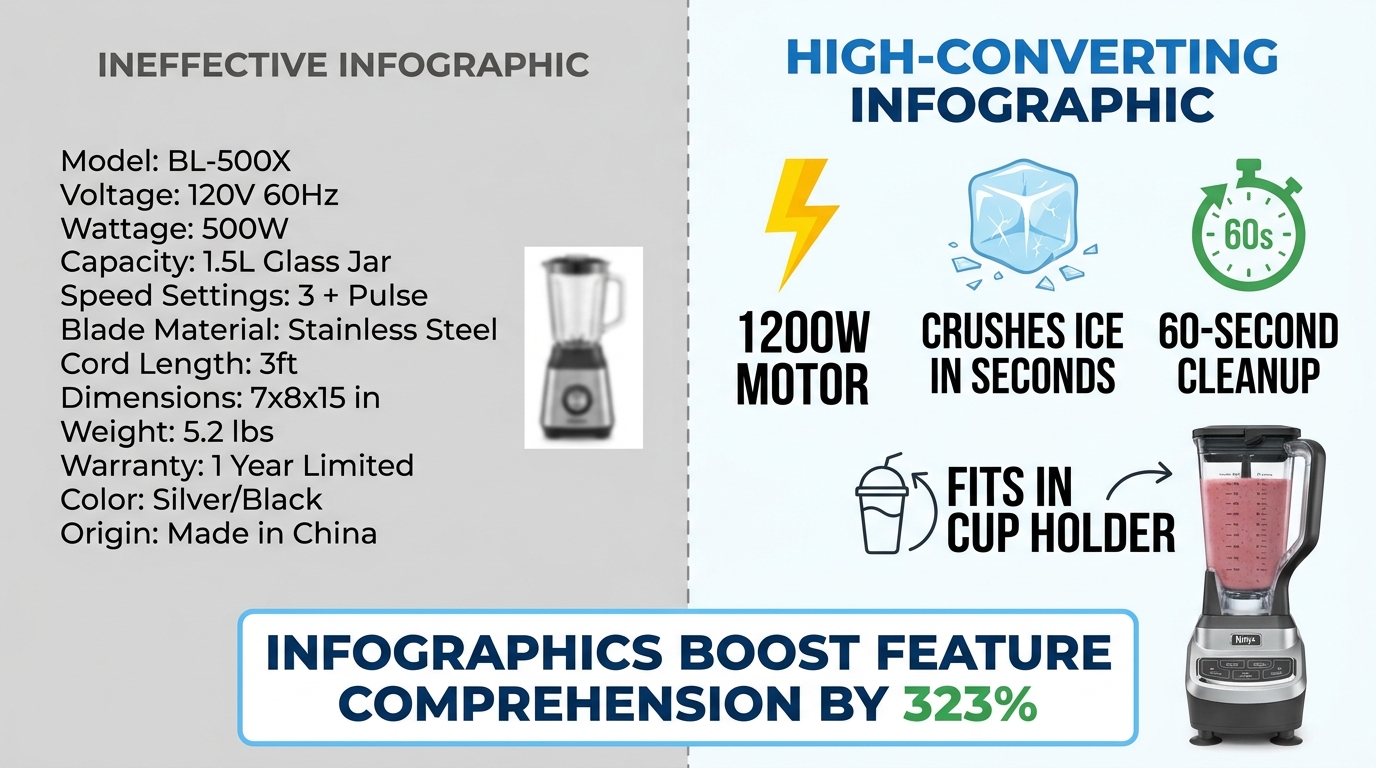

Infographics That Actually Convert: Designing for the 3-Second Mobile Scan

Infographic images (the secondary images that overlay text, icons, and callouts on or around product shots) have become a standard part of Amazon listing optimization. But “having infographics” and “having infographics that convert” are two very different things. Listings in most competitive categories are now full of infographic images. Many of them don’t work.

The data on infographics is compelling: adding infographic and scale images with text to a listing can improve customer understanding of product features by up to 323%, according to aggregated Amazon listing data. That’s a dramatic number. But that uplift requires the infographic to actually be readable and scannable — conditions that a surprising number of infographics fail to meet.

The Mobile Rendering Problem

Here is the core design mistake sellers make with infographics: they design them on a large desktop monitor at 1:1 scale, where text looks clear and readable, then upload them without checking how the image renders at mobile thumbnail size.

On mobile, where over 70% of Amazon shopping occurs, an image designed at 2000×2000 pixels is rendered in a space roughly 350-450 pixels wide, about one-fifth of its original width. Text that looked fine at desktop scale becomes illegible at that reduction. A six-point callout font becomes microscopic. A ten-bullet feature list becomes a gray blur.
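Because the reduction is a straight ratio, you can estimate how any callout will render before uploading. The sketch below is minimal. It assumes text height is measured in pixels on the source file (design-tool point sizes equal pixels only at 72 ppi) and uses a 400px display width from the 350-450px range above. The 12px target on the last line is an illustrative legibility goal, not an Amazon figure.

```python
def rendered_size(source_px: float, source_width: int, display_width: int) -> float:
    """Height in display pixels of text that is `source_px` tall in the source image."""
    return source_px * display_width / source_width


def min_source_size(target_display_px: float, source_width: int, display_width: int) -> float:
    """Source text height needed to render at `target_display_px` after downscaling."""
    return target_display_px * source_width / display_width


SOURCE_WIDTH = 2_000   # designed at 2000 x 2000
DISPLAY_WIDTH = 400    # assumed mobile carousel width

for size in (6, 40):
    print(f"{size}px on the source -> {rendered_size(size, SOURCE_WIDTH, DISPLAY_WIDTH):.1f}px on mobile")
print(f"Needed for 12px on mobile: {min_source_size(12, SOURCE_WIDTH, DISPLAY_WIDTH):.0f}px on the source")
```

At one-fifth scale, a six-pixel callout shrinks to about 1.2px and 40px text renders at 8px. That is why checking legibility at the final display width matters more than how the file looks at full size.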

The result is an infographic that registers as “busy” or “complicated” rather than informative. Buyers swipe past it. The 323% comprehension uplift assumes the buyer can actually read the infographic — and on mobile, they often can’t.

The 3-Second Scan Principle

High-converting infographics are designed around a single constraint: a mobile shopper should be able to understand the core message within three seconds. Not absorb every detail — just get the point.

That constraint leads to several specific design rules:

- Maximum three focal points per image. One image, one message. If you’re trying to communicate five things in one infographic, you’re communicating zero of them clearly.

- Font size of at least 30-40pt on the original image file so text remains readable once the image is scaled down for mobile. Test by shrinking your image to 400px wide before uploading and checking legibility.

- High-contrast text on a contrasting background. White text on a white product doesn’t work. Dark text on a light background or light text on a dark element — with clear visual separation — is the standard that survives mobile compression.

- Icons over text where possible. A lightning bolt icon communicates “fast” instantly. Three words of text do not. Iconographic communication is faster and more mobile-resilient than text-heavy designs.

- Benefit language, not feature language. “Fits in any standard car cup holder” beats “6.5cm diameter base.” The first is a benefit the buyer can instantly relate to their life; the second requires mental translation.
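“High contrast” can be quantified. The article doesn’t name a metric, so the sketch below swaps in the contrast ratio from the W3C’s Web Content Accessibility Guidelines (WCAG 2.x). It compares the relative luminance of two sRGB colors on a scale from 1:1 to 21:1. WCAG’s 4.5:1 minimum for body text was written for web pages rather than product images, so treat it as a rough reference point, not an Amazon requirement.

```python
Color = tuple[int, int, int]


def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light for the relative-luminance formula.

    WCAG 2.x text cites a 0.03928 cutoff and the sRGB standard uses 0.04045;
    no 8-bit value falls between them, so both give identical results here.
    """
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: Color) -> float:
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: Color, bg: Color) -> float:
    """WCAG contrast ratio, from 1.0 (identical colors) to 21.0 (black on white)."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


WHITE = (255, 255, 255)
print(f"Black text on white:      {contrast_ratio((0, 0, 0), WHITE):.1f}:1")
print(f"Mid-gray text on white:   {contrast_ratio((119, 119, 119), WHITE):.2f}:1")
print(f"White text on light gray: {contrast_ratio(WHITE, (200, 200, 200)):.2f}:1")
```

Sample colors straight from your design file. A callout that scores well against a flat background can still fail where it overlaps a busy part of the product shot.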

The “One Infographic Per Pain Point” Rule

Each infographic in your image stack should address exactly one buyer question or objection. Not a collection of facts about the product — one clear answer to one specific concern. “Will it last?” “How hard is it to clean?” “Is it the right size for my needs?” When an infographic tries to answer three questions at once, it answers none of them convincingly.

This single-focus discipline also makes A/B testing infographics much more actionable. When you test two versions of an infographic and one performs better, you know exactly what variable moved the needle — because each image only had one variable to begin with.

Lifestyle Photography: The Emotional Trigger That Turns Browsers Into Buyers

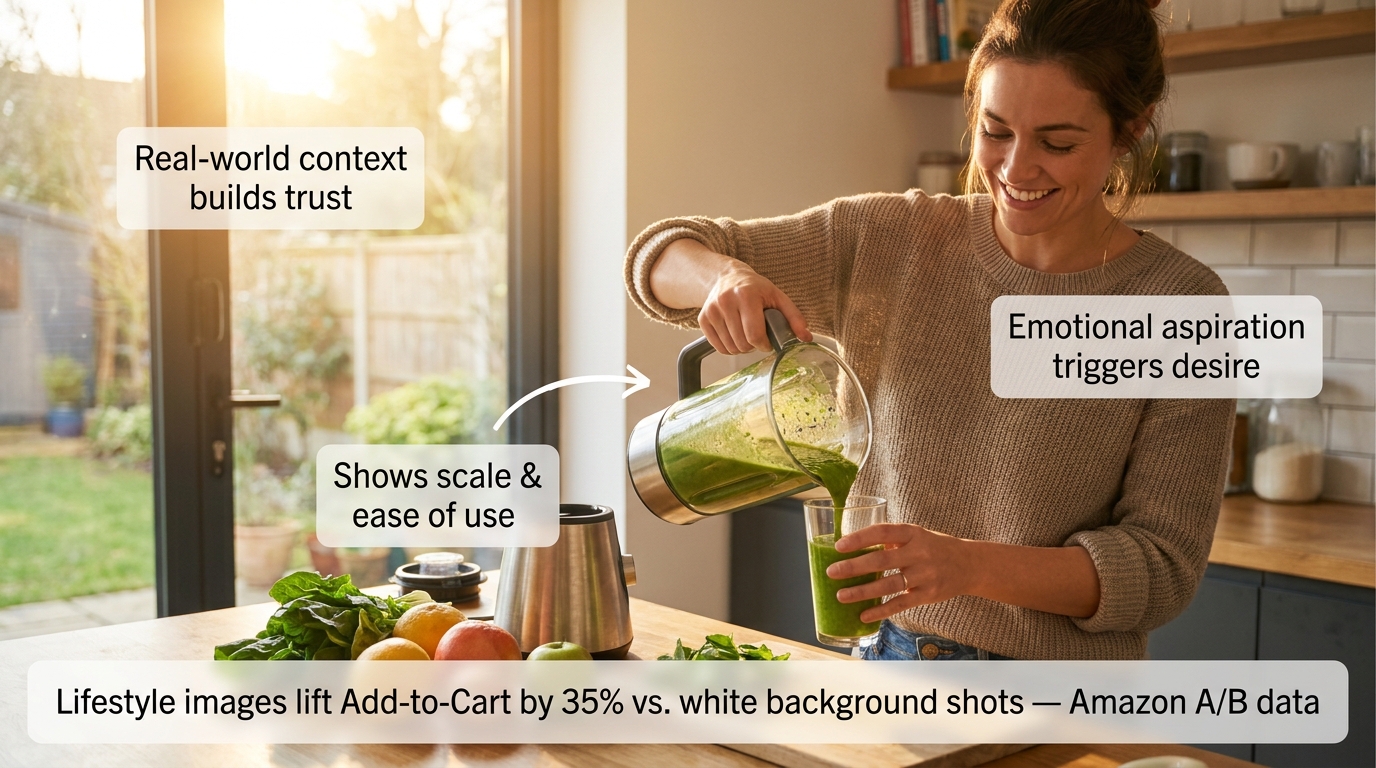

Amazon A/B testing data shows lifestyle images outperform standard white-background secondary shots by approximately 35% in Add-to-Cart actions. That’s a measurable, repeatable finding across multiple categories — and it makes intuitive sense once you understand what lifestyle images actually do psychologically.

A white-background product image answers the question: “What does this look like?” A lifestyle image answers a fundamentally different — and far more powerful — question: “What will my life look like with this in it?”

That shift from product-centric to life-centric framing triggers what psychologists call “mental simulation.” When a buyer sees a person using a product in a context they can relate to, their brain automatically begins simulating the experience of owning and using that product. Mental simulation is a key driver of desire — and desire is what converts browsers into buyers.

What Makes a Lifestyle Image Work

Not all lifestyle images trigger mental simulation effectively. The ones that do share specific characteristics:

The model reflects the target buyer. A lifestyle image of a 22-year-old fitness influencer using a blender doesn’t resonate with a 45-year-old parent buying it for family meal prep. The most effective lifestyle images feature people whose demographics, environment, and life context mirror the target customer. This requires actually knowing your buyer — not just photographing whoever was available on shoot day.

The environment is aspirationally realistic. “Aspirationally realistic” means the setting is attainable and relatable, not fantasy. A kitchen that’s beautiful but clearly someone’s actual kitchen. An office that’s clean and organized but recognizably an office. The aspiration is in the quality and atmosphere; the realism is in the believability. Pure fantasy settings (private yachts, penthouses for a $30 product) create cognitive dissonance that undermines trust.

The product is shown in active use, not posed. A product sitting on a table with a person standing next to it is a prop photo. A product being actively used — hands on the handle, product in motion, someone mid-action — is a lifestyle photo. The distinction is the difference between showing what a product is and showing what a product does.

The scale and ease of use are implicit. A lifestyle image should communicate “this is easy to use” and “this fits naturally into daily life” without stating either of those things. If the image requires the viewer to work to understand how the product is being used, it’s failing.

Mobile-Testing Your Lifestyle Images Before Publishing

68% of Amazon cart abandonments happen within 90 seconds of the first click, with mobile shoppers abandoning 2.1x faster than desktop users when images fail to communicate clearly. Before publishing any lifestyle image, view it on an actual mobile device at the size it will appear in the listing carousel. If the product isn’t immediately identifiable, if the scene reads as cluttered, or if the emotional message doesn’t land within two seconds — the image needs revision.

This test takes 60 seconds and is skipped by almost every seller. Don’t skip it.

What Rufus AI Reads in Your Images (And Why Most Sellers Are Missing It)

Amazon’s Rufus AI — the conversational shopping assistant integrated into the Amazon app and website — represents a significant shift in how product discovery works. Rufus doesn’t just match keywords. It interprets product listings holistically, including the visual content, to answer natural-language shopper queries like “What’s a good blender for someone who makes smoothies every morning?” or “Show me a water bottle that fits in a car cup holder.”

What most sellers don’t know is that Rufus uses optical character recognition (OCR) and computer vision to actively read and interpret the text and visual elements in your product images. Your infographics aren’t just for human eyes. Rufus is reading them too.

How Rufus Extracts Image Data

Through OCR, Rufus can read text overlaid on your secondary images — spec callouts, feature labels, dimension indicators, certifications. Through computer vision, it can analyze the visual content itself — identifying objects, contexts, and use cases depicted in lifestyle imagery.

This means an infographic that reads “Holds 64 oz — Fits Standard Car Cup Holders” isn’t just communicating with a human buyer scanning your gallery. It’s feeding Rufus structured attribute data that can surface your product in response to the query “What’s a large water bottle that fits in my car?” — even if those exact words don’t appear anywhere in your title or bullet points.
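Amazon hasn’t published how Rufus converts image text into attributes, so the code below is not its method. It’s a toy illustration of why a specific callout produces structured data while a vague one produces nothing. It assumes the callout text has already been read by OCR, and the pattern set and attribute names are hypothetical.

```python
import re

# Hypothetical patterns for illustration only; not Rufus's actual extraction logic.
CAPACITY = re.compile(r"(\d+(?:\.\d+)?)\s*(oz|ml|l)\b", re.IGNORECASE)
CUP_HOLDER = re.compile(r"cup\s*holders?", re.IGNORECASE)


def extract_attributes(callout: str) -> dict[str, str]:
    """Pull simple, queryable attributes out of a line of infographic text."""
    attributes: dict[str, str] = {}
    capacity = CAPACITY.search(callout)
    if capacity:
        attributes["capacity"] = f"{capacity.group(1)} {capacity.group(2).lower()}"
    if CUP_HOLDER.search(callout):
        attributes["fits_cup_holder"] = "yes"
    return attributes


print(extract_attributes("Holds 64 oz - Fits Standard Car Cup Holders"))
print(extract_attributes("Large capacity"))
```

The specific callout yields a capacity and a fit attribute that a query could match. “Large capacity” yields an empty result, because there is nothing concrete in it to extract.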

The implications are significant. For sellers competing in categories where listing text is already keyword-saturated, the image stack has become an additional indexable surface. The attributes you communicate visually are now functionally part of your product’s discoverable data set.

Optimizing Images for Rufus Readability

Several specific practices improve the quality of data Rufus can extract from your images:

- Use large, high-contrast, readable fonts in infographics. If Rufus’s OCR can’t parse your text — because it’s in a stylized script font, at low contrast, or rendered too small — those attributes aren’t being captured. Clean, sans-serif fonts at adequate size are the most OCR-friendly choice.

- Be specific in your callout text. “Large capacity” is vague and provides Rufus with limited searchable data. “Holds 64 oz — Fits standard cup holders” is specific and creates structured attributes that match specific queries. The more precise your callout language, the more useful it is to both Rufus and the buyer.

- Use lifestyle images that clearly depict use cases. Rufus’s computer vision interprets visual contexts. An image of your water bottle in a gym bag tells Rufus this is a gym product. An image of it in a home office tells it this is a desk product. Diversity of lifestyle contexts — multiple use scenarios across your image stack — expands the range of queries your listing can surface for.

- Include alt text on A+ Content images. A+ Content images support alt text, and Rufus reads those too. A descriptive alt text like “Woman using 1200-watt blender to make green smoothie in modern kitchen” provides far more contextual data than “product image 3.”

The Competitive Advantage Window

Awareness of Rufus’s image-reading capabilities among Amazon sellers remains low. Most listing optimization advice still focuses exclusively on keyword text. The sellers who begin optimizing their image stacks for AI readability now — while the majority of competitors haven’t — will build a structural advantage that compounds over time as Rufus’s role in product discovery continues to grow.

A/B Testing Your Images: The Data-Driven Loop That Separates Growing Listings From Stagnant Ones

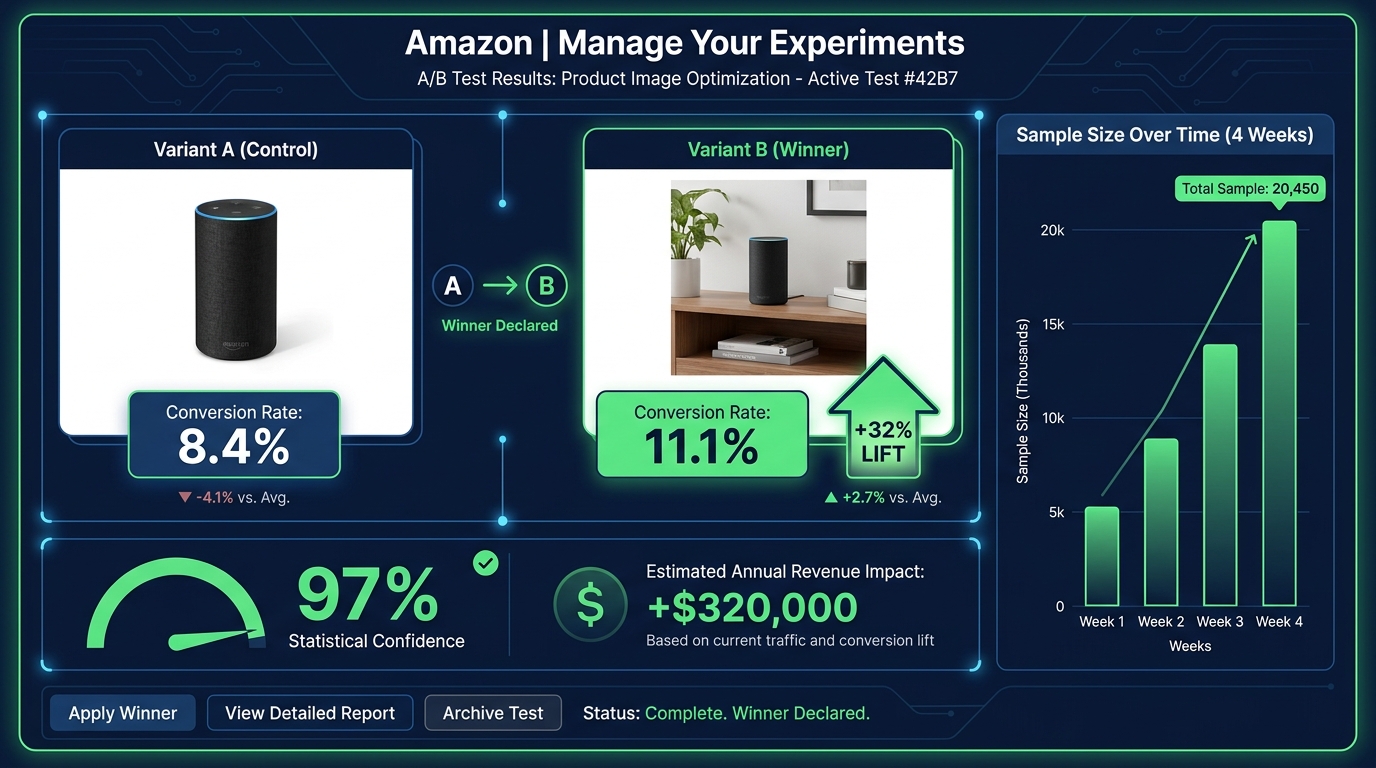

The difference between an image stack that was optimized once and an image stack that is continuously optimized is enormous — and it’s measurable. The documented case studies on Amazon image A/B testing are some of the most compelling data in the seller ecosystem.

A single image change on an eight-figure client’s listing produced a 32% conversion increase with no change in traffic. On a $1 million annual revenue baseline, a 32% lift works out to an estimated $320,000 in additional annual revenue from that one image change. The test ran for four weeks and reached 97% statistical confidence.

A separate test of lifestyle versus plain background images across a three-week window produced a consistent 15% conversion lift. An 18% conversion rate increase was documented in another test involving both image and title keyword adjustments.

These aren’t marketing claims. They’re documented A/B test results from Amazon’s own experiment infrastructure. The methodology is rigorous. The results are real.
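To make a phrase like “97% statistical confidence” concrete, here is a classic two-proportion z-test. This is a swapped-in textbook method, not necessarily how Amazon’s tool computes its figures, and the tool may use a different approach entirely, so treat this as a rough cross-check. “Confidence” here means 1 minus the two-sided p-value, the loose convention many testing dashboards use. The session and order counts are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_test(orders_a: int, sessions_a: int, orders_b: int, sessions_b: int) -> dict[str, float]:
    """Two-sided z-test for a difference between two conversion rates (pooled standard error)."""
    rate_a = orders_a / sessions_a
    rate_b = orders_b / sessions_b
    pooled = (orders_a + orders_b) / (sessions_a + sessions_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sessions_a + 1 / sessions_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return {
        "relative_lift": rate_b / rate_a - 1,
        "z": z,
        "p_value": p_value,
        "confidence": 1 - p_value,  # loose dashboard convention, not a posterior probability
    }


# Hypothetical test: control converts 300 of 10,000 sessions, variant 360 of 10,000.
result = two_proportion_test(300, 10_000, 360, 10_000)
print({name: round(value, 4) for name, value in result.items()})
```

A 20% relative lift at these volumes comes out at roughly 98% confidence. The same lift on one-tenth the traffic falls well short.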

Amazon’s “Manage Your Experiments” Tool

For brand-registered sellers, Amazon’s native A/B testing tool — Manage Your Experiments — is available through Seller Central. It enables you to test two versions of a main image (or other content elements) against each other simultaneously, splitting traffic between the variants and measuring conversion rate, click-through rate, and projected annual revenue impact.

The tool handles sample size and statistical significance, giving you a confidence score that indicates how reliable the result is. Tests typically require 4-6 weeks to reach meaningful confidence levels — longer for lower-traffic listings, shorter for high-volume ones.
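A standard sample-size estimate for comparing two conversion rates shows why low-traffic listings need longer tests. The sketch below is minimal. It assumes a fixed-length test with a 50/50 traffic split, 95% confidence, and 80% power, which are conventional defaults rather than Amazon’s documented settings. The baseline rate, target lift, and weekly traffic figures are hypothetical.

```python
import math
from statistics import NormalDist


def sessions_per_variant(baseline_rate: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate sessions each variant needs to detect `relative_lift` over `baseline_rate`."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_needed(baseline_rate: float, relative_lift: float, weekly_sessions: int,
                 alpha: float = 0.05, power: float = 0.8) -> int:
    """Weeks to collect enough traffic when sessions are split evenly across two variants."""
    per_variant = sessions_per_variant(baseline_rate, relative_lift, alpha, power)
    return math.ceil(2 * per_variant / weekly_sessions)


for weekly in (20_000, 5_000, 1_000):
    print(f"{weekly:>6} sessions/week -> {weeks_needed(0.10, 0.10, weekly)} weeks")
```

With a 10% baseline and a 10% relative lift to detect, each variant needs just under 15,000 sessions. That is about six weeks at 5,000 sessions a week, but around 30 weeks at 1,000. Detecting a larger lift shrinks the requirement sharply, because sample size scales with the inverse square of the difference.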

The key best practice: test one variable at a time. If you change the main image and the background color and the badge in the same test, and conversions improve, you won’t know which change drove it. Isolating variables makes each test actionable, not just informative.

What to Test and In What Order

A rational image testing roadmap prioritizes by potential impact:

- Main image angle and composition — highest impact, directly affects CTR from search results. Test your current hero image against a version with tighter crop, different angle, or stronger visual contrast.

- Slot 2 infographic versus lifestyle — determines whether the “Why this product?” question is best answered with data or emotion for your specific buyer. Category and product type influence the answer differently.

- Lifestyle image subject demographics — test a lifestyle image featuring a buyer who matches your target demographic vs. a more generic model. The specificity uplift can be significant in niche categories.

- Infographic design variations — test a text-heavy infographic against an icon-forward one for the same content. Mobile rendering often favors icons.

- Slot order permutations — once content is optimized, test whether reordering slots improves flow. Slide the comparison chart from slot 6 to slot 3 and measure the effect.

The Continuous Testing Mindset

The most important shift isn’t tactical; it’s cultural. Image testing shouldn’t be a one-time project. High-performing sellers launch a new image experiment as soon as the previous one concludes, rotating through their image slots systematically. The result isn’t a single 32% uplift; it’s a compounding series of 5-15% improvements that, over 12 months, can double a listing’s conversion rate.

That’s not hypothetical. It’s what continuous testing looks like at scale.
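The compounding claim is easy to sanity-check. If each winning test lifts conversion by a relative g and the wins multiply, n wins give (1 + g)^n, so doubling requires n = ln 2 / ln(1 + g). The quick calculation below assumes lifts stack multiplicatively and hold over time. Real gains can overlap or fade.

```python
import math


def wins_to_double(lift_per_win: float) -> float:
    """Successive wins of a given relative lift needed to double conversion rate.

    Assumes each lift multiplies the previous rate and nothing decays.
    """
    return math.log(2) / math.log(1 + lift_per_win)


for lift in (0.05, 0.10, 0.15):
    print(f"{lift:.0%} per win -> {wins_to_double(lift):.1f} wins")
```

That works out to roughly 14 wins at 5%, 7 at 10%, and 5 at 15%. Whether a year of testing gets you there depends on how many experiments you can run and how many of them win.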

Video in the Image Stack: Why It’s No Longer Optional

Amazon provides a dedicated video slot alongside the image gallery on product detail pages. For most categories, this slot can host a product video in the main image carousel — visible before the listing’s A+ content, before reviews, before anything below the fold.

Video is no longer a differentiator in 2026. It’s expected. Listings with videos see higher engagement metrics across the board: more time on page, lower bounce rates, and conversion rates that consistently outperform video-absent listings in the same category. The aggregated data on listings using at least six images plus video shows conversion lifts in the range of 20-50% compared to image-only listings.

What Type of Video Converts

Not all product videos are equal. The videos that perform best on Amazon share a clear structure that mirrors the psychological image sequence described earlier: problem → product introduction → demonstration → result → call to action.

Amazon video best practices for 2026:

- Keep it under 60 seconds. The median attention span for an Amazon product video is under 45 seconds. Videos longer than 90 seconds see significantly higher drop-off rates before the key demonstration moments. Front-load your strongest content.

- Design for silent viewing. A large portion of mobile shoppers view videos without sound. Captions and on-screen text should convey the full message without audio dependency. Key selling points should appear as text overlays at the moment they’re demonstrated.

- Show the product being used within the first five seconds. Don’t spend time on brand intros, logo animations, or ambient footage before showing the product in action. Five seconds is approximately when mobile viewers make the swipe-or-stay decision.

- Film in 9:16 vertical format for mobile priority. Amazon’s mobile carousel renders vertical video more effectively than horizontal. Given that mobile represents over 70% of traffic, vertical formatting should be the primary production orientation.

Video as an Objection-Handling Tool

The single most valuable function of a product video on Amazon is objection handling. Text and images can describe a product’s ease of use; video can prove it. Text can claim durability; video can demonstrate a stress test. Text can say “easy to assemble”; video can show the assembly completed in 90 seconds by an ordinary person.

Identify the top three objections holding buyers back from converting on your listing (your reviews and Q&A are the best places to look for clues), then build your video around demonstrating answers to those objections. The result is a video that sells rather than one that merely shows. The difference in conversion impact is substantial.

The Mobile-First Image Audit: How to Stress-Test Your Listing Right Now

Everything discussed in this post converges on a single practical starting point: you cannot optimize what you haven’t audited. Most sellers have never actually evaluated their listings the way their buyers experience them — which is on a 6-inch phone screen, in a search results grid, scrolling fast, often in a noisy environment with split attention.

Here is a systematic mobile-first image audit you can conduct in under 30 minutes, right now, using only your phone and a competitor’s listing for reference.

The Five-Point Mobile Audit Checklist

1. The Scroll Test. Open Amazon on your phone and search one of your primary keywords. Scroll the results at normal speed without stopping. Note whether your listing’s thumbnail catches your eye before you scroll past it. If you have to actively look for your product in the grid, your hero image isn’t earning the click from cold traffic.

2. The Thumbnail Fill Test. Without clicking on your listing, look at your hero image thumbnail in the search results grid. What percentage of the thumbnail space does the product fill? Compare it to the two or three most visible competitor thumbnails. If your product looks smaller or leaves more empty space, you have a dead pixel problem.

3. The 3-Second Infographic Test. Click into your listing and swipe to your infographic images. Set a timer for three seconds and look at each one. What’s the one thing you understood from it in that window? If you can’t answer that question — if the image required more than three seconds to extract a single clear message — it’s underperforming for mobile buyers.

4. The Lifestyle Relatability Test. Look at your lifestyle images with fresh eyes. Does the person in the image look like your target buyer? Is the environment recognizable to that buyer? Is the product being used — not just displayed? If any of those answers is no, that image slot is working below its potential.

5. The Sequence Logic Test. Swipe through your full image gallery as if you’ve never seen the product before. Does each image answer the next logical question in a buying journey? Or do you find yourself confused about why a particular image appears when it does? Note the specific slot where the sequence feels disjointed — that’s your first optimization priority.
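The Thumbnail Fill Test (and the 85% frame-fill check in the takeaways below) can be put into numbers instead of eyeballed. The Python sketch below finds the bounding box of everything that isn't near-white background and reports it as a fraction of the frame's width, height, and area. It assumes the image has already been decoded into rows of (R, G, B) tuples with an imaging library of your choice, that the background is white, and that a cutoff of 245 per channel separates background from product. `frame_fill` and `is_background` are hypothetical helper names, not part of any Amazon tool, and bounding-box coverage is only one way to express fill, so treat the result as a benchmark against competitor thumbnails rather than an official measurement.

```python
from typing import Dict, Sequence, Tuple

Pixel = Tuple[int, int, int]


def is_background(pixel: Pixel, threshold: int = 245) -> bool:
    # Near-white pixels count as background; 245 is an assumed cutoff, not an Amazon rule.
    return all(channel >= threshold for channel in pixel)


def frame_fill(image: Sequence[Sequence[Pixel]], threshold: int = 245) -> Dict[str, float]:
    """Fraction of the frame covered by the product's bounding box (hypothetical helper)."""
    height = len(image)
    width = len(image[0]) if height else 0
    # Row and column indices that contain at least one non-background pixel.
    rows = [y for y in range(height)
            if any(not is_background(image[y][x], threshold) for x in range(width))]
    cols = [x for x in range(width)
            if any(not is_background(image[y][x], threshold) for y in range(height))]
    if not rows:  # blank frame: no product detected
        return {"width": 0.0, "height": 0.0, "area": 0.0}
    box_w = cols[-1] - cols[0] + 1
    box_h = rows[-1] - rows[0] + 1
    return {
        "width": box_w / width,
        "height": box_h / height,
        "area": (box_w * box_h) / (width * height),
    }


# Toy 10x10 "image": an 8x6 dark product block on a white background.
WHITE: Pixel = (255, 255, 255)
DARK: Pixel = (40, 40, 40)
sample = [[DARK if 1 <= x <= 8 and 2 <= y <= 7 else WHITE for x in range(10)]
          for y in range(10)]
print(frame_fill(sample))  # {'width': 0.8, 'height': 0.6, 'area': 0.48}
```

Run the same function on screenshots of the two or three most visible competitor thumbnails and step 2 becomes a side-by-side comparison of numbers. A gray shadow or reflection will widen the box, so crop it out or lower the threshold if the readings look inflated.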

Competitive Benchmarking: What the Category Leaders Are Doing

For each of the five tests above, repeat them on the top-selling listing in your category. Document what their hero image composition looks like, what their slot 2 image communicates, how they use lifestyle photography, and what their infographic design choices are. Not to copy — to benchmark.

Understanding where the category standard sits tells you whether you're above, at, or below the visual baseline buyers expect when they search your category. Being below the baseline means you're passively losing conversions to competitors every day. Being above it turns your images into a competitive moat.

In most categories, a thorough audit reveals at least three immediately actionable improvements — dead pixel space to close, infographic text to increase, lifestyle images to retarget — that can be addressed without a new photo shoot. Start there.

The Compounding Effect of a Fully Optimized Image Stack

Individual image improvements tend to produce individual results. A hero image fix produces a CTR gain. A better slot 2 infographic reduces early bounces. A more targeted lifestyle image improves Add-to-Cart rates. Each gain is real and valuable. But the full value of image optimization isn’t the sum of individual improvements — it’s the compounding effect of all of them working together.

A listing with a high-converting hero image earns more clicks. More clicks mean more sessions. Better secondary images mean more of those sessions convert. Higher conversion rates strengthen your organic ranking, which improves your search placement, which produces still more organic traffic. Better images reduce return rates, which improves your seller metrics, which feeds back into ranking signals. Positive reviews from buyers whose expectations were set accurately by your images reinforce social proof, which improves conversion for future buyers.

This is the compounding flywheel — and it starts with images, not ads.

The True Cost of Unoptimized Images

Every day a listing runs with a dead pixel problem in the hero image, it’s losing a percentage of the clicks it should have earned. Every day an infographic is rendering as unreadable text on mobile, it’s failing to move buyers past the evaluation stage. Every day a lifestyle image features the wrong demographic, it’s failing to trigger the mental simulation that drives desire.

These aren’t theoretical losses. They’re real buyers who came close, evaluated, and went elsewhere — not because the product was wrong for them, but because the visual presentation didn’t make the case clearly enough at the moment it mattered.

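The cost of a professional product photography session for a full 9-image stack ranges from a few hundred dollars to $2,000, depending on category and complexity. If sessions and average selling price hold steady, revenue scales directly with conversion rate, so a 15-32% conversion improvement on a listing doing $100,000 a year is worth $15,000-$32,000 in additional annual revenue. Even pairing the top of that cost range with the bottom of that lift range, the shoot pays for itself within a year on any listing doing more than about $13,300 annually, so the math works across a wide range of traffic levels.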
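The arithmetic above is easy to rerun with your own numbers. This short Python sketch makes the key assumption explicit (sessions and average selling price stay flat, so revenue moves one-for-one with conversion rate) and also computes the break-even annual revenue at which one year of uplift covers the cost of a shoot. The function names are illustrative, and the outputs are revenue, not profit; apply your own margin to see the bottom-line effect.

```python
def annual_uplift(annual_revenue: float, conversion_lift_pct: float) -> float:
    """Extra annual revenue from a relative conversion-rate lift.

    Assumes sessions and average selling price stay flat, so revenue
    scales one-for-one with conversion rate.
    """
    return annual_revenue * conversion_lift_pct / 100


def breakeven_revenue(shoot_cost: float, conversion_lift_pct: float) -> float:
    """Annual revenue at which one year of uplift exactly covers the shoot cost."""
    return shoot_cost * 100 / conversion_lift_pct


for lift in (15, 32):
    print(f"{lift}% lift on $100,000: ${annual_uplift(100_000, lift):,.0f}")
# 15% lift on $100,000: $15,000
# 32% lift on $100,000: $32,000

print(f"Break-even for a $2,000 shoot at a 15% lift: ${breakeven_revenue(2_000, 15):,.0f}/yr")
# Break-even for a $2,000 shoot at a 15% lift: $13,333/yr
```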

Actionable Takeaways: Where to Start This Week

If you take nothing else from this piece, start with these five actions:

- Run the mobile scroll test on your primary keyword today. If you can't spot your own listing within the first few seconds of scrolling, your hero image needs work before anything else.

- Check your hero image’s frame fill. Open your main image in an image editor and measure the product’s footprint. If it’s below 85%, crop and reupload. This is a 20-minute fix with measurable CTR impact.

- View every infographic image at 400px wide. Screenshot it, shrink it, and read it. What survives? What becomes illegible? Redesign around what remains readable at that size.

- Fill every available image slot. If you're running fewer than seven images, filling the remaining slots with a properly sequenced set of lifestyle, infographic, and detail images should be a top priority. Galleries with six or more images consistently outperform shorter ones in the documented data.

- Set up one A/B test this month. Brand-registered sellers can use Manage Your Experiments at no extra cost on ASINs with enough recent traffic to qualify. Start with a hero image variant, the highest-impact single test available. Give it four weeks and let the data decide.
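The 400px infographic check comes down to simple scaling: when an image is displayed narrower than it was designed, every piece of text shrinks by the same ratio. The Python sketch below applies that ratio to each text element and flags the ones that fall under a minimum on-screen height. The 12px minimum, the 2,000px example width, the element sizes, and the names `TextElement`, `legibility_report`, and `min_design_height` are hypothetical placeholders, not Amazon requirements or tools; substitute the real dimensions from your design file and whatever legibility floor your own shrink test suggests.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class TextElement:
    label: str
    px_height: float  # text height in the full-size design file, in pixels


def displayed_height(px_height: float, source_width: int, display_width: int = 400) -> float:
    # Scaling preserves aspect ratio, so text shrinks by display_width / source_width.
    return px_height * display_width / source_width


def legibility_report(
    elements: List[TextElement],
    source_width: int,
    display_width: int = 400,
    min_px: float = 12.0,  # hypothetical legibility floor, not an Amazon spec
) -> Tuple[List[Tuple[str, float]], List[Tuple[str, float]]]:
    """Split text elements into those at or above min_px after scaling and those below."""
    readable: List[Tuple[str, float]] = []
    illegible: List[Tuple[str, float]] = []
    for element in elements:
        height = displayed_height(element.px_height, source_width, display_width)
        # Compare the exact value; round only for display.
        bucket = readable if height >= min_px else illegible
        bucket.append((element.label, round(height, 1)))
    return readable, illegible


def min_design_height(source_width: int, display_width: int = 400, min_px: float = 12.0) -> float:
    # Smallest text height in the source that still renders at min_px after scaling.
    return min_px * source_width / display_width


infographic = [
    TextElement("Headline", 90),
    TextElement("Body copy", 40),
    TextElement("Footnote", 24),
]
readable, illegible = legibility_report(infographic, source_width=2000)
print("Readable at 400px:", readable)    # [('Headline', 18.0)]
print("Illegible at 400px:", illegible)  # [('Body copy', 8.0), ('Footnote', 4.8)]
print("Minimum source text height:", min_design_height(2000))  # 60.0
```

In this example only the headline survives; the body copy would need to be drawn about 60px tall in the 2,000px-wide source to stay at 12px on a 400px-wide screen.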

The sellers who treat their image stack as a living, continuously tested asset, not a one-time creative project, are the ones who build listings that compound in performance over time. In a marketplace where traffic is expensive, margins are compressed, and competition deepens every quarter, that compounding effect isn't just a nice-to-have. In 2026, it's the difference between a listing that grows and one that slowly loses ground.

Your images are already either earning money or losing it. Now you know which questions to ask to find out which one.