On April 28, 2026, IBM launched something that the developer tooling market hadn’t seen from a major enterprise vendor before: a platform specifically designed not just to accelerate software development, but to regulate its costs across every stage of the lifecycle. The product is called IBM Bob, and while the announcement generated the usual wave of press coverage, most of the reporting focused on the productivity numbers and missed what makes the platform structurally different from every AI coding assistant that came before it.

The distinction matters for engineering leaders and CTOs trying to justify AI spending in a market already crowded with tools promising 10x developer productivity. Bob isn’t a code completion engine with an enterprise plan bolted on. It is an agentic orchestration platform built to govern the entire software development lifecycle — from the first planning conversation through deployment and ongoing operations — with cost regulation as a first-class architectural concern, not an afterthought.

This article takes a detailed look at what IBM Bob actually does, where its cost regulation logic lives, how its real-world deployments have performed, and — critically — where its limitations are. If you’re evaluating Bob for your engineering organization, or trying to understand where it fits relative to GitHub Copilot, Cursor, or other tools already in your stack, the picture is more nuanced than IBM’s launch materials suggest. That nuance is worth understanding before you commit budget.

We’ll work through the full picture: the problem Bob was architected to solve, the mechanisms behind its cost logic, the governance layer that separates it from pure productivity tools, and an honest assessment of what it can and cannot do for engineering organizations today.

The Problem IBM Bob Was Actually Built to Solve

To understand IBM Bob’s design choices, you first need to understand the specific economic problem it was engineered around. That problem isn’t a shortage of capable AI coding assistants — there are plenty of those. The problem is structural waste inside enterprise software development organizations, and it’s been present long before AI tools entered the conversation.

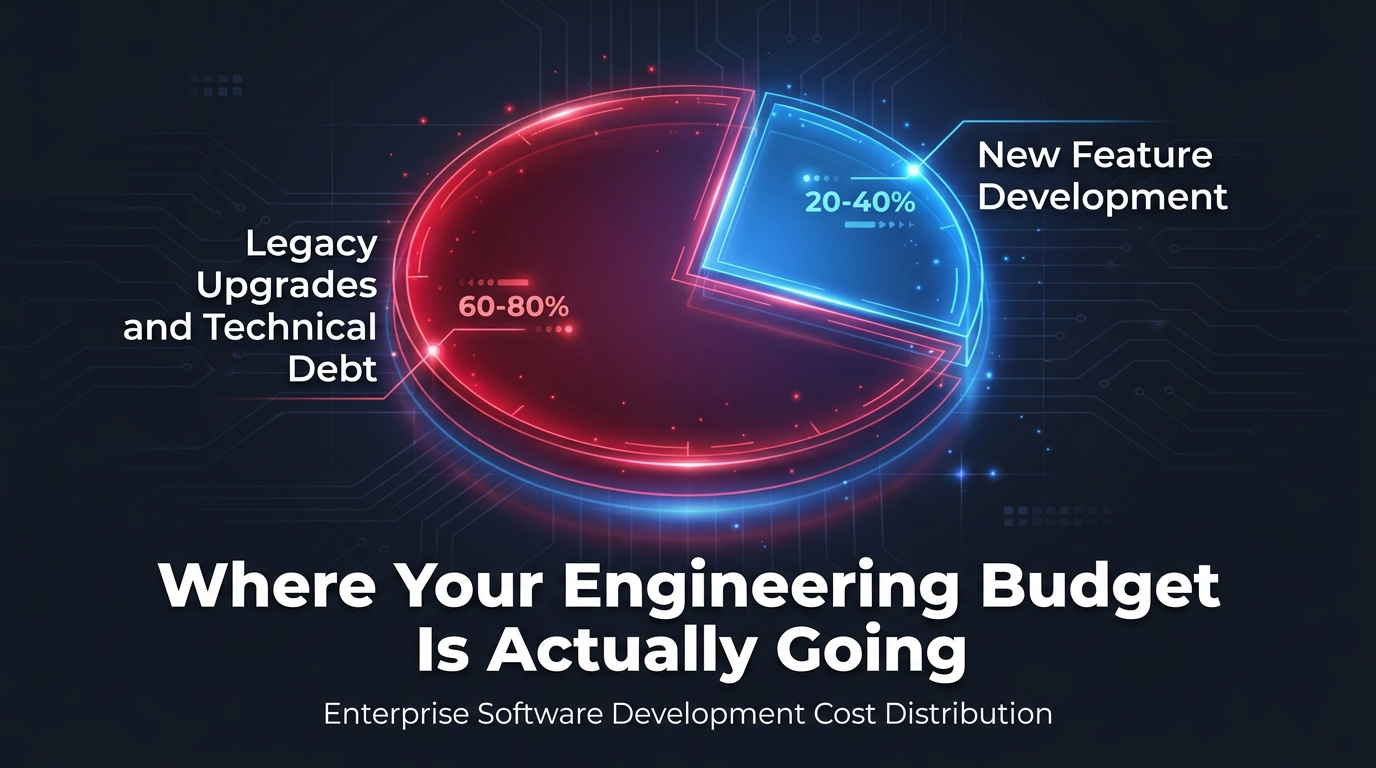

The 60-80% Budget Trap

According to IBM, legacy systems and technical debt consume between 60 and 80 percent of engineering budgets across enterprise organizations. That statistic, a core part of Bob’s rationale, reflects a well-documented reality: the majority of software engineering spend in mature organizations goes not toward building new capability, but toward maintaining, upgrading, patching, and extending systems that were built in a different era under different architectural assumptions.

The implications are significant. An organization spending $10 million per year on engineering is effectively spending $6–8 million just to keep the existing system functional and compliant — leaving only $2–4 million for the new features, services, or platform improvements that leadership actually cares about. This isn’t a failure of individual engineers. It’s a systemic imbalance baked into the way enterprise software accumulates complexity over time.

Fragmentation Makes It Worse

The second dimension of the problem is tooling fragmentation. Enterprise development environments typically involve separate tools for planning, separate environments for coding, separate systems for testing and QA, separate deployment pipelines, and separate monitoring stacks. Each stage has its own context, its own interface, and its own cost center. When AI tools enter this environment, they typically plug into one stage — usually coding — without addressing the handoffs between stages where time and cost accumulate.

IBM’s research and internal experience pointed toward a consistent finding: the cost of software delivery isn’t primarily a coding problem. It’s a coordination problem — between stages, between roles, and between the new feature work and the legacy maintenance burden running in parallel. That diagnosis is what drove Bob’s architecture toward full-lifecycle orchestration rather than point-solution productivity.

Technical Debt as a Hidden Multiplier

Research on AI business cases indicates that ignoring technical debt is associated with an 18–29% decline in ROI. Conversely, enterprises that proactively account for and manage technical debt when building AI cases achieve up to 29% higher ROI on those investments. The implication for Bob’s positioning is important: the platform wasn’t built primarily to boost individual developer output metrics. It was built to attack the structural cost drag that makes those metrics largely irrelevant to actual budget outcomes.

What IBM Bob Actually Is — Beyond the Launch Announcement

IBM describes Bob as an “AI-first development partner,” which is technically accurate but undersells the architectural specificity. Bob is an agentic AI orchestration platform that embeds specialized AI agents across each stage of the software development lifecycle, coordinates their work through a multi-model routing layer, and enforces governance rules across all of those interactions — with built-in cost visibility at every step.

Agentic Modes and Role-Based Personas

At the interaction layer, Bob operates through persona-based modes tailored to specific roles in the development organization. An architect interacting with Bob gets a different set of capabilities, prompts, and agent workflows than a security engineer or a backend developer. These aren’t just UI skins — the underlying agents and the models they route to are configured differently based on the task context and role requirements.

This persona-based architecture solves a real usability problem with generic AI coding assistants: the same tool often produces outputs of radically different quality depending on how specific and well-structured the prompt is. By pre-configuring role-appropriate workflows, Bob reduces the variance in output quality and ensures that governance requirements specific to each function (security review for the security engineer, dependency analysis for the architect) are surfaced automatically rather than left to the individual user to remember.

Reusable Skills: The Institutional Knowledge Layer

One of Bob’s more technically interesting features is its reusable skills system. Skills are instruction sets — essentially governed workflow templates — that can be loaded per conversation, shared across teams, and versioned (including via Maven repositories for Java/Quarkus environments). They act as an institutional knowledge layer, encoding the organization’s preferred approaches to common tasks like code reviews, API modernization, or security remediation into reusable, auditable assets.

The practical value here is significant. Instead of each developer prompting Bob differently for the same recurring task, skills ensure that the AI applies consistent standards across the team. They also make best practices portable: a skill developed by a senior architect for a particular modernization pattern can be deployed across the engineering organization without requiring that architect’s direct involvement in every instance.
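To make the idea of versioned, shareable skills concrete, here is a minimal Python sketch of a skill registry. It is an illustration only: the `Skill` and `SkillRegistry` names, the tuple-based version scheme, and the example skill are all hypothetical and do not describe Bob’s actual skill format or its Maven-based distribution.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """Hypothetical governed workflow template."""
    name: str
    version: tuple      # (major, minor, patch), an assumed versioning scheme
    instructions: str   # the instruction set loaded into a conversation


class SkillRegistry:
    """Hypothetical team-wide store of published skills."""

    def __init__(self):
        self._skills = {}

    def publish(self, skill: Skill) -> None:
        key = (skill.name, skill.version)
        if key in self._skills:
            # Published versions stay immutable so they remain auditable.
            raise ValueError(f"{skill.name} {skill.version} already published")
        self._skills[key] = skill

    def latest(self, name: str) -> Skill:
        versions = [v for (n, v) in self._skills if n == name]
        if not versions:
            raise KeyError(name)
        return self._skills[(name, max(versions))]


registry = SkillRegistry()
registry.publish(Skill("api-modernization", (1, 0, 0), "Review SOAP endpoints first."))
registry.publish(Skill("api-modernization", (1, 2, 0), "Review SOAP endpoints, then map to REST."))
current = registry.latest("api-modernization")
print(current.version)
```

Treating published versions as immutable is one way to keep a skill auditable: any output can be traced back to the exact instruction set that produced it.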

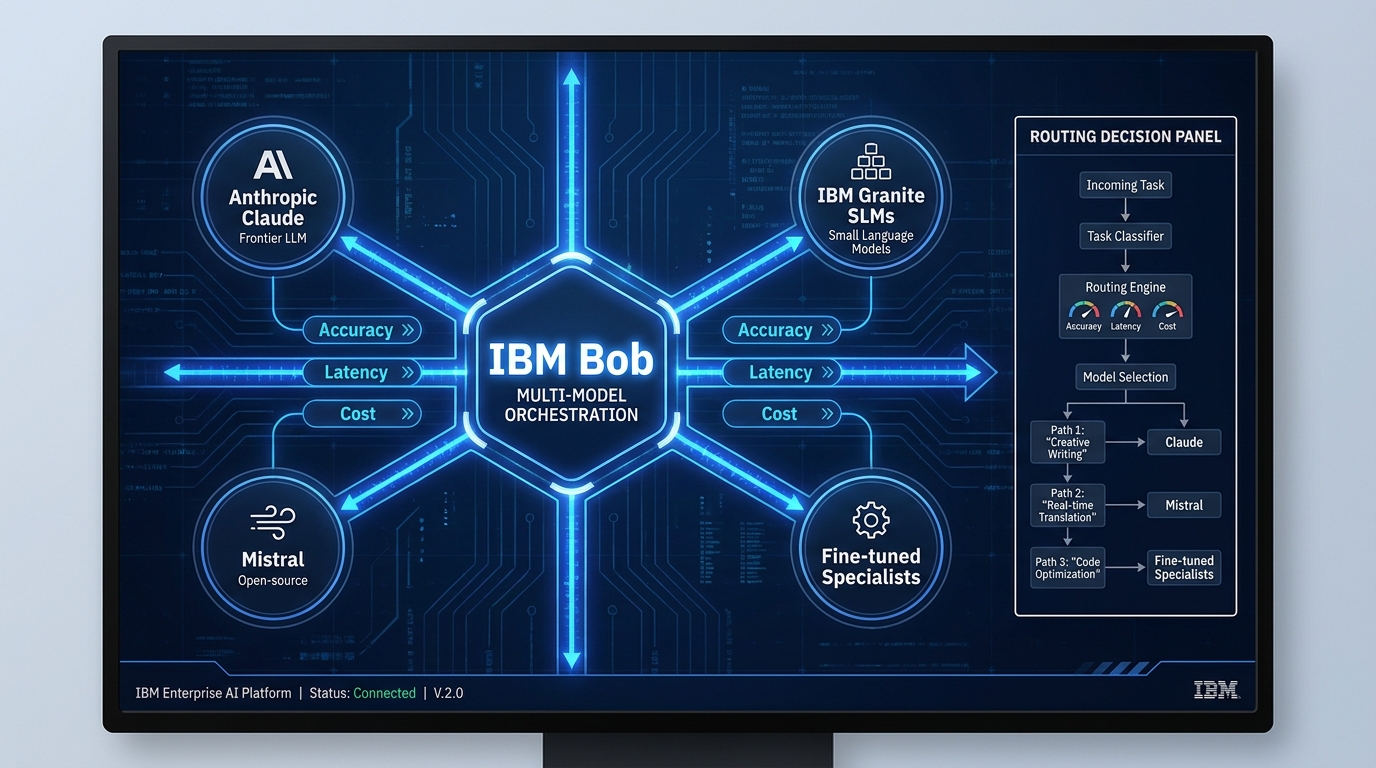

BobShell: The CLI and Auditability Layer

BobShell is Bob’s command-line interface component, and it does something that matters more in regulated industries than it might initially appear to: it makes every AI-assisted action traceable and auditable. In enterprise environments operating under SOC 2, HIPAA, financial services compliance frameworks, or government procurement requirements, the inability to audit what an AI system did and why is often a disqualifying factor. BobShell addresses this by creating a structured, logged record of agentic actions taken during development workflows.

This isn’t just a compliance checkbox feature. Auditability also supports internal cost attribution — enabling engineering leaders to see where AI-assisted work is concentrated, where it’s producing the most acceleration, and where it’s being underused. That visibility is a prerequisite for managing AI tooling costs intelligently, which brings us to the core of Bob’s cost regulation architecture.
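A structured audit record of the kind described above, and the cost attribution it enables, might look like the following Python sketch. The field names, the JSON-lines format, and the sample records are assumptions made for illustration. They are not BobShell’s actual log schema.

```python
import json
from collections import defaultdict
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical log entry for one agentic action."""
    timestamp: str   # ISO 8601
    user: str
    workflow: str
    action: str
    bobcoins: float


def to_log_line(record: AuditRecord) -> str:
    """Serialize a record as one JSON line with a stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


def spend_by_workflow(records) -> dict:
    """Attribute consumption to workflows by summing Bobcoins per workflow."""
    totals = defaultdict(float)
    for record in records:
        totals[record.workflow] += record.bobcoins
    return dict(totals)


# Sample data invented for the example.
records = [
    AuditRecord("2026-05-01T09:00:00Z", "dev-a", "java-upgrade", "rewrite deprecated call", 6.0),
    AuditRecord("2026-05-01T09:05:00Z", "dev-a", "java-upgrade", "generate regression test", 4.0),
    AuditRecord("2026-05-01T09:10:00Z", "dev-b", "code-review", "summarize diff", 1.5),
]
totals = spend_by_workflow(records)
print(to_log_line(records[0]))
print(totals)
```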

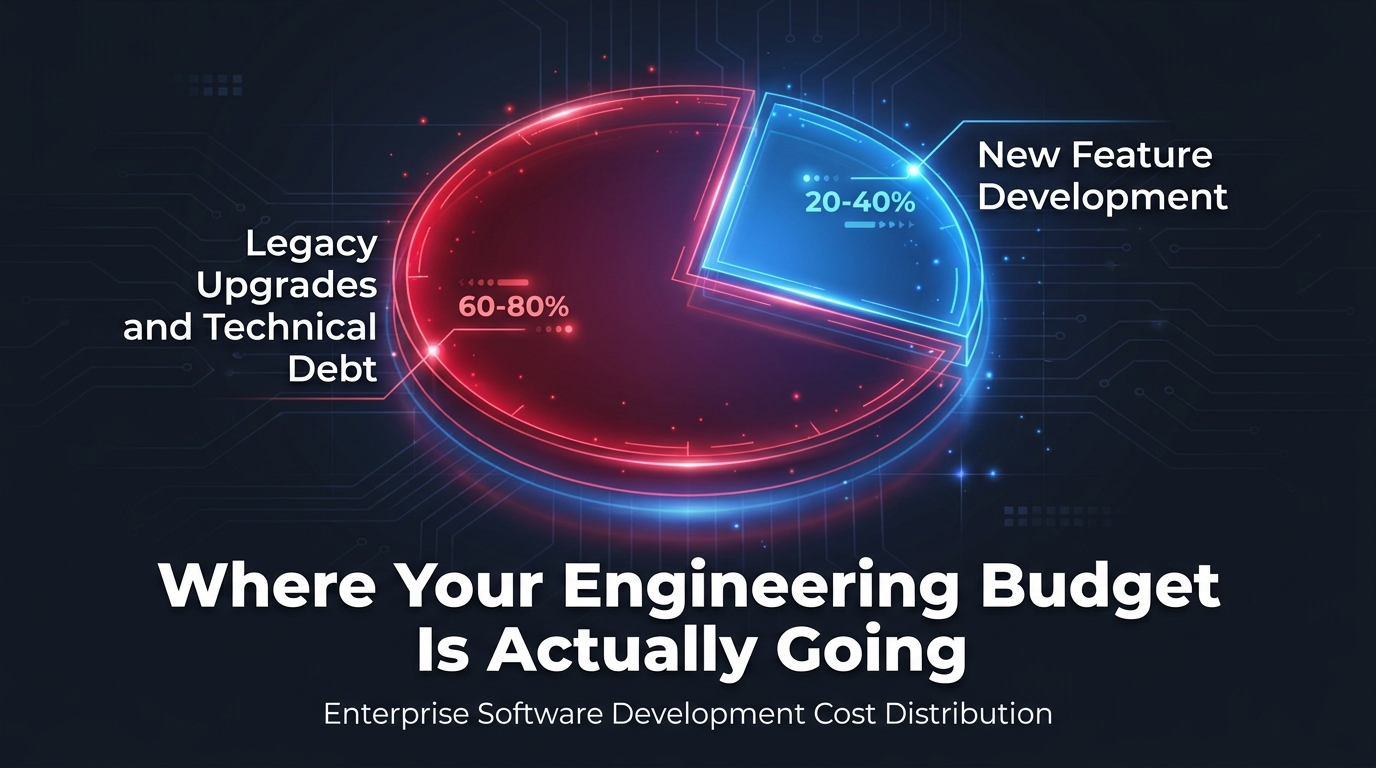

Multi-Model Orchestration: Where the Cost Logic Actually Lives

The most architecturally significant feature of IBM Bob — and the one most underreported in launch coverage — is its multi-model orchestration layer. This is the mechanism through which Bob actually regulates costs rather than simply tracking them.

Dynamic Task Routing

Bob draws from a diverse pool of AI models: Anthropic Claude (a frontier LLM for complex reasoning tasks), Mistral (open-source, lower cost for appropriate use cases), IBM Granite small language models (optimized for specific enterprise tasks), and specialized fine-tuned models for narrow functions like next-edit prediction and security vulnerability screening. The orchestration layer dynamically routes each task to the most appropriate model based on three criteria: accuracy requirements, latency requirements, and cost.

This routing logic is what sets Bob apart from assistants that send every task through a single default model regardless of task complexity or cost sensitivity. If a task requires only lightweight code suggestion or a simple pattern match, routing it through a frontier LLM like Claude wastes token budget. Bob’s orchestration layer makes that distinction automatically, using smaller, faster, cheaper models for tasks they can handle adequately and reserving frontier model capacity for tasks that genuinely require it.
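The routing idea can be sketched in a few lines of Python: filter the model pool by the accuracy and latency a task needs, then pick the cheapest model that qualifies. This is a sketch under stated assumptions, not IBM’s routing algorithm. The model names, scores, latencies, and costs below are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    accuracy: float        # hypothetical quality score between 0 and 1
    latency_ms: int        # hypothetical typical latency
    cost_per_call: float   # hypothetical cost in USD


@dataclass(frozen=True)
class Task:
    description: str
    min_accuracy: float
    max_latency_ms: int


# Illustrative pool; none of these figures are measured or published.
MODEL_POOL = [
    ModelProfile("small-edit-model", accuracy=0.70, latency_ms=80, cost_per_call=0.001),
    ModelProfile("mid-size-model", accuracy=0.85, latency_ms=400, cost_per_call=0.01),
    ModelProfile("frontier-model", accuracy=0.97, latency_ms=2500, cost_per_call=0.15),
]


def route(task: Task, pool=MODEL_POOL) -> ModelProfile:
    """Return the cheapest model meeting the task's accuracy and latency needs."""
    eligible = [
        m for m in pool
        if m.accuracy >= task.min_accuracy and m.latency_ms <= task.max_latency_ms
    ]
    if not eligible:
        raise ValueError(f"no model satisfies the constraints for: {task.description}")
    return min(eligible, key=lambda m: m.cost_per_call)


quick = route(Task("rename a local variable", min_accuracy=0.6, max_latency_ms=200))
deep = route(Task("refactor across modules", min_accuracy=0.95, max_latency_ms=5000))
print(quick.name, deep.name)
```

Cost acts as the tie-breaker rather than the first filter, so a cheap model is never chosen for a task it cannot handle adequately.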

Pass-Through Pricing and Cost Transparency

Bob uses a pass-through pricing model, meaning the cost of the underlying model inference is passed directly to the user or organization rather than bundled into an opaque monthly fee. This model, combined with the Bobcoin usage-credit system (discussed in detail in the pricing section below), gives engineering leaders task-level visibility into where AI compute spend is actually going within their SDLC.

In practice, this means you can see that a particular agent workflow consumed 12 Bobcoins (approximately $6) in frontier LLM calls versus 2 Bobcoins ($1) in a lighter-weight model run — and you can assess whether the output quality differential justified the cost differential. That’s a meaningfully different conversation than the one you can have with flat-rate-per-seat tools, where there’s no mechanism to connect spend to task outcomes.
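The arithmetic behind that comparison is simple enough to write down. The Python sketch below converts Bobcoins to dollars at the approximate $0.50 rate and adds one possible way to relate spend to outcomes. The "accepted changes" metric and its sample counts are hypothetical, introduced only to show the kind of comparison consumption data makes possible.

```python
BOBCOIN_USD = 0.50  # approximate per-Bobcoin rate quoted in this article


def run_cost_usd(bobcoins: float, rate: float = BOBCOIN_USD) -> float:
    """Convert a workflow's Bobcoin consumption to dollars."""
    return bobcoins * rate


def cost_per_accepted_change(bobcoins: float, accepted_changes: int) -> float:
    """Hypothetical outcome metric: dollars spent per change a reviewer accepted."""
    if accepted_changes <= 0:
        raise ValueError("need at least one accepted change")
    return run_cost_usd(bobcoins) / accepted_changes


frontier_usd = run_cost_usd(12)   # frontier LLM run from the example above
light_usd = run_cost_usd(2)       # lighter-weight model run
extra_spend = frontier_usd - light_usd

# Invented outcome counts: 4 accepted changes versus 1.
frontier_unit = cost_per_accepted_change(12, accepted_changes=4)
light_unit = cost_per_accepted_change(2, accepted_changes=1)
print(frontier_usd, light_usd, extra_spend, frontier_unit, light_unit)
```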

Why This Matters for Budget Management

The pass-through, consumption-based model creates natural cost discipline in a way that per-seat licensing does not. With a flat per-seat tool, there’s no cost signal when a developer uses an expensive model for a task that a cheaper one would handle fine. With Bob’s model, every workflow decision carries a cost signal — which, when surfaced to engineering leads through Bob’s reporting layer, creates accountability for how AI compute is consumed across the team.

This is a deliberate design philosophy, not just a pricing decision. IBM’s position is that AI tools in enterprise environments should be legible to finance and procurement stakeholders, not just to developers. The pass-through model and Bobcoin system are the mechanisms that make that legibility possible.

The Governance and Security Architecture

For most enterprise organizations evaluating AI development tools in 2026, governance and security aren’t optional features — they’re table stakes. IBM Bob’s governance architecture is one of the most detailed among current AI coding and development platforms, and understanding its components helps clarify where the platform is and isn’t suitable for specific organizational contexts.

Prompt Normalization and Data Scanning

Before any prompt reaches an external model, Bob applies prompt normalization — a preprocessing step that standardizes prompt structure and strips out patterns likely to produce inconsistent or policy-violating outputs. This operates alongside sensitive data scanning, which identifies and flags (or removes) personally identifiable information, credentials, or other sensitive content before it leaves the organization’s environment. For organizations operating under GDPR, HIPAA, or sector-specific data handling regulations, this layer addresses one of the core compliance concerns with using frontier LLMs in production development workflows.
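As a rough illustration of this kind of preprocessing, the Python sketch below collapses whitespace and redacts a few sensitive patterns before a prompt would be sent anywhere. The patterns and the `[REDACTED:...]` placeholder format are assumptions chosen for the example. A production scanner would need far broader coverage, and nothing here reflects how Bob implements the step.

```python
import re

# Deliberately small, illustrative pattern set.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "aws_access_key": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "password_assignment": re.compile(
        r"\b(?:password|passwd|secret)\s*[=:]\s*\S+", re.IGNORECASE
    ),
}


def normalize(prompt: str) -> str:
    """Collapse runs of whitespace so prompts have a consistent shape."""
    return " ".join(prompt.split())


def scan_and_redact(prompt: str):
    """Return the redacted prompt and the labels of every pattern that matched."""
    redacted = normalize(prompt)
    findings = []
    for label, pattern in PATTERNS.items():
        if pattern.search(redacted):
            findings.append(label)
            redacted = pattern.sub(f"[REDACTED:{label}]", redacted)
    return redacted, findings


text = "Fix login for  alice@example.com,\n password = hunter2"
clean, found = scan_and_redact(text)
print(clean)
print(found)
```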

Real-Time Policy Enforcement and AI Red-Teaming

Bob’s policy enforcement layer operates in real time, applying configurable organizational policies to agentic actions as they execute. This means that if an organization has policies around which external APIs agents are permitted to call, which data stores they can access, or what kinds of code patterns they’re permitted to generate, those policies are enforced at the point of action rather than reviewed after the fact.
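Enforcement at the point of action can be pictured as a check that runs immediately before an agent performs a call, rather than a review of logs afterward. The Python sketch below assumes a simple host allowlist. The host names, the `PolicyViolation` exception, and the `call_api` wrapper are hypothetical and stand in for whatever policy engine an organization configures.

```python
from urllib.parse import urlparse

# Hypothetical organizational policy: hosts that agents may call.
ALLOWED_API_HOSTS = {"api.internal.example.com", "artifacts.example.com"}


class PolicyViolation(Exception):
    """Raised when an agent action breaks a configured policy."""


def enforce_api_policy(url: str, allowed_hosts=ALLOWED_API_HOSTS) -> str:
    """Check the URL against the allowlist and return it unchanged if permitted."""
    host = urlparse(url).hostname
    if host not in allowed_hosts:
        raise PolicyViolation(f"call to {host!r} is not permitted")
    return url


def call_api(url: str, send):
    """Run the policy check first, so a blocked call is never sent."""
    enforce_api_policy(url)
    return send(url)


result = call_api("https://api.internal.example.com/v1/build", lambda u: "sent")
print(result)
```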

The platform also includes automated AI red-teaming — a practice in which the system attempts to identify vulnerabilities in AI-generated code and governance configurations before they reach production. For security-sensitive environments, this moves security review from a manual, post-generation process to an automated, continuous one integrated into the development workflow itself.

Human-in-the-Loop Checkpoints

One of Bob’s governance design choices worth highlighting is its configurable approach to human oversight. Rather than requiring human approval for every agentic action (which would eliminate the efficiency benefits) or auto-approving everything (which would create governance risk), Bob allows organizations to configure approval requirements by task type. Routine, well-understood workflows can run autonomously. Higher-risk actions — code changes to production infrastructure, modifications to security-sensitive components, actions involving regulated data — can be routed to a human approval checkpoint before execution.
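A per-task-type approval configuration like the one described can be sketched as a small lookup table. The task type names below are invented for illustration, and the choice to send unknown task types to a human (failing closed) is an assumption of this sketch, not documented Bob behavior.

```python
from enum import Enum


class Approval(Enum):
    AUTO = "auto"     # runs without a human checkpoint
    HUMAN = "human"   # waits for explicit approval before execution


# Hypothetical organizational configuration.
APPROVAL_POLICY = {
    "format_code": Approval.AUTO,
    "generate_unit_tests": Approval.AUTO,
    "modify_production_infra": Approval.HUMAN,
    "touch_security_component": Approval.HUMAN,
    "access_regulated_data": Approval.HUMAN,
}


def requires_human(task_type: str, policy=APPROVAL_POLICY) -> bool:
    """Unknown task types default to human review."""
    return policy.get(task_type, Approval.HUMAN) is Approval.HUMAN


for task in ("format_code", "modify_production_infra", "brand_new_task"):
    print(task, requires_human(task))
```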

This graduated approach to oversight reflects an important operational reality: the right level of human control depends on the task, the risk profile of the environment, and the maturity of the team’s experience with AI-assisted work. Bob’s configurability here is a meaningful differentiator from tools with one-size-fits-all approval models.

Role-Based Agents Across the Full SDLC

IBM Bob’s architecture spans seven distinct phases of the software development lifecycle: discovery, planning, design, coding, testing, deployment, and operations. Specialized agents operate within each phase, coordinated by the orchestration layer rather than managed individually by developers. Understanding what each phase’s agents actually do reveals where the most concrete value accumulates.

Discovery and Planning Agents

The discovery phase is where Bob does something most AI coding tools simply don’t touch: it analyzes existing codebases, dependency structures, and architecture documentation to generate an understanding of the current system state before any new work begins. For legacy modernization projects, which target the legacy and technical-debt burden that, as noted, consumes 60–80% of enterprise engineering budgets, this baseline analysis is foundational. The APIS IT case study (covered in the next section) illustrates how dramatically this phase alone can compress project timelines when it’s automated effectively.

Planning agents translate discovery outputs into structured development plans, breaking work into agent-executable tasks with dependency awareness. This is the phase where reusable skills are most often invoked, since planning patterns for common modernization scenarios (Java version upgrades, API style migrations, mainframe refactoring) can be encoded as skills and applied consistently across projects.

Design and Coding Agents

Design agents assist with architectural decisions, generating diagrams, evaluating design options against organizational standards, and producing technical specifications. Coding agents are the component most familiar to developers already using AI tools — they generate code, suggest edits, and complete functions — but within Bob’s ecosystem, coding agents operate with the context of the full plan and governance requirements established in prior phases rather than in isolation.

A specialized, fine-tuned next-edit prediction model is active during the coding phase. It anticipates the developer’s next intended change based on the surrounding context. This is distinct from general code completion and is designed to reduce the friction of agentic coding in complex, multi-file change scenarios.

Testing, Deployment, and Operations Agents

Testing agents generate test cases, establish coverage baselines, and run regression suites — a phase where the Blue Pearl case study produced one of its most striking results (92% regression test coverage established from zero, which we’ll examine in detail). Deployment agents manage pipeline configuration and coordinate the handoffs between development and production environments. Operations agents support ongoing monitoring, incident triage, and the continuous flow of feedback from production back into the development cycle.

The IBM Instana team, which uses Bob internally, reported a 70% reduction in time spent on selected operational tasks — a figure that, while dramatic, reflects the kind of high-repetition, process-intensive work where agentic automation consistently produces its best results.

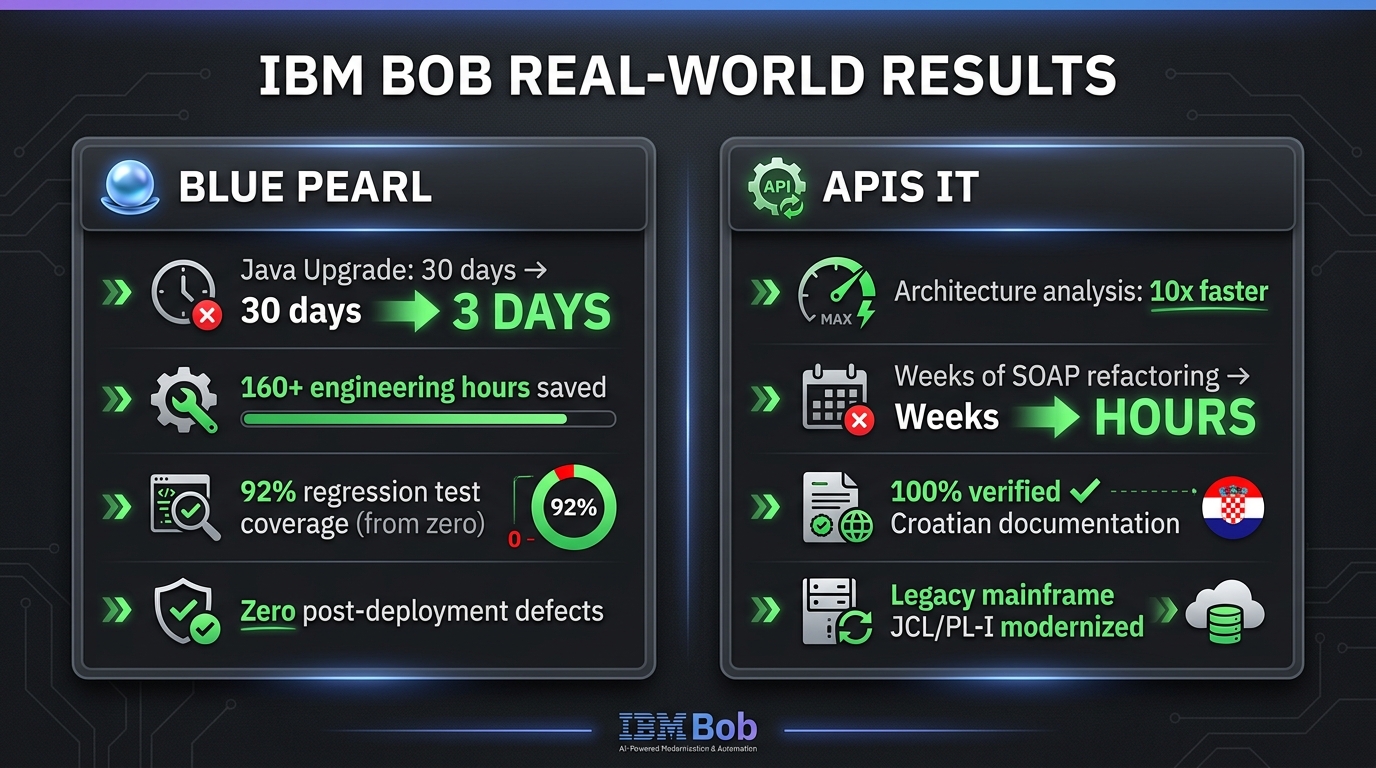

Real-World Results: Blue Pearl and APIS IT

IBM’s launch of Bob was accompanied by two detailed case studies — Blue Pearl and APIS IT — that provide the most concrete picture of what the platform produces in production deployments. Both are worth examining in detail, because the specific numbers tell a more nuanced story than the headlines suggest.

Blue Pearl: Java Modernization in Three Days

Blue Pearl, a cloud solutions firm, used IBM Bob to modernize their BlueApp platform from a legacy Java version to Java 25 LTS. The nature of this task is worth understanding clearly: a major Java version upgrade isn’t simply a recompilation. It involves identifying deprecated API usage across the entire codebase, updating or replacing those calls, resolving dependency conflicts with third-party libraries and vendor integrations, establishing a regression test baseline, validating that the upgraded application performs equivalently to the original, and confirming that no security vulnerabilities have been introduced in the process.

For a moderately complex enterprise codebase, this work typically takes four to six weeks of senior engineering time. Blue Pearl completed the equivalent work in three days using Bob — a roughly 90% compression in elapsed time. The supporting numbers reinforce why that compression was achievable: 127 deprecated API calls were identified and resolved across the codebase and external vendor integrations (a task that is painstaking to do manually and highly automatable with the right agents), 92% regression test coverage was established from a starting point of zero existing tests, the upgraded application showed 15% faster response times, and zero CVE-bearing dependencies remained in the released build.

The 160+ engineering hours saved represent not just reduced cost on this project but freed capacity redirected toward new feature development, the work otherwise confined to the 20–40% of budget left over after maintenance and modernization.

APIS IT: Mainframe Modernization for Government Systems

The APIS IT case study involves a fundamentally harder problem. APIS IT is a Croatian IT provider managing critical national government systems — systems built on mainframe technology using JCL/PL/I, EGL/CICS, and COBOL, often with decades-old undocumented business logic that exists only in the institutional memory of engineers who may no longer be with the organization.

IBM Bob’s discovery and documentation agents produced 100% operator-verified documentation in Croatian for JCL/PL/I jobs that had previously been entirely undocumented — a task that is both critically important for modernization and extraordinarily time-consuming to do manually. For a 20-year-old EGL/CICS system, Bob delivered 10x faster multi-format architecture analysis and process documentation compared to manual methods.

The modernization work itself showed equally striking compression: SOAP service refactoring to .NET 8 REST APIs — work that previously took weeks — was completed in hours. File counts and dependency complexity were reduced by 30–50% in the refactored systems. For a government IT context where compliance, accuracy, and auditability are non-negotiable, the combination of speed and verification quality is what makes these results meaningful rather than just impressive.

What the Case Studies Actually Prove

It’s important to read these results carefully. Both case studies are legacy modernization scenarios — the exact category of work that consumes 60–80% of enterprise engineering budgets and where Bob was most specifically designed to perform. They are not evidence of general-purpose productivity improvement across all development contexts. The results are real, but the applicability varies significantly depending on whether your engineering challenges look more like Blue Pearl and APIS IT or more like greenfield product development.

IBM’s Own 80,000-Employee Deployment: What the Internal Data Shows

IBM’s internal deployment of Bob provides the largest dataset available on the platform’s performance, and it’s more methodologically interesting than most vendor self-reported productivity figures. IBM began with a 100-developer pilot in June 2025, specifically structured to generate reliable performance data before broader rollout. That pilot ran under controlled conditions, measuring productivity gains across three distinct categories of work: new feature development, security remediation, and modernization tasks.

The 45% Productivity Figure: Context Matters

The headline result — an average 45% productivity gain across surveyed users — deserves careful interpretation. Forty-five percent is an average across three very different task categories. Modernization tasks, which are the most automatable, likely drove that average up. New feature development, which involves more creative and contextually specific work, likely contributed a lower figure. Security remediation sits somewhere in between, with highly structured vulnerability classes responding well to automation and novel attack patterns requiring more human judgment.

IBM’s decision to report an average across these three categories, rather than breaking them out separately, is a methodological choice that makes the number less useful for organizations trying to forecast the productivity impact in their specific context. If your engineering work is primarily greenfield development, a 45% average that includes heavy modernization workloads is probably an overestimate of what you’d see. If your work is heavily weighted toward maintenance and legacy system management, it may be an underestimate.
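A short Python sketch shows why a blended average can mislead. IBM has not published per-category figures, so the gains below are purely hypothetical numbers chosen so that an equal mix of the three categories averages 45%. Only the shape of the calculation matters.

```python
# Hypothetical per-category gains; NOT IBM data.
HYPOTHETICAL_GAINS = {
    "modernization": 0.70,
    "security_remediation": 0.45,
    "new_features": 0.20,
}


def blended_gain(mix: dict, gains=HYPOTHETICAL_GAINS) -> float:
    """Weighted average gain for a workload mix whose shares sum to 1."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("workload shares must sum to 1")
    return sum(share * gains[category] for category, share in mix.items())


equal_mix = {"modernization": 1 / 3, "security_remediation": 1 / 3, "new_features": 1 / 3}
greenfield_heavy = {"modernization": 0.1, "security_remediation": 0.1, "new_features": 0.8}
legacy_heavy = {"modernization": 0.7, "security_remediation": 0.2, "new_features": 0.1}

for label, mix in [("equal", equal_mix), ("greenfield", greenfield_heavy), ("legacy", legacy_heavy)]:
    print(label, round(blended_gain(mix), 3))
```

Under these invented inputs the same tool would show roughly 27.5% for a greenfield-heavy team and 60% for a legacy-heavy one, which is why a single 45% headline is a weak basis for a forecast.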

The IBM Instana Team Data Point

The more granular data point from IBM’s internal deployment comes from the Instana team, which reported a 70% reduction in time on selected operational tasks. Instana is IBM’s observability platform — a highly technical product with complex monitoring and alerting workflows. A 70% time reduction on specific operational tasks within that context is a meaningful signal about where Bob’s agentic automation produces its sharpest results: high-repetition, well-defined processes within technically complex systems.

The scale of deployment — 80,000+ employees using the platform globally — also provides real-world evidence of Bob’s ability to operate at enterprise scale without the reliability and performance degradation that often affects AI tools when moved from pilot to production. That operational track record at scale is itself a differentiator in a market where many enterprise AI tools have strong pilot results but struggle with production deployment consistency.

Pricing Model: Bobcoins, Pass-Through Pricing, and What to Actually Budget

IBM Bob’s pricing model is distinctive and worth understanding in detail, both for budget planning and for understanding what the consumption-based approach signals about the platform’s design philosophy.

The Bobcoin System Explained

Bobcoins are consumption credits priced at approximately $0.50 each. They function as the unit of measurement for AI compute consumed through the platform, with different task types consuming different amounts. Lightweight operations like code suggestion or simple refactoring consume fewer Bobcoins per interaction. Complex agentic and CLI workflows through BobShell — the kind that coordinate multiple agents across multiple SDLC stages — consume more, typically 5–10 Bobcoins per run for complex operations.

The current pricing tiers are structured as follows: a free 30-day trial includes 40 Bobcoins; the Pro tier is $20 per month with 40 Bobcoins included; the Pro+ tier is $60 per month with 160 Bobcoins plus a $9 support fee; and the Ultra tier is $200 per month with 500 Bobcoins plus a $30 support fee. Enterprise organizations can purchase 1,000-Bobcoin packs at $500, which works out to $0.50 per Bobcoin, in line with the approximate retail rate rather than a discount to it. Additional Bobcoins can be purchased at approximately $0.50 each across tiers.
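Dividing each listed price by its included Bobcoins gives an effective per-credit rate, which makes the tiers easier to compare. The Python sketch below uses only the prices quoted above. It leaves the support fees out, since the tier description does not say how they are billed, so the real effective rates for Pro+ and Ultra would be somewhat higher.

```python
# (price in USD, Bobcoins included), taken from the tiers listed above.
TIERS = {
    "Pro": (20.0, 40),
    "Pro+": (60.0, 160),
    "Ultra": (200.0, 500),
    "Enterprise pack": (500.0, 1000),
}


def usd_per_bobcoin(price_usd: float, bobcoins: int) -> float:
    """Effective rate, excluding support fees."""
    return price_usd / bobcoins


effective = {name: usd_per_bobcoin(price, coins) for name, (price, coins) in TIERS.items()}
for name, rate in effective.items():
    print(f"{name}: ${rate:.3f} per Bobcoin")
```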

What Pass-Through Pricing Means in Practice

The pass-through element of the pricing model means that the cost of underlying model inference — when Bob routes a task to Anthropic Claude or IBM Granite — is reflected in Bobcoin consumption rather than bundled into a flat fee. This creates a direct line between task complexity, model selection, and cost, which is the mechanism through which Bob enables actual cost regulation rather than just cost visibility.

For engineering leaders used to per-seat licensing for tools like GitHub Copilot Enterprise ($39/user/month) or Cursor Business ($40/user/month), the consumption-based model requires a different budgeting approach. A team of 20 developers on GitHub Copilot Enterprise costs a predictable $780 per month regardless of how intensively or casually each developer uses the tool. The equivalent Bob deployment will vary based on actual usage patterns: potentially lower for light users, potentially significantly higher for teams running complex multi-stage agentic workflows regularly.
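One way to frame the comparison is a break-even calculation: how many Bobcoins per developer per month equal the per-seat price? The Python sketch below is a simplification. It treats every Bobcoin as costing the approximate $0.50 rate and ignores tier subscriptions and support fees.

```python
SEAT_PRICE_USD = 39.0   # Copilot Enterprise per user per month, as quoted above
BOBCOIN_USD = 0.50      # approximate per-Bobcoin rate


def per_seat_cost(developers: int, seat_price: float = SEAT_PRICE_USD) -> float:
    return developers * seat_price


def consumption_cost(developers: int, coins_per_dev_month: float,
                     rate: float = BOBCOIN_USD) -> float:
    return developers * coins_per_dev_month * rate


def break_even_coins(seat_price: float = SEAT_PRICE_USD, rate: float = BOBCOIN_USD) -> float:
    """Bobcoins per developer per month at which both models cost the same."""
    return seat_price / rate


flat = per_seat_cost(20)
light_usage = consumption_cost(20, coins_per_dev_month=30)
heavy_usage = consumption_cost(20, coins_per_dev_month=200)
print(flat, light_usage, heavy_usage, break_even_coins())
```

Under these assumptions, a developer who consumes fewer than 78 Bobcoins a month costs less than a $39 seat, and one running several complex workflows a day costs considerably more.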

Budgeting Guidance for Organizations Evaluating Bob

For organizations planning a Bob deployment, the 30-day free trial (40 Bobcoins) is the right starting point — not to evaluate Bob’s features, but to establish an actual usage baseline from which to project ongoing costs. Running a controlled pilot with a defined set of workflows, measuring Bobcoin consumption per developer per week, and extrapolating to the full team provides a far more reliable cost forecast than any vendor estimate. The first pilot group should include a mix of task types: some legacy modernization work (where consumption will be higher due to complex agent orchestration) and some routine coding tasks (where consumption will be lower).
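The extrapolation step is simple multiplication, sketched here in Python. The pilot measurements are invented sample values, and the function assumes the pilot group is representative of the full team, which is exactly why the pilot should mix modernization and routine coding work.

```python
from statistics import mean

BOBCOIN_USD = 0.50  # approximate per-Bobcoin rate


def project_monthly_usd(pilot_coins_per_dev_week, team_size: int,
                        weeks_per_month: float = 52 / 12,
                        rate: float = BOBCOIN_USD) -> float:
    """Scale average weekly pilot consumption per developer to a team's monthly cost."""
    if not pilot_coins_per_dev_week:
        raise ValueError("need at least one pilot measurement")
    average = mean(pilot_coins_per_dev_week)
    return average * weeks_per_month * team_size * rate


# Invented pilot data: weekly Bobcoins for four developers with different workloads.
pilot = [6, 10, 14, 30]
projected = project_monthly_usd(pilot, team_size=20, weeks_per_month=4)
print(projected)
```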

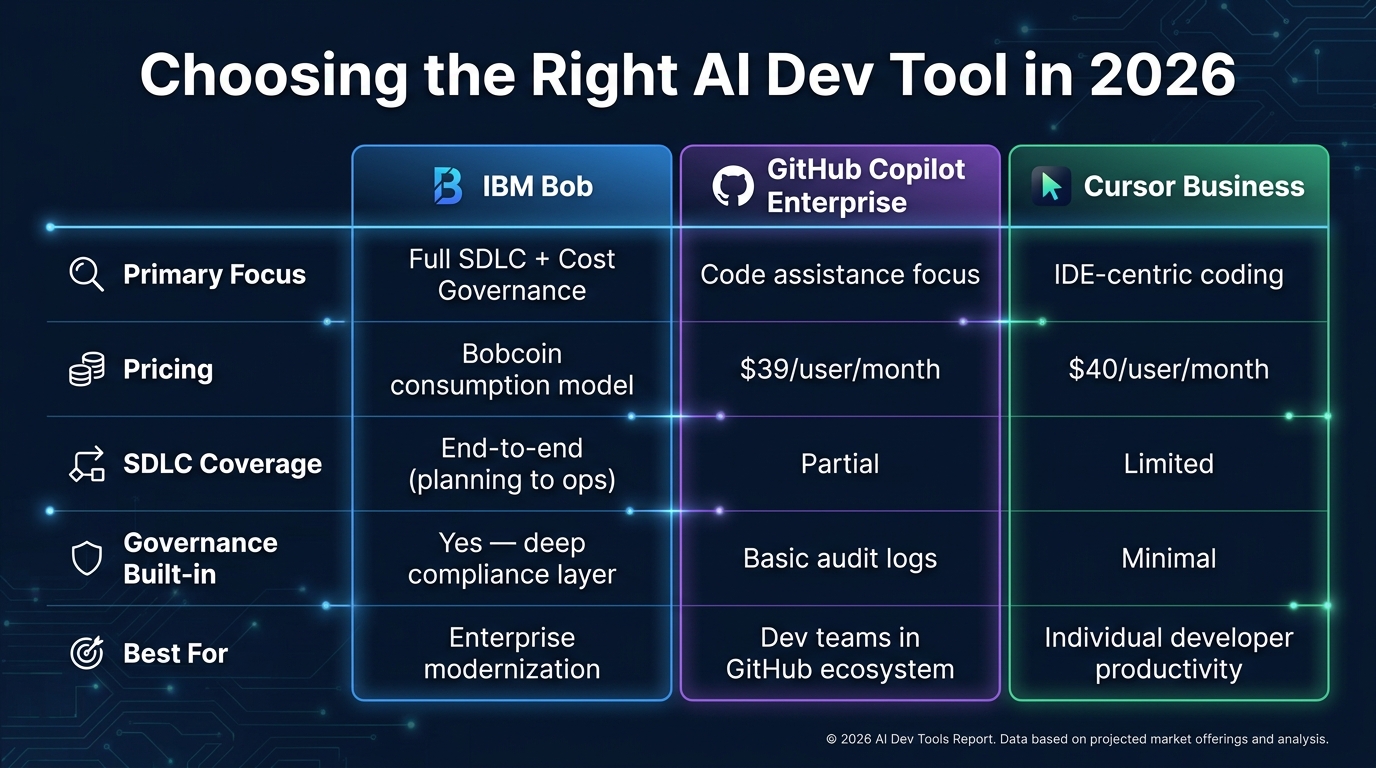

IBM Bob vs. GitHub Copilot and Cursor: Where Each Actually Belongs

The most practically useful comparison for engineering leaders evaluating Bob isn’t about which tool is “better” — it’s about which tool is designed to solve which problem. These three platforms occupy genuinely different positions in the market, and the use cases where each excels don’t overlap as much as vendor positioning might suggest.

GitHub Copilot Enterprise: The Coding Layer Standard

GitHub Copilot Enterprise ($39/user/month) is the most widely deployed AI coding assistant in enterprise environments as of 2026. Its strengths are clear: tight GitHub integration, IP indemnity coverage, fine-tuned models trained on organizational codebases, SAML SSO, audit logs, and strong code completion quality across a broad range of languages. Its scope is intentionally narrow: it focuses on the coding stage of development and does it well. It doesn’t attempt to orchestrate planning, automate test generation, or manage deployment pipelines.

For organizations where the primary bottleneck is individual developer coding velocity and the existing tooling infrastructure handles other SDLC stages adequately, Copilot Enterprise remains a well-proven option with predictable costs and broad developer familiarity.

Cursor Business: The IDE-Centric Development Experience

Cursor ($40/user/month for Business) is an IDE-first product that has built a strong following among developers who want a deep, context-aware coding experience within a specialized editor environment. Cursor’s strength is the quality and coherence of its in-editor AI assistance, particularly for complex multi-file changes within a single project context. Like Copilot, it doesn’t attempt to extend into pre-coding planning or post-coding testing and deployment stages.

Cursor is often the tool of choice for individual developers and smaller engineering teams where personal productivity is the primary metric and cross-team governance requirements are minimal. The per-seat pricing is competitive with Copilot, though enterprise governance features are less mature.

IBM Bob: The Governance-First SDLC Platform

Bob’s design center is fundamentally different from both of the above. It is not primarily trying to accelerate individual developer coding velocity — though it does that as part of its scope. It is trying to regulate cost and enforce governance across the full development lifecycle, including the stages (discovery, planning, testing, deployment, operations) that Copilot and Cursor don’t address at all.

The organizations where Bob has the clearest value proposition are those with significant legacy modernization workloads, regulatory compliance requirements that demand audit trails for AI-assisted development, hybrid cloud environments where deployment governance is complex, and engineering budgets that are visibly dominated by maintenance rather than new development. For those organizations, Bob addresses a category of cost that Copilot and Cursor are architecturally unable to touch.

The organizations where Copilot or Cursor might remain the better choice are those with primarily greenfield development work, small teams with minimal governance overhead, or organizations where the SDLC toolchain is already well-integrated and the specific bottleneck is individual coding velocity. In those contexts, Bob’s additional complexity and consumption-based cost model may not produce proportional returns.

What IBM Bob Can’t Do — And What You Still Own

No honest evaluation of a platform like Bob is complete without an equally clear-eyed look at its limitations. The launch materials, predictably, don’t lead with these — but for engineering leaders making deployment decisions, they’re essential context.

Bob Is Not a Substitute for Engineering Leadership

Bob’s agentic workflows automate well-defined processes within a governed framework. They do not substitute for engineering judgment on questions that are genuinely ambiguous: architectural decisions with long-term implications, tradeoffs between performance and maintainability, risk assessments for novel deployment patterns, or the strategic sequencing of technical debt remediation against feature delivery commitments. These remain human responsibilities, and Bob’s governance design (with its human-in-the-loop checkpoints) explicitly preserves that responsibility rather than obscuring it.

Quality Depends on Skill Definitions

The reusable skills system is only as good as the skills that have been defined. During early deployment, before a library of high-quality organizational skills has been built and validated, Bob’s output quality will be more variable than it will be once that library matures. This means initial deployment requires investment in skill definition — not just tool configuration — and teams that underinvest in this phase will likely see disappointing results relative to organizations that take it seriously.

On-Premises Deployment Is Planned, Not Current

As of the April 2026 general availability launch, Bob is delivered as SaaS. On-premises deployment is planned but not yet available. For organizations in sectors with strict data residency requirements that preclude SaaS-based AI tools — certain government agencies, defense contractors, and highly regulated financial institutions — this is a current limitation that may delay or prevent adoption until the on-premises option reaches availability.

Consumption-Based Costs Can Surprise Unprepared Teams

The same pass-through pricing model that enables cost regulation can produce budget surprises for teams that deploy Bob without establishing consumption baselines first. Complex agentic workflows run at high frequency by a large developer team can accumulate Bobcoin consumption faster than flat-rate pricing comparisons would suggest. Organizations that begin deployment without the 30-day pilot baseline-setting process described earlier risk budget overruns that undermine the cost regulation argument for the platform.
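One way to catch that kind of surprise early is a simple burn-rate check against the monthly budget. The sketch below is illustrative only: it assumes you can obtain cumulative Bobcoin spend for the month from whatever usage reporting is available to you, and both the function names and the straight-line projection are assumptions for illustration, not part of Bob.

```python
def projected_month_end_spend(spent_so_far, day_of_month, days_in_month=30):
    """Extrapolate month-end spend assuming usage continues at the current daily pace."""
    if not 1 <= day_of_month <= days_in_month:
        raise ValueError("day_of_month must be between 1 and days_in_month")
    return spent_so_far / day_of_month * days_in_month


def on_track_to_overrun(spent_so_far, day_of_month, monthly_budget, days_in_month=30):
    """True when the straight-line projection exceeds the monthly budget."""
    projected = projected_month_end_spend(spent_so_far, day_of_month, days_in_month)
    return projected > monthly_budget
```

A straight-line projection is deliberately crude. It ignores weekends and release-cycle spikes, but it is enough to flag a team whose consumption is running well ahead of its baseline before the month closes.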

How to Evaluate Whether IBM Bob Makes Sense for Your Organization

Given the complexity of the platform and the specificity of the contexts where it produces its best results, the evaluation process for IBM Bob should be more structured than the typical AI tool pilot. Here is a practical framework for engineering leaders considering deployment.

Step 1: Audit Your Current Budget Distribution

Before engaging with IBM’s sales process, audit how your engineering budget splits between maintenance/legacy work and new development. If maintenance consumes 60% or more of the budget, in line with the 60–80% figure IBM cites as the target problem, the ROI case for Bob is potentially strong. If maintenance falls between roughly 40% and 60%, the case is more nuanced and depends heavily on which specific legacy workloads Bob’s modernization agents handle well. If your work is primarily greenfield, the case is weakest and Copilot or Cursor may serve you better at lower cost and complexity.
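The audit itself can be as simple as tagging each budget line and computing the maintenance share. The following is a minimal sketch under stated assumptions: the `BudgetLine` structure and function names are hypothetical, the 60% and 40% cut-offs are taken from the bands described above, and treating anything below 40% as "primarily greenfield" is an assumption rather than an IBM definition.

```python
from dataclasses import dataclass


@dataclass
class BudgetLine:
    name: str
    annual_cost: float
    is_maintenance: bool  # True for legacy/maintenance work, False for new development


def maintenance_share(lines):
    """Fraction of total engineering spend that goes to maintenance/legacy work."""
    total = sum(line.annual_cost for line in lines)
    if total <= 0:
        raise ValueError("total budget must be positive")
    maintenance = sum(line.annual_cost for line in lines if line.is_maintenance)
    return maintenance / total


def roi_case(share):
    """Map a maintenance share onto the three bands used in this step (assumed cut-offs)."""
    if share >= 0.60:
        return "potentially strong"
    if share >= 0.40:
        return "nuanced"
    return "weakest"
```

For example, an organization spending 700 on mainframe upkeep and 300 on a new product line has a maintenance share of 0.7 and lands in the "potentially strong" band.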

Step 2: Map Your Governance Requirements

Inventory the compliance and governance requirements that apply to your development environment. If you operate under frameworks that require audit trails for code generation, data handling controls for AI-assisted processes, or configurable human oversight for production deployments, those requirements strengthen the case for Bob’s governance architecture over the lighter-touch compliance features of Copilot or Cursor. If your governance requirements are minimal, the governance premium built into Bob may not justify the additional cost and operational complexity.

Step 3: Run the 30-Day Consumption Baseline Pilot

Use the free trial period deliberately. Select 5–10 developers who represent different workflow types in your organization, assign them specific tasks that mirror your real workload distribution, and measure Bobcoin consumption per workflow type and per developer per week. Use that data to project costs at full team scale before committing to a paid tier. This baseline is also the foundation for your ROI calculation: compare Bobcoin cost per workflow against the current engineering hours required for the equivalent work without Bob.
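The projection and the ROI comparison described in this step reduce to a few lines of arithmetic. The sketch below assumes you have logged pilot usage as (workflow type, Bobcoins consumed) pairs; the record format, function names, the `coin_price` parameter, and the 4.33 weeks-per-month factor are all assumptions for illustration, and no actual Bobcoin price is implied.

```python
from collections import defaultdict


def coins_per_dev_week(records, pilot_devs, pilot_weeks):
    """Average Bobcoins per developer per week, broken down by workflow type.

    records: iterable of (workflow_type, bobcoins) pairs logged during the pilot.
    """
    totals = defaultdict(float)
    for workflow, coins in records:
        totals[workflow] += coins
    dev_weeks = pilot_devs * pilot_weeks
    return {workflow: total / dev_weeks for workflow, total in totals.items()}


def project_monthly_coins(per_dev_week, team_size, weeks_per_month=4.33):
    """Scale the pilot averages to the full team for one month."""
    return sum(per_dev_week.values()) * team_size * weeks_per_month


def saving_per_run(coins_per_run, coin_price, hours_without_bob, hourly_cost):
    """Cost of the engineering hours one run replaces, minus what the run costs in coins."""
    return hours_without_bob * hourly_cost - coins_per_run * coin_price
```

A negative result from `saving_per_run` for a given workflow type is a signal to leave that workflow out of the rollout, or to revisit how it is configured, before scaling up.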

Step 4: Invest in Skill Library Development Before Broad Rollout

Assign your most senior engineers to build and validate the initial reusable skills library for your most common workflows before rolling Bob out broadly. This investment in the skills layer is what determines whether the broad rollout produces consistent, high-quality outputs or variable results that erode developer confidence in the platform. The skills library is the compounding asset that makes Bob increasingly valuable over time — but only if it’s built deliberately and maintained as workflows evolve.

Step 5: Define Human-in-the-Loop Thresholds Before Deployment

Work with your security, compliance, and engineering leadership to define which task types and risk thresholds require a human approval checkpoint instead of autonomous execution by Bob. This configuration work should happen before developers begin using the platform in production. Retrofitting oversight requirements after deployment is technically possible, but it is operationally disruptive and creates compliance exposure during the gap period.
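It can help to write the agreed thresholds down as an explicit, testable policy before anyone touches the platform's configuration. The sketch below is not Bob's configuration format or API. It is a hypothetical, tool-agnostic way to capture the decision, and the task type names and risk levels are invented for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class ApprovalPolicy:
    always_review: frozenset  # task types that always need human sign-off
    risk_threshold: Risk      # any task at or above this risk needs sign-off

    def requires_human(self, task_type, risk):
        """True when a task must stop at a human approval checkpoint."""
        return task_type in self.always_review or risk >= self.risk_threshold


# Hypothetical policy: deploys and schema changes always reviewed, plus anything HIGH risk.
policy = ApprovalPolicy(
    always_review=frozenset({"production_deploy", "schema_migration"}),
    risk_threshold=Risk.HIGH,
)
```

Keeping the policy in a reviewable artifact like this gives security and compliance teams something concrete to sign off on, and the same rules can then be translated into whatever oversight settings the platform exposes.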

The Bigger Question: Is This the Direction Enterprise Development Is Heading?

IBM Bob’s architecture reflects a specific thesis about where enterprise software development is going: toward governed, multi-agent orchestration across the full lifecycle, with cost regulation and auditability as built-in platform properties rather than add-ons. Whether or not Bob specifically becomes the dominant platform in this space, the thesis itself is almost certainly correct.

The economic pressure driving that direction is real and well-documented. Engineering budgets dominated by legacy maintenance are unsustainable at a time when competitive differentiation depends on new capability delivery. The regulatory and governance requirements applying to AI-assisted development are intensifying, not easing. And the fragmented, tool-per-stage approach to the SDLC has well-known coordination costs that compound as organizations scale.

Bob is IBM’s answer to those pressures, built by an organization that has both the enterprise credibility to navigate complex procurement and compliance environments and the technical depth (Granite models, watsonx infrastructure, IBM Consulting’s modernization practice) to deliver substantive capability at the stages of the lifecycle where other vendors don’t operate. The April 28, 2026 launch and the internal deployment to more than 80,000 IBM employees make it one of the most comprehensively deployed AI SDLC platforms currently available: not a concept, not a beta, but a production system with a documented track record.

Whether it’s the right platform for your organization depends on where your engineering costs actually live, what your governance requirements demand, and how seriously you’re willing to invest in the skills and configuration work that determines whether agentic platforms produce consistent value or expensive noise. The answers to those questions — not the platform’s launch headlines — are where the evaluation should start.

Key Takeaways for Engineering and Technology Leaders

- IBM Bob targets the 60–80% of enterprise engineering budgets consumed by legacy maintenance and modernization — the category of cost that point-solution coding assistants are architecturally unable to address.

- Multi-model orchestration is the core cost regulation mechanism, dynamically routing tasks to models based on accuracy, latency, and cost rather than sending everything to expensive frontier models by default.

- Pass-through pricing via Bobcoins creates genuine cost visibility — a different model from per-seat flat-rate tools that obscure the relationship between usage and spend.

- Blue Pearl and APIS IT results are real but specific — the clearest returns are in legacy modernization scenarios, not general-purpose development acceleration.

- The skills library is the compounding investment — the platform’s long-term value is determined by the quality of the reusable skills defined during early deployment, not the tool itself.

- Bob, Copilot, and Cursor occupy different positions in the market. They are not direct substitutes. Choose based on where your engineering cost and governance challenges actually live, not on feature comparison matrices.

- Run a structured 30-day consumption baseline pilot before committing to production deployment. The consumption-based pricing model makes this baseline essential for accurate cost projection.

- On-premises deployment is planned but not yet available — organizations with strict data residency requirements should factor this into timing decisions.