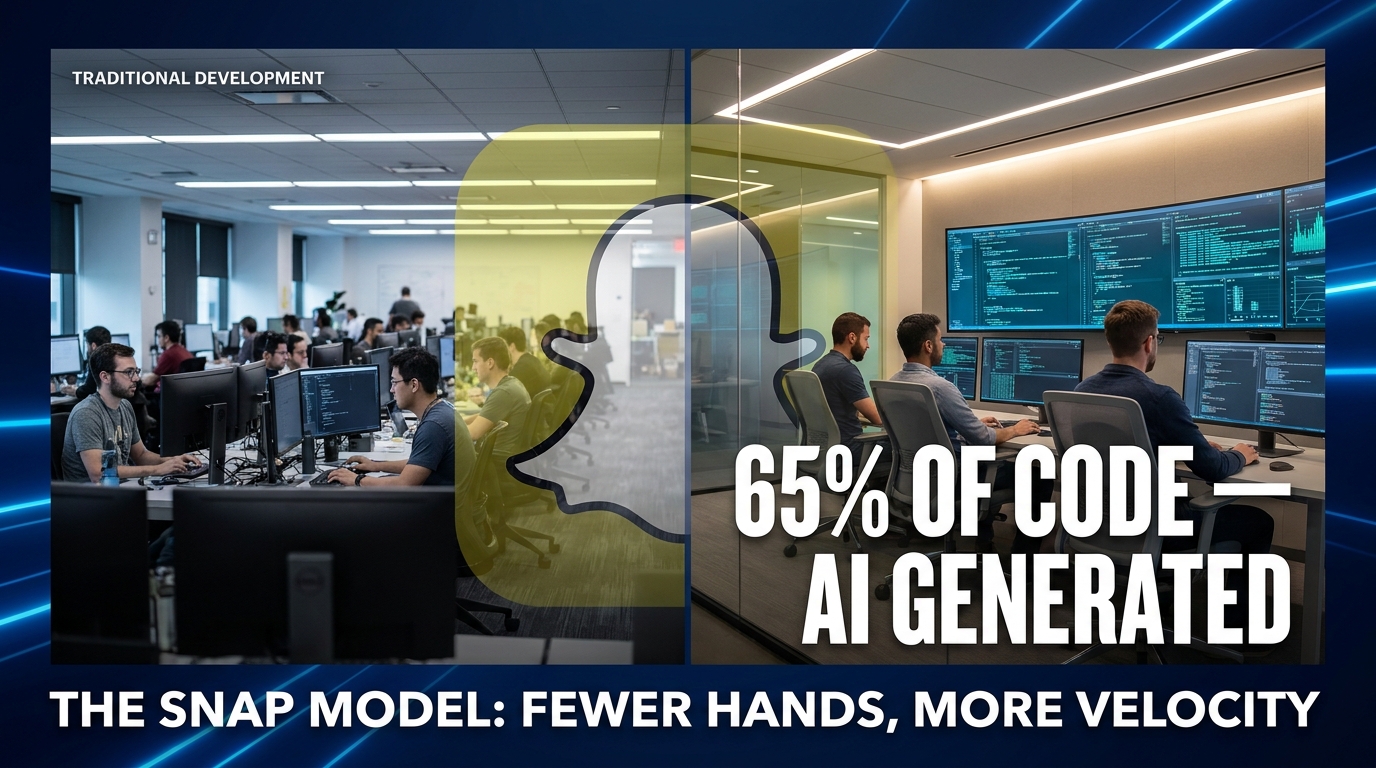

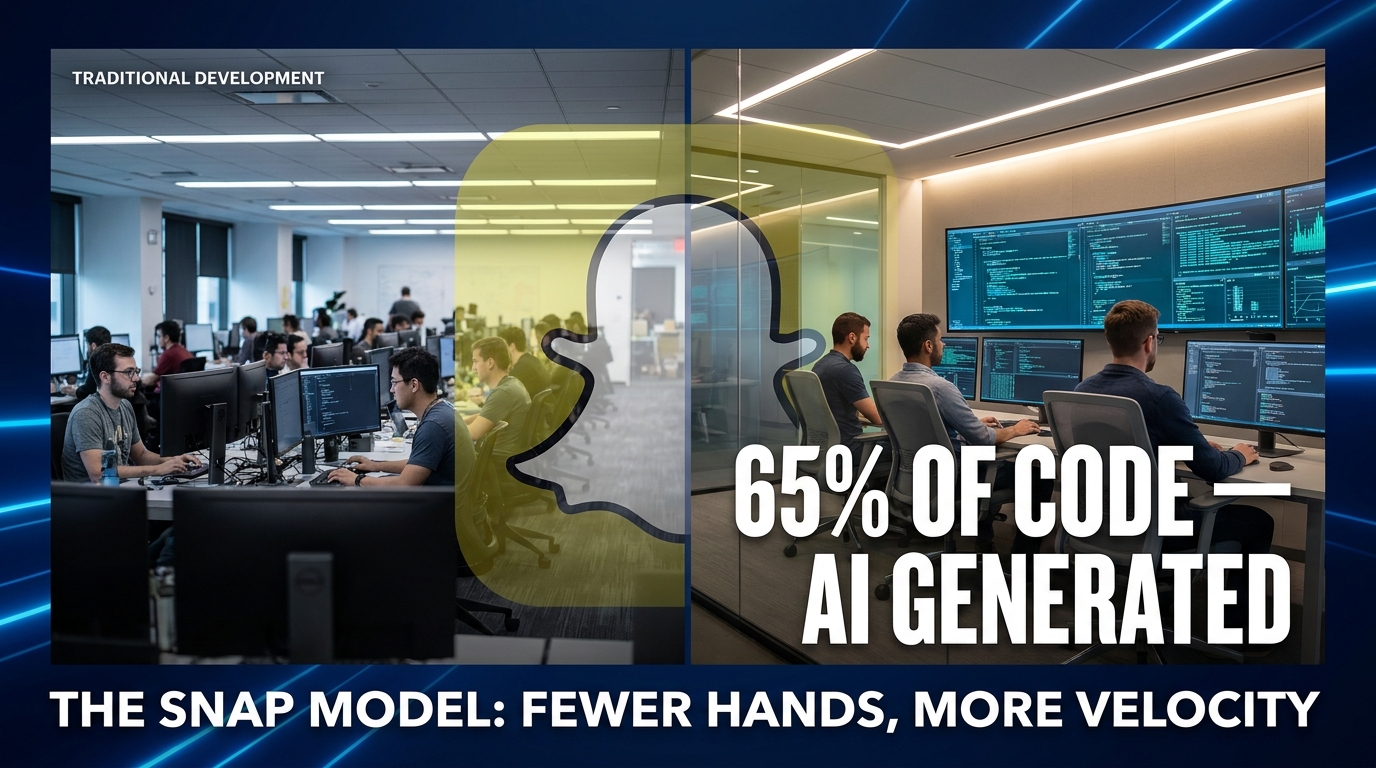

On the morning of April 15, 2026, Evan Spiegel sent a memo to Snap’s global workforce that would ripple through every engineering leader’s inbox within hours. One thousand jobs — 16% of the company’s entire headcount — were being eliminated. Three hundred additional open roles were closed before the first applicant ever interviewed. The reason Spiegel cited wasn’t a revenue miss, a strategic pivot, or a board mandate to cut burn. It was something far more consequential: artificial intelligence now generates 65% of all new code written at Snap.

He called it a “crucible moment.” The market called it an 8% stock pop. The engineering world called it a warning shot.

But here’s what got lost in the noise of the layoff headlines: the actual mechanics of how Snap got to 65% AI-generated code, why that number matters far more than the layoff count, and — critically — what it would take for a mid-sized engineering team to replicate that kind of output without the collateral damage of mass restructuring.

This isn’t a story about job cuts. It’s a story about a fundamental rewiring of how software gets built. If you run, manage, or work inside an engineering organization in 2026, Snap’s April announcement is the most important competitive benchmark you haven’t fully stress-tested yet. Here’s what it actually means — and what you should do about it.

The Numbers Behind the Headlines: Snap’s 65% Stat Unpacked

Sixty-five percent sounds dramatic. But context matters enormously here, and the industry data around it tells a story that most breathless news articles ignored entirely.

Where Snap Fits in the Broader Industry Picture

According to 2026 market research, 41% of all enterprise code is now AI-generated across the industry, up from roughly 20% in early 2024. The AI coding tools market has grown to $12.8 billion in 2026, roughly two and a half times its $5.1 billion size in 2024. Eighty-two percent of developers now use AI tools weekly, and among elite-tier engineering teams, AI-assisted code share sits between 60% and 75%. Snap, at 65%, isn’t an outlier. It’s a bellwether: a large-scale proof that what top-performing teams achieve individually can be institutionalized company-wide.

What makes Snap’s 65% figure different from a developer who just leans heavily on autocomplete is scope. The AI generation isn’t limited to boilerplate or unit tests. According to details from Spiegel’s memo and subsequent reporting, AI-generated code is running across Snapchat+ subscription features, the advertising platform’s infrastructure, Snap Lite builds, and core backend engineering tasks. This is production-grade, revenue-critical code — not a side experiment.

The Financial Architecture of the Decision

The math Snap is working with is brutal and clear. Prior to the April restructuring, Snap employed approximately 5,261 full-time staff globally. With 1,000 jobs cut and 300+ open roles closed, the company targets over $500 million in annualized cost savings by the second half of 2026. At the same time, Snap absorbed $95–130 million in pre-tax charges in Q2 2026, primarily from severance. That’s the short-term cost of a long-term structural shift toward net-income profitability.

For engineering leaders watching from the outside, the question isn’t whether Snap’s trade-off was the right one ethically. The question is whether the productivity math actually works — and the evidence suggests that for Snap’s specific operating context, it does. The company has not reported a corresponding slowdown in product velocity. Snapchat+ sits at 24 million subscribers and climbing. Ad platform performance metrics are improving. The lights are on, and the team is smaller.

What “AI-Generated” Actually Means

One nuance worth drawing sharply: “AI-generated” does not mean “AI-autonomous.” At Snap’s scale and in 2026’s tooling landscape, AI-generated code still requires human engineers to prompt, review, test, and approve it. The workflow isn’t engineers watching a robot build a product. It’s engineers functioning as directors and architects — writing specifications, evaluating outputs, catching edge cases, and steering system design — while AI agents handle the volume work of implementation. The 65% number represents the authorship share of code, not the supervision share. That distinction matters enormously when you start thinking about how to replicate the model.

Small Squads, Big Output: How Snap’s Organizational Strategy Actually Works

Inside the memo and the subsequent investor context that emerged in the weeks following the announcement, the operational concept Snap keeps returning to is “small squads.” This is more than a headcount euphemism. It’s a specific thesis about how teams at software companies should be organized when AI tools are operating at their current capability level.

The Small Squad Model: What It Looks Like in Practice

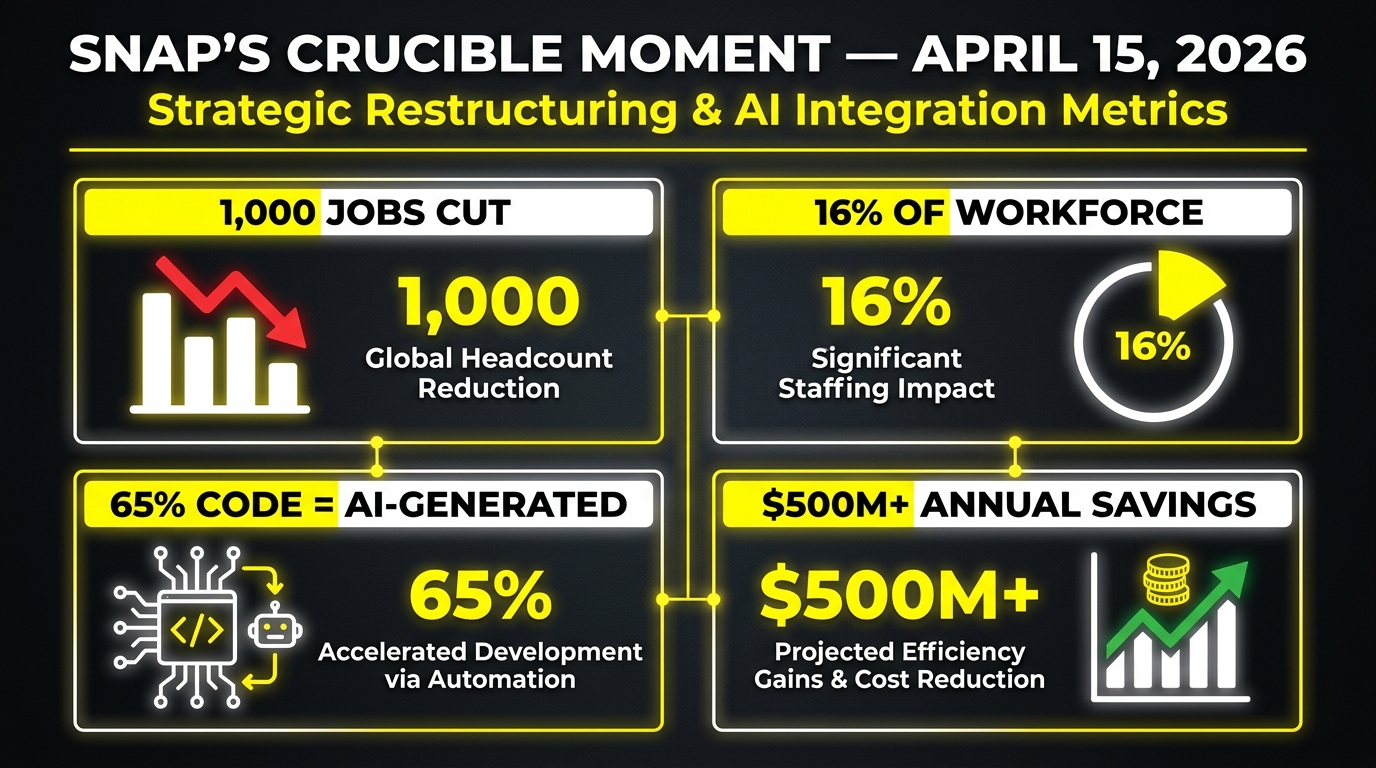

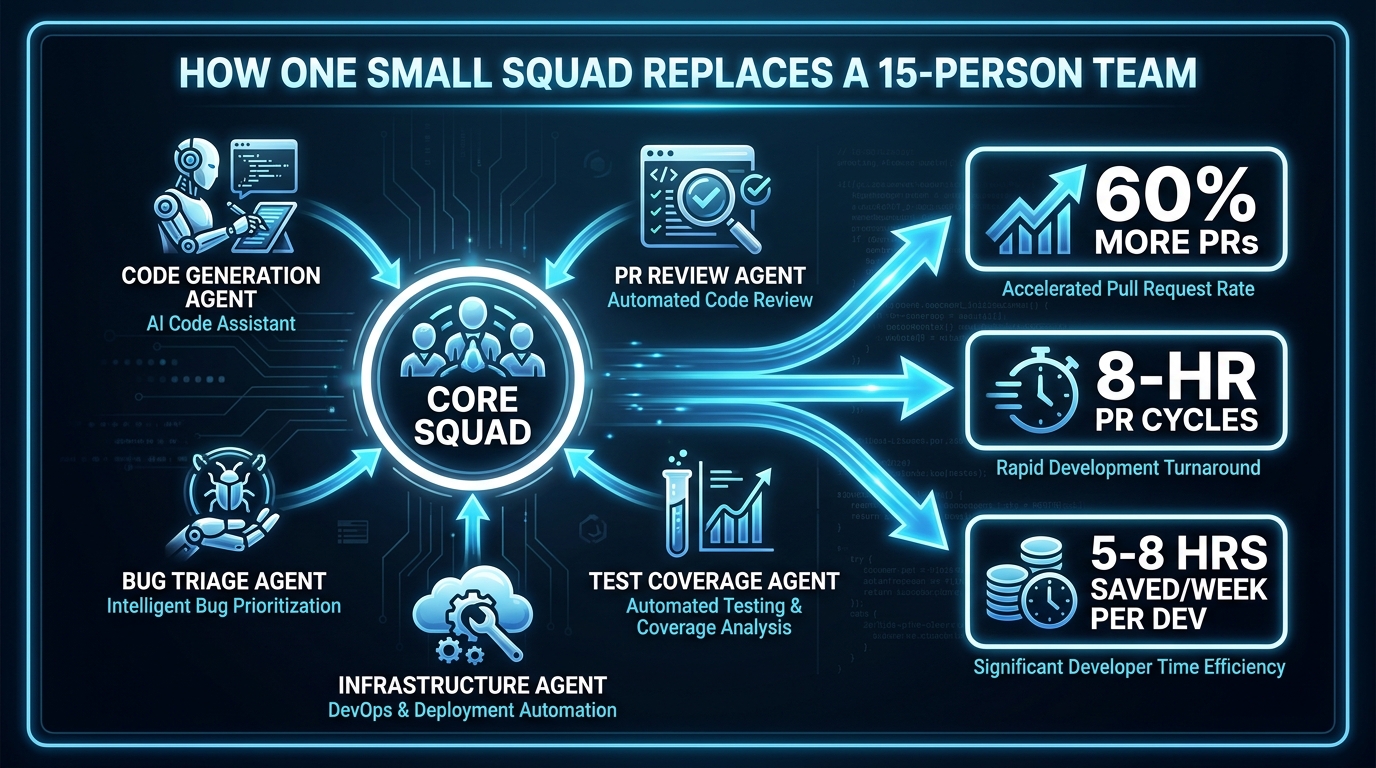

A traditional Snap product squad might have included four to six engineers, a product manager, a designer, and potentially a data analyst — perhaps eight to ten people total driving a feature area. Under the small squad model, that same feature area might be staffed with two to three senior engineers and a product lead, with AI agents operating as persistent collaborators on code generation, PR review, bug triage, and test coverage.

Industry benchmarks support the viability of this structure. Elite-tier teams using AI coding tools in 2026 are achieving 60% more pull requests per engineer, with PR cycle times under eight hours compared to multi-day turnarounds in non-AI workflows. Individual developers are reclaiming five to eight hours per week that were previously consumed by repetitive implementation work. When you stack those gains across a small, highly senior team, the throughput math competes credibly with a much larger junior-heavy squad.

The Role of Spec-Driven Engineering

One of the less-reported keys to making small squads actually work at scale is what engineers and consultants are calling spec-driven engineering. AI coding agents perform markedly better when they receive precise, well-structured specifications rather than loose prompts. This means that in a true small-squad model, engineers spend significantly more time upfront writing rigorous technical specs (defining inputs, outputs, edge cases, architecture constraints, and acceptance criteria) before AI agents begin generating code.
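One way to make a "rigorous spec" concrete is to treat the specification as structured data and check it for gaps before an agent sees it. The sketch below is illustrative only: the `TechSpec` class, its field names, and the `missing_sections` helper are hypothetical, and nothing in Snap's memo or the reporting describes its specs in this form.

```python
from dataclasses import dataclass, field, fields


@dataclass
class TechSpec:
    """Hypothetical spec record; the list fields mirror the elements named above."""
    title: str
    inputs: list[str] = field(default_factory=list)
    outputs: list[str] = field(default_factory=list)
    edge_cases: list[str] = field(default_factory=list)
    architecture_constraints: list[str] = field(default_factory=list)
    acceptance_criteria: list[str] = field(default_factory=list)


def missing_sections(spec: TechSpec) -> list[str]:
    """Return the names of list-valued sections that are still empty."""
    return [
        f.name
        for f in fields(spec)
        if isinstance(getattr(spec, f.name), list) and not getattr(spec, f.name)
    ]


# Made-up example spec with two sections left blank.
spec = TechSpec(
    title="Rate-limit the export endpoint",
    inputs=["user_id", "export_format"],
    outputs=["HTTP 200 with file", "HTTP 429 when over quota"],
    acceptance_criteria=["No more than 5 exports per user per hour"],
)
gaps = missing_sections(spec)
print(gaps)  # ['edge_cases', 'architecture_constraints']
```

A check like this only catches empty sections, not vague ones. Judging whether the edge cases listed are the right ones still takes an engineer.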

This shift fundamentally changes who is valuable on an engineering team. The developer who was previously valued for writing 500 lines of feature code per day becomes less central. The developer who can architect a system clearly enough to write a specification that AI can execute reliably becomes irreplaceable. Snap’s decision to primarily target product managers and partnership roles in the April layoffs — rather than senior engineers — is consistent with this dynamic.

AI Agents Across the Full SDLC

Snap’s efficiency gains aren’t limited to code generation at the implementation layer. Across the software development lifecycle (SDLC), AI tools are compressing timelines at multiple stages. Teams using integrated AI workflows in 2026 report 47% faster pull request reviews and 62% faster bug triage. Test generation — historically one of the most time-consuming and lowest-prestige tasks in software engineering — has been largely handed to AI agents. Infrastructure configuration, documentation drafting, and even code refactoring are all areas where AI authorship has meaningfully replaced human hours. The small squad isn’t smaller because it’s doing less. It’s smaller because AI has absorbed the volume work, leaving the humans to do the high-judgment work.

The Tool Stack Driving It All: Cursor, Claude Code, GitHub Copilot, and Windsurf

Snap hasn’t publicly named every tool in its AI coding stack, but reporting and industry context make the likely composition reasonably clear. Understanding which tools drive the 65% figure — and how they differ — is critical for any team trying to replicate the model rather than just benchmark against it.

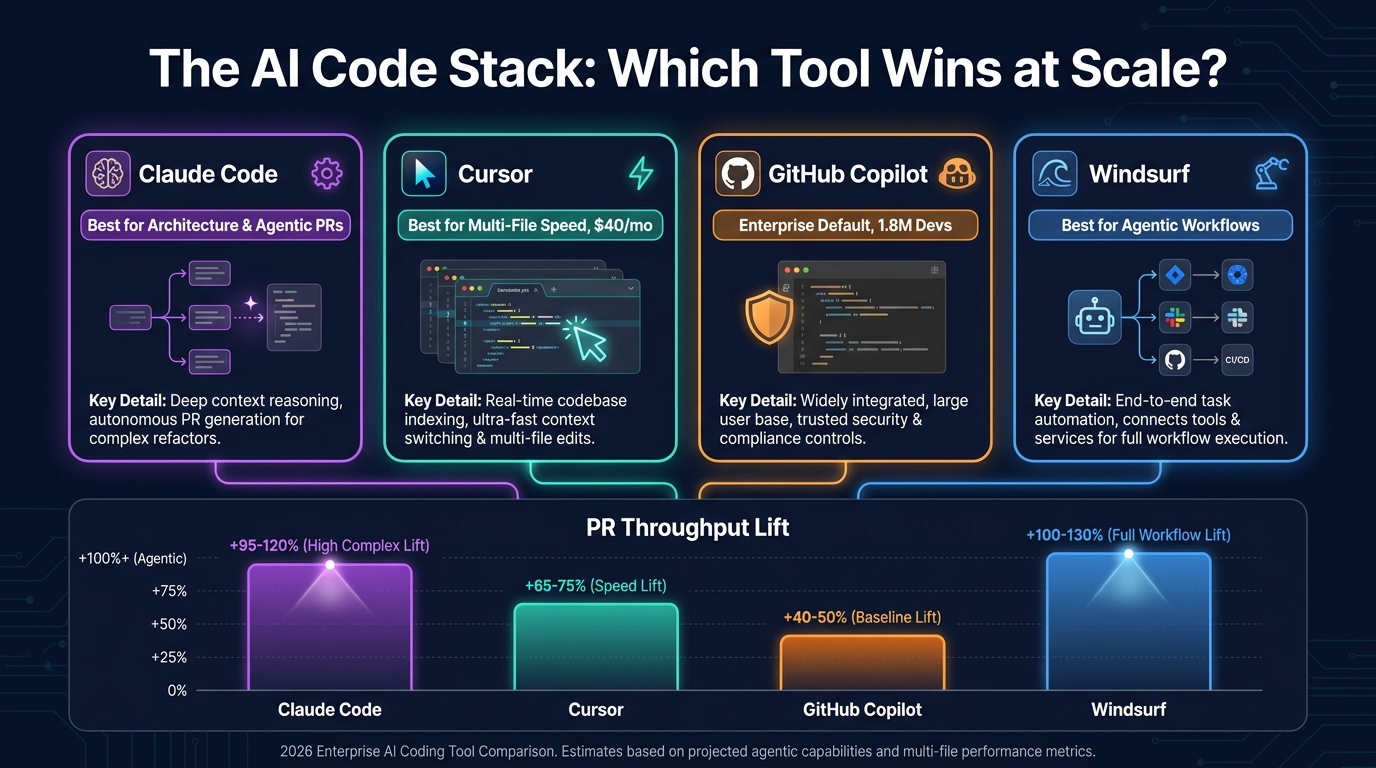

Claude Code: The Architecture Leader

As of early 2026, Claude Code (Anthropic’s agentic coding tool) has emerged as the market leader for complex, architectural-level coding tasks. Ninety-five percent of its users report using it weekly for at least half of their work. Its strength is agentic pull requests: situations where the AI doesn’t just autocomplete a line but autonomously generates, tests, and submits a full PR based on a specification. For companies like Snap, where the engineering team is doing complex, multi-system work on advertising infrastructure and consumer apps simultaneously, Claude Code’s ability to handle architectural changes without constant human hand-holding makes it well suited to the small-squad model.

Cursor: The Throughput Engine

Cursor reached $1 billion in annual recurring revenue in 2025 — a figure that would have seemed impossible for a developer tool a few years prior — and its growth trajectory has continued into 2026. Its edge is raw throughput on multi-file editing. Where some AI tools struggle with context across a large codebase, Cursor maintains coherence across multiple files simultaneously, making it particularly effective for refactoring sessions, cross-module feature work, and high-velocity iteration cycles. Enterprise teams report 60% more PRs per engineer per week when Cursor is the primary tool. At $40 per user per month for the Business tier, it’s also one of the better-value options at team scale — the ROI math tends to close quickly against the cost of a single additional engineering hire.

GitHub Copilot: The Enterprise Default

With 1.8 million developers and more than 50,000 organizations using it in 2026, GitHub Copilot remains the default AI coding tool for enterprises that need SOC 2 compliance, deep GitHub integration, and organization-wide governance from day one. Ninety percent of the Fortune 100 uses it. It’s not the highest-ceiling option in the stack — its autocomplete-focused design means it generates less autonomous output than Claude Code or Cursor — but for teams that need to start somewhere with low friction and auditable usage, Copilot is the practical foundation. Many high-performing teams run Copilot organization-wide as a baseline and use Cursor or Claude Code for more complex work.

Windsurf: The Agentic Workflow Specialist

Windsurf (the agentic editor from the company formerly known as Codeium) has carved out a distinct position in 2026 as the tool best suited for agentic workflows, where you want an AI agent to complete an extended, multi-step engineering task with minimal interruption. This is particularly relevant for the kind of infrastructure work Snap is doing: setting up data pipeline configurations, managing deployment scripts, and handling the operational engineering tasks that are important but don’t require a senior engineer’s creative judgment. Teams using Windsurf in agentic mode report some of the most significant time savings on the infrastructure side of the SDLC.

The Multi-Tool Reality

The practical reality for most engineering teams is that no single tool wins across every use case. Best practice in 2026 involves selecting one to two primary coding agents paired with an analytics platform to track ROI, then layering specialist tools for specific workflow stages. The anti-pattern to avoid is tool proliferation — every engineer running a different AI tool with no standardization, no shared prompt libraries, and no common measurement framework. That approach produces anecdote rather than compound organizational learning.

Infrastructure Beyond Code: Snap’s GPU and Data Processing Transformation

The AI-generated code story at Snap doesn’t exist in isolation. It’s part of a broader engineering infrastructure transformation that has been running in parallel — and understanding both threads explains why Snap’s efficiency gains are structural rather than cosmetic.

The NVIDIA cuDF Deployment

Alongside its AI coding adoption, Snap deployed NVIDIA cuDF on Apache Spark via Google Cloud, using GPU acceleration to fundamentally change how its data infrastructure operates. The results are striking: 4x faster runtime for petabyte-scale data processing and 76% reduction in daily processing costs. The GPU requirement for A/B testing dropped from 5,500 concurrent units to 2,100 — a 62% reduction in compute footprint for the same analytical output.

For context, Snap runs over 6,000 metrics per A/B test. The ability to process petabyte-scale datasets in hours rather than days isn’t just an infrastructure win; it directly enables the small-squad model. A team of four engineers running hundreds of product experiments needs to get results fast. When data processing takes days, you need more analysts to manage the pipeline. When it takes hours, you don’t.

Why Infrastructure Efficiency Enables Headcount Efficiency

This is the part of Snap’s story that tends to get separated from the AI coding narrative but belongs with it. The $500 million in annualized savings Snap is targeting comes from a combination of headcount reduction and infrastructure cost reduction running simultaneously. Engineering teams that are trying to replicate Snap’s model by only adopting AI coding tools — without also rethinking their data infrastructure, compute costs, and operational overhead — will capture only a fraction of the available efficiency.

The real lesson from Snap isn’t “replace engineers with AI.” It’s “build an engineering organization where every layer — human, code, infrastructure, and data — is running at its most efficient configuration simultaneously.” The AI coding adoption is the most visible layer, but it’s one of four or five levers being pulled in concert.

What the “AI Washing” Critics Get Right (and Wrong)

The April announcement triggered an immediate and pointed debate in the tech industry. Critics — many of them engineers who had just watched colleagues receive termination notices — argued that Snap’s AI-generated code framing was “AI washing”: using AI’s momentum as a palatable narrative for what is ultimately a financial restructuring dressed up in technology language.

The Strongest Version of the Criticism

The critique has real merit in several areas. First, trackers noted that a significant portion of Snap’s April cuts targeted product managers and partnership roles — not software engineers. If 65% of code is AI-generated and the layoffs are primarily in non-engineering functions, the causal chain between “AI codes more” and “these specific people lose their jobs” is less direct than Spiegel’s memo implied.

Second, the AI-washing concern is broader than Snap. Analysis of tech layoffs through mid-April 2026 found approximately 99,283 job cuts across the sector, with 47.9% attributed to AI based on public company statements — but those attributions were based on what executives said, not on verified productivity data. Block (formerly Square), under Jack Dorsey, attracted significant criticism in February 2026 when it cited “intelligence tools” to justify 4,000 layoffs, despite the company having over-hired significantly during the COVID boom and experiencing a 40% stock drop unrelated to AI productivity.

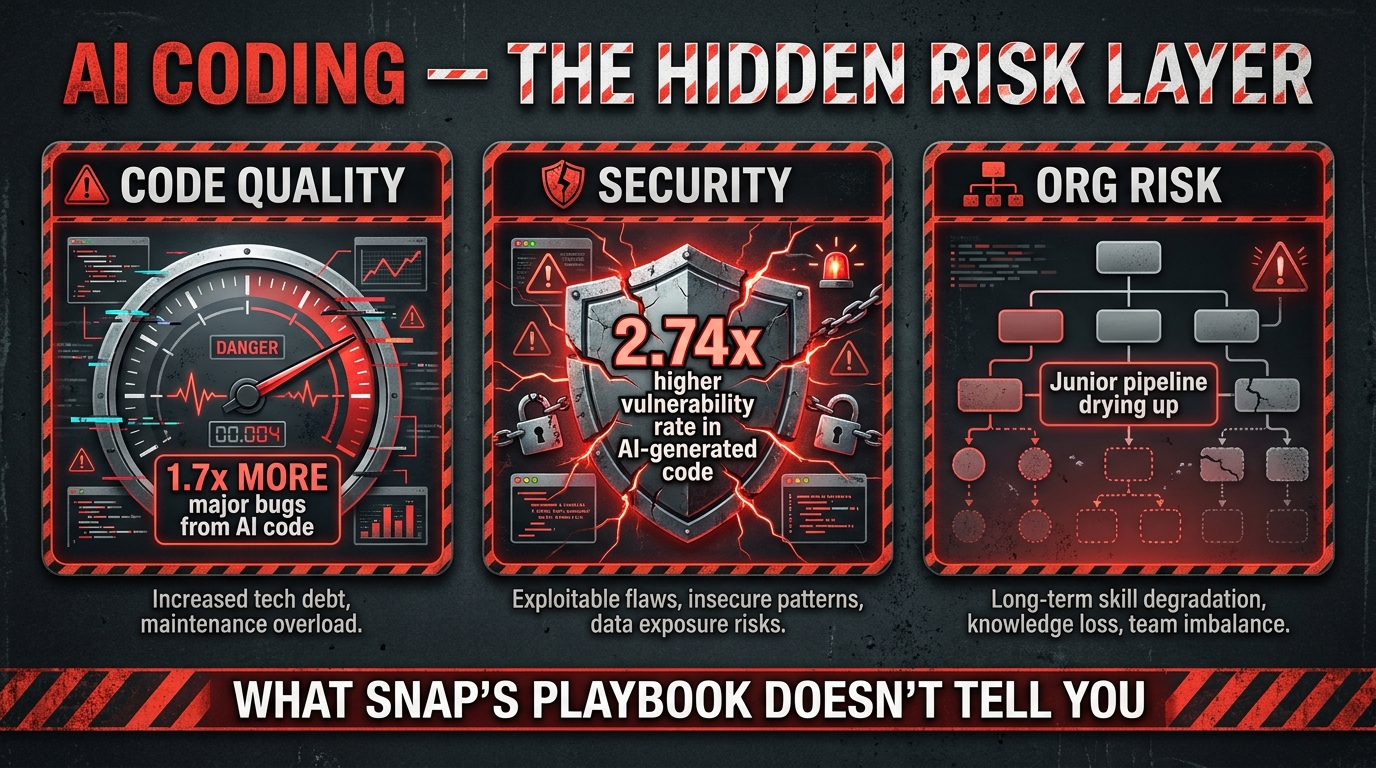

Third, the quality risks in AI-generated code are real and documented. Research in 2026 found that AI-generated code produces 1.7 times more major bugs and carries a 2.74 times higher vulnerability rate than human-written code under equivalent conditions. Companies rushing to hit a headline AI-code percentage without robust review infrastructure are trading a headcount problem for a code quality problem — which tends to be more expensive to fix downstream.

What the Critics Get Wrong

That said, dismissing Snap’s transformation as pure financial theater ignores the substantive engineering reality. The productivity gains from AI coding tools are well-documented and measurable — not theoretical. GitHub’s own research has consistently shown 15–34% productivity improvements from Copilot at scale. Cursor data shows 60% more PRs per engineer per week. Claude Code’s adoption rate among professional engineers (95% weekly usage for half of all work) reflects genuine utility, not marketing.

More importantly, the companies that dismiss the AI coding shift as hype are the ones most likely to find themselves at a serious competitive disadvantage within 18 months. Whether the specific framing around any given layoff announcement is honest or performative, the underlying productivity dynamics are real. Skepticism about the narrative is warranted. Skepticism about the technology is not.

The Playbook for Replicating Snap’s Approach at Your Company

Most engineering leaders reading about Snap’s 65% figure are not running a 5,000-person tech company with the capital to absorb $95–130 million in severance charges. The question isn’t how to replicate Snap’s restructuring. It’s how to replicate the capability that enabled it — an engineering organization genuinely running at higher output per person — regardless of your current team size or structure.

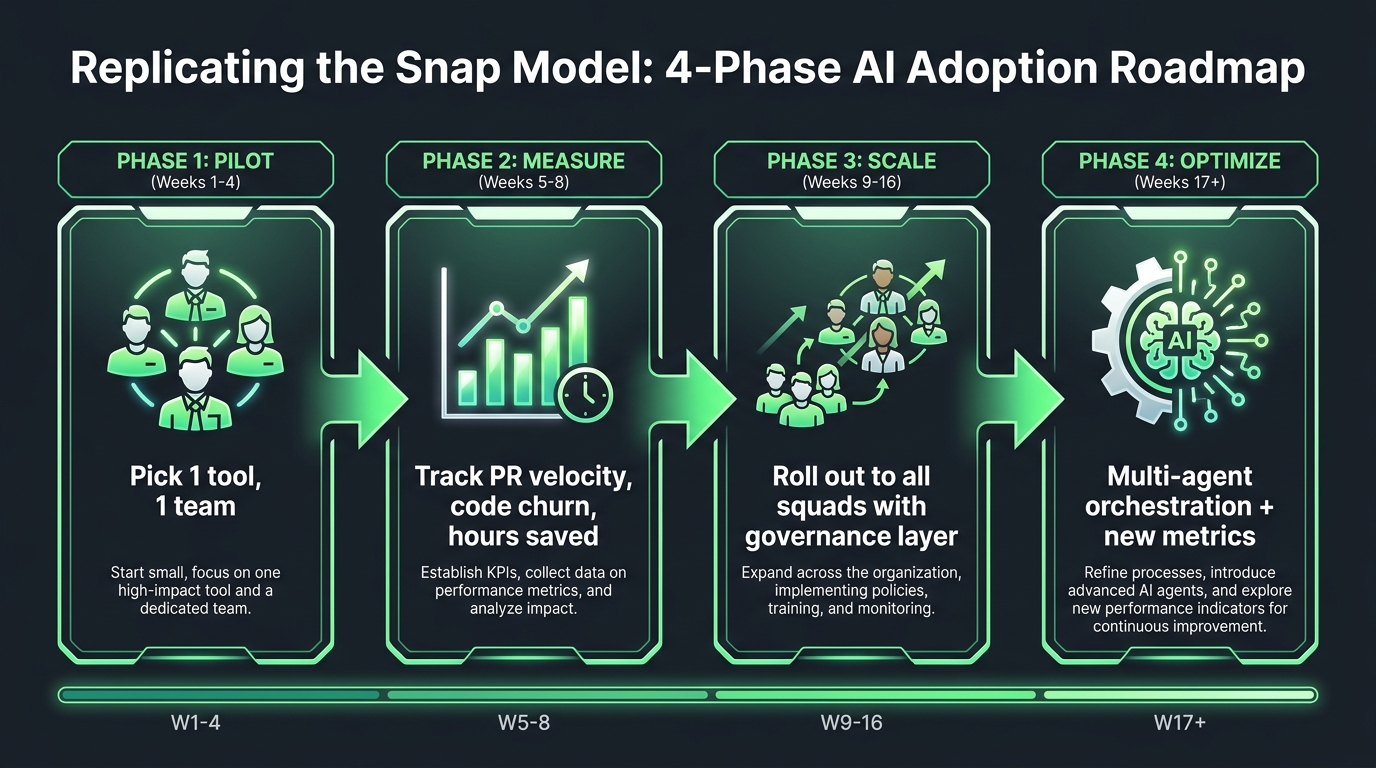

Phase 1: The Constrained Pilot (Weeks 1–4)

Start with one team, one tool, and a clearly defined measurement framework before touching anything else. Select a squad of three to five engineers who are already technically strong and open to changing their workflow. Deploy a single AI coding tool — Claude Code or Cursor for most teams; GitHub Copilot for organizations with strict compliance requirements. The goal in this phase is not productivity transformation. It’s baseline measurement. Track PR throughput, cycle time, and hours spent on implementation-level tasks before AI assistance. You need a before picture to measure against.

Run this for four weeks with deliberate note-taking. What kinds of tasks is the AI handling well? Where does it slow the team down with bad suggestions or require extensive review? What does the code review burden look like on the output side? The answers to these questions will shape your Phase 2 deployment far more than any vendor benchmark can.

Phase 2: Establish the Measurement Infrastructure (Weeks 5–8)

Before scaling, build the measurement layer. This is the most commonly skipped step in AI coding deployments — and the most commonly regretted omission. You need visibility into:

- AI code percentage — how much of merged code originated from AI suggestions

- PR cycle time — time from first commit to merge

- Code churn rate — how often newly written code is deleted or significantly rewritten within 30 days, a proxy for code quality

- Bug introduction rate in AI-generated versus human-written code

- Developer time savings — direct survey or time-tracking tool data

The industry benchmark for code churn in AI-generated code is 5.7–7.1%, compared to 3–4% for experienced human developers. If your team’s AI-generated code churn is running higher, you have a prompt quality problem, a review process problem, or both — and you need to diagnose it before scaling the workflow to your full organization.
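As a rough sketch of what that measurement layer computes, the snippet below derives three of the metrics listed above (AI code percentage, PR cycle time, and 30-day churn) from made-up sample numbers. The function names and data are assumptions for illustration. In practice the inputs would come from your version control and review tooling, and bug introduction rate and time savings would need their own data sources.

```python
from datetime import datetime
from statistics import median


def pr_cycle_hours(first_commit: datetime, merged: datetime) -> float:
    """Hours from first commit to merge for one pull request."""
    return (merged - first_commit).total_seconds() / 3600


def ratio(part: int, whole: int) -> float:
    """Share of `part` in `whole`; returns 0.0 when `whole` is zero."""
    return part / whole if whole else 0.0


# Made-up sample data for one squad over one reporting window.
prs = [
    (datetime(2026, 5, 4, 9, 0), datetime(2026, 5, 4, 15, 0)),   # 6 h
    (datetime(2026, 5, 4, 10, 0), datetime(2026, 5, 5, 10, 0)),  # 24 h
    (datetime(2026, 5, 5, 8, 0), datetime(2026, 5, 5, 12, 0)),   # 4 h
]
median_cycle = median(pr_cycle_hours(start, end) for start, end in prs)

ai_lines_merged, total_lines_merged = 1300, 2000
ai_lines_reworked_30d = 85

ai_share = ratio(ai_lines_merged, total_lines_merged)     # AI code percentage
ai_churn = ratio(ai_lines_reworked_30d, ai_lines_merged)  # churn within 30 days

print(f"median PR cycle: {median_cycle:.1f} h")
print(f"AI code share: {ai_share:.0%}, AI churn: {ai_churn:.1%}")
```

The median is used for cycle time rather than the mean so that one long-running PR does not dominate the figure.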

Phase 3: Scaled Rollout with Governance (Weeks 9–16)

Roll out across all engineering squads, but with a governance layer in place from day one. This includes: a standardized prompt library for common development patterns at your company; a code review protocol that specifically addresses AI-generated code (who reviews it, with what checklist, and what automatic rejection criteria look like for security-sensitive areas); and a shared Slack or Teams channel where engineers can share what’s working, what prompts are producing the best results for your specific codebase, and what AI is consistently getting wrong.
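The review protocol can start as something very small. The sketch below shows one possible routing rule, assuming a hypothetical list of security-sensitive path prefixes and hypothetical step names. It is a sample policy, not a description of how Snap or any vendor handles review.

```python
# Hypothetical path prefixes that this sample policy treats as security-sensitive.
SENSITIVE_PREFIXES = ("auth/", "billing/", "infra/secrets/")


def review_requirements(changed_files: list[str], ai_generated: bool) -> list[str]:
    """Return the review steps a pull request needs under this sample policy."""
    steps = ["standard_review"]
    if ai_generated:
        # Every AI-authored change gets the AI-specific checklist.
        steps.append("ai_checklist")
        # AI-authored changes touching sensitive paths also need a security pass.
        if any(path.startswith(SENSITIVE_PREFIXES) for path in changed_files):
            steps.append("security_review")
    return steps


sensitive_pr = review_requirements(["auth/login.py", "README.md"], ai_generated=True)
docs_pr = review_requirements(["docs/setup.md"], ai_generated=True)
print(sensitive_pr)  # ['standard_review', 'ai_checklist', 'security_review']
print(docs_pr)       # ['standard_review', 'ai_checklist']
```

Whether human-written changes to the same paths should also trigger a security pass is a policy choice. This sketch only encodes the AI-specific part.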

The compound value in an organization-wide AI coding deployment isn’t just individual productivity gains. It’s institutional learning — each engineer’s discoveries about how to work effectively with AI feeding back into a shared knowledge base that makes the whole team faster. Organizations that skip governance typically have individual engineers who are power users and everyone else who barely uses the tools. The power users’ knowledge stays siloed, and the organization never achieves the multiplied output that Snap achieved.

Phase 4: Multi-Agent Orchestration and the Senior-Shift (Weeks 17+)

At the maturity end of AI coding adoption, teams stop thinking about AI as a tool individual engineers use and start thinking about AI as a layer of the engineering infrastructure. This is the multi-agent orchestration stage: code generation agents, PR review agents, test coverage agents, and infrastructure configuration agents running in concert, with human engineers serving as orchestrators rather than implementers. This is the operating model Snap is running at scale.
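To make the orchestration idea concrete, here is a deliberately simplified sketch in which each agent is a plain function and a human decision closes the loop. Everything in it is hypothetical: the `ChangeSet` type, the agent names, and the approval rule are stand-ins, and real agents would call out to actual tools rather than append a note.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ChangeSet:
    """Hypothetical unit of work passed from agent to agent."""
    spec: str
    notes: list[str] = field(default_factory=list)
    approved: bool = False


Agent = Callable[[ChangeSet], ChangeSet]


def make_agent(name: str) -> Agent:
    """Build a stand-in agent that only records that it ran."""
    def run(change: ChangeSet) -> ChangeSet:
        change.notes.append(f"{name}: done")
        return change
    return run


def orchestrate(
    change: ChangeSet,
    agents: list[Agent],
    human_approves: Callable[[ChangeSet], bool],
) -> ChangeSet:
    """Run each agent in order, then require an explicit human decision."""
    for agent in agents:
        change = agent(change)
    change.approved = human_approves(change)
    return change


pipeline = [make_agent(n) for n in ("codegen", "tests", "pr_review", "infra_config")]
result = orchestrate(
    ChangeSet(spec="Add export rate limit"),
    pipeline,
    human_approves=lambda c: len(c.notes) == 4,  # placeholder for a real review
)
print(result.approved, result.notes)
```

The point of the structure is that approval is a separate, final step owned by a person, which matches the orchestrator role described above.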

Getting here requires a deliberate organizational shift. Senior engineers need to redirect a meaningful portion of their time toward writing better specifications, improving the prompts and context that AI agents receive, and building the evaluation frameworks that determine whether AI output is acceptable. This is harder to do — it requires a different kind of thinking than implementation-focused engineering — but it’s where the real productivity multiplication lives.

Measuring What Matters: New Metrics for AI-Augmented Engineering Teams

Traditional software engineering metrics break down badly in an AI-augmented environment. Lines of code per engineer is useless when AI can generate a thousand lines of adequate-but-not-great code in minutes. Pull requests per week can skyrocket while actual feature quality declines. Engineering leaders who try to evaluate their AI coding adoption using pre-AI KPIs will either declare false success or miss real problems.

Metrics That Work in 2026

AI code percentage with churn overlay: Track what percentage of merged code is AI-generated, but always view it alongside the churn rate. High AI percentage with low churn (under 5%) indicates effective integration. High AI percentage with high churn (above 7%) indicates quality problems that are generating rework overhead.
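The overlay can be expressed as a small classification rule. In the sketch below the 5% and 7% thresholds come from the paragraph above. The "borderline" label for the band in between and the function names are assumptions added for illustration.

```python
def classify_churn(churn: float) -> str:
    """Map a 30-day churn rate (as a fraction, 0.0 to 1.0) to a band."""
    if churn < 0.05:
        return "effective integration"
    if churn > 0.07:
        return "quality problem"
    return "borderline"  # assumption: the text does not name the 5-7% band


def overlay(ai_share: float, churn: float) -> str:
    """Report AI code share together with its churn band, never on its own."""
    return f"{ai_share:.0%} AI-generated, {churn:.1%} churn: {classify_churn(churn)}"


healthy = overlay(0.65, 0.043)
risky = overlay(0.65, 0.082)
print(healthy)  # 65% AI-generated, 4.3% churn: effective integration
print(risky)    # 65% AI-generated, 8.2% churn: quality problem
```

Two teams with the same 65% share land in different bands here, which is why the share figure should not be read without the churn figure next to it.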

PR cycle time: Sub-8-hour PR cycles are the benchmark for elite AI-augmented teams in 2026. If your cycle times aren’t improving meaningfully after 60 days of AI tool adoption, you have an adoption problem or a review-bottleneck problem, not a tool problem.

Feature cycle time, end-to-end: Zoom out from PRs to full features. Track the time from specification finalization to production deployment. AI coding tools should compress this number. If they aren’t, the bottleneck has moved upstream to specification quality or downstream to QA and deployment — and that’s where your next investment should go.

Specification completeness rate: In a spec-driven engineering environment, incomplete specs are the primary cause of poor AI output. Track how often engineering specifications have to be revised after an AI’s first pass at implementation reveals ambiguity. This is an indirect measure of your team’s spec-writing maturity — which is now a core engineering skill.

Developer time-on-high-judgment-work: Survey engineers quarterly on what percentage of their weekly hours they’re spending on high-judgment tasks (system design, architecture decisions, complex debugging, stakeholder communication) versus low-judgment tasks (implementation, documentation, test writing). AI adoption should visibly shift this ratio. If engineers still report spending 60% of their time on implementation work after six months of AI tool deployment, adoption is shallow.

The ROI Benchmark

Industry data in 2026 puts the average ROI for AI coding tool adoption at 2.5–3.5x for well-run deployments, with top-quartile teams achieving 4–6x. At an industry-standard cost of $200–600 per developer per month for a multi-tool stack, a team of 20 engineers spending $4,000–$12,000 per month on AI tools should be returning $10,000–$72,000 per month in productive capacity. The break-even timeline at typical adoption rates runs 12–18 months. Companies that still treat AI coding tools as an indefinite pilot rather than a capital allocation decision are leaving measurable value on the table.
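The arithmetic behind that range is easy to reproduce with your own numbers. The sketch below assumes, as the figures above imply, that the low end pairs the lowest per-seat cost with the 2.5x multiple and the high end pairs the highest per-seat cost with the 6x multiple. The function name and signature are illustrative.

```python
def monthly_value_range(
    engineers: int,
    cost_low: float,
    cost_high: float,
    roi_low: float,
    roi_high: float,
) -> tuple[tuple[float, float], tuple[float, float]]:
    """Return (spend range, returned-value range) per month for a team."""
    spend = (engineers * cost_low, engineers * cost_high)
    value = (spend[0] * roi_low, spend[1] * roi_high)
    return spend, value


# Figures from the paragraph above: 20 engineers, $200-600 per seat, 2.5x to 6x.
spend, value = monthly_value_range(20, 200, 600, 2.5, 6.0)
print(spend)  # (4000, 12000)
print(value)  # (10000.0, 72000.0)
```

Swapping in your own team size and per-seat cost gives a first-pass target to compare against what the measurement layer actually reports.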

The Talent Reality: Who Benefits and Who Gets Left Behind

The human stakes of Snap’s AI coding shift extend well beyond the 1,000 people who received termination notices in April. The structural change in what makes an engineer valuable is unfolding across the entire industry, and it’s playing out at different speeds for different career stages.

Senior Engineers: The Clear Winners (For Now)

For senior engineers — those with strong system design skills, architectural judgment, and the ability to write precise technical specifications — the AI coding era is unambiguously good. Their comparative advantage over AI grows, not shrinks, as AI gets better at implementation. AI is excellent at writing code from a clear specification. It is not good at knowing whether the specification is the right one, whether the architecture serves the business need in three years, or whether a subtle edge case in a distributed system will cause a production incident. Those are senior-engineer skills, and they’re becoming more valuable as the implementation layer gets cheaper.

Junior and Mid-Level Engineers: A More Complex Picture

The picture is harder for junior and mid-level engineers. Research in 2026 projects 40–60% reductions in routine L0/L1 roles at companies moving aggressively toward AI-augmented teams. These are the roles where a developer primarily writes implementation code from a spec, which is precisely the function that AI now handles at high volume. The career ladder has a missing rung: the path from junior to senior used to run through years of implementation experience that built the contextual knowledge needed for architectural work. If AI absorbs the implementation work, junior developers get fewer of the reps that used to build that knowledge.

This is a real and underappreciated problem. Companies that cut their junior pipelines to capture short-term efficiency gains may find themselves without a bench of senior engineers in four to five years. The best engineering organizations in 2026 are actively redesigning their junior developer programs to build architectural thinking and spec-writing skills from the beginning of a career, rather than treating those as skills that emerge naturally after years of implementation work.

Product Managers and Non-Engineering Roles

Snap’s April cuts fell heavily on product managers and partnership roles — not engineers. This tracks with a broader industry pattern: as small engineering squads gain the ability to ship more with less coordination overhead, the demand for intermediate coordination roles declines. The PMs who will thrive are the ones who write precise, testable product specifications that AI agents can act on directly. Those who add value primarily through facilitation and communication may find their role definition shifting under them faster than expected.

Peer Pressure: How Atlassian, Pinterest, Duolingo, and Others Are Adapting

Snap is not operating in isolation. The same forces are reshaping engineering teams across the tech industry, with different companies taking different approaches to the same underlying shift.

Atlassian laid off approximately 1,600 employees — 10% of its workforce — in March 2026. Co-founder Scott Farquhar’s public framing was measured: he explicitly pushed back on the “AI replaces people” narrative, arguing that AI changes the efficiency of work rather than the mix of skills needed. But the financial reality is that improved productivity from AI tools does inherently reduce the number of people needed to accomplish the same output. The framing and the math are in some tension.

Pinterest announced plans to cut 15% of its workforce in 2026, explicitly redirecting the cost savings toward AI product initiatives. Rather than framing the cuts as AI-driven, Pinterest positioned them as investment reallocation — a shift of capital from labor costs to AI tooling and infrastructure. The destination is the same; the narrative architecture is different.

Duolingo has taken the most transparent approach: requiring managers to affirmatively demonstrate that AI cannot perform a function before approving a new hire. This is effectively a hiring-side version of Snap’s layoff-side policy. The headcount impact is the same — fewer people do equivalent work — but it arrives gradually through attrition and hiring restraint rather than through a single restructuring event. For engineering leaders managing organizations that don’t want to absorb the reputational and cultural cost of mass layoffs, Duolingo’s approach may be the more sustainable model.

Across the sector, tech layoffs through mid-April 2026 totaled approximately 99,283 jobs, with nearly half attributed — accurately or not — to AI productivity gains. The pattern is clear: companies are using their AI coding productivity improvements to right-size their engineering organizations, whether they frame it that way or not.

Implementation Risks: Code Quality, Security, and Organizational Debt

A comprehensive assessment of Snap’s AI coding model has to grapple honestly with its risks. Replicating the efficiency gains without a corresponding investment in risk mitigation is how organizations end up with a different, more expensive set of problems.

Code Quality Degradation

The 2026 research on AI-generated code quality is not uniformly positive. Studies measuring bug density and code churn consistently find that AI-generated code — particularly in environments where review processes haven’t been adapted for AI authorship — introduces more defects than well-written human code. The 1.7x major bug rate and 2.74x higher vulnerability rate cited in security research represent worst-case conditions (minimal review, poor specification quality), but they’re not hypothetical. They reflect what happens when organizations adopt AI coding tools without simultaneously upgrading their review infrastructure.

The mitigation is straightforward but requires investment: dedicated AI code review checklists, automated security scanning on AI-generated code, and a culture where engineers are expected to own and understand every line of code in a PR regardless of who — or what — wrote it first. The review burden doesn’t disappear when AI writes the code. It shifts.

Security and Compliance Risks

AI coding tools generate code from training data that includes vast amounts of public code repositories — which means they can inadvertently reproduce patterns from vulnerable, deprecated, or license-restricted code. Organizations in regulated industries (finance, healthcare, enterprise SaaS with complex compliance requirements) need to treat AI-generated code as requiring a separate security review pass, not just a standard code review. This is particularly relevant for authentication logic, data handling, and API integration code — all areas where AI tools are confident but error rates are high.

The Organizational Debt Problem

Perhaps the most underappreciated risk in aggressive AI coding adoption is organizational debt: the long-term consequences of hollowing out your junior engineering pipeline faster than you can build a replacement path to experienced senior engineers. Snap has the scale and resources to absorb this risk in ways that most engineering organizations don’t. A 50-person engineering team that cuts its junior tier to achieve short-term efficiency may find itself in a hiring crisis in 2028 when it needs experienced engineers and has no internal bench to draw from.

The responsible version of the Snap model includes a deliberate investment in reskilling — moving engineers who were doing implementation work into the specification-writing, architecture, and AI orchestration roles that the small-squad model actually needs. This is harder and slower than a layoff announcement, but it’s the approach that builds a sustainable engineering organization rather than a temporarily efficient one.

Beyond the Headlines: Building the AI-Native Engineering Organization

Snap’s April 2026 announcement will be studied in business schools for a decade. But the most important thing it signals isn’t about headcount or cost savings or stock prices. It’s about the pace at which the definition of an effective engineering organization is changing — and the widening gap between organizations that are actively adapting and those that are treating AI coding as an optional efficiency experiment.

The Engineering Org You Need to Build

The AI-native engineering organization isn’t the one that has adopted the most tools or cut the most headcount. It’s the one where:

- Senior engineers spend the majority of their time on specification, architecture, and AI orchestration — not implementation

- AI agents run continuously across the SDLC, not just in the code editor

- Measurement infrastructure tracks AI code quality in real time, flagging churn and vulnerability risks before they reach production

- Junior developers are being trained on spec-driven engineering from their first week, not learning it as a late-career skill

- Infrastructure efficiency — compute, data, pipeline cost — is optimized in parallel with human efficiency, not as a separate initiative

The Timeline That Matters

Snap went from early AI coding adoption to 65% AI-generated code across its entire engineering organization within approximately two years. Given that the tools available in 2026 are substantially better than those available in 2024, the same transition should be achievable in 18 months or less for teams that start today with a deliberate strategy. For teams that haven’t started, the clock is running — and their competitors may already be several phases ahead.

What to Do This Week

If you’re an engineering leader who has read this far and is still uncertain about where to begin, here is the minimum viable action set:

- Pick one team and one tool. Start with GitHub Copilot if your organization needs compliance coverage from day one, or Cursor if you want maximum throughput on a team ready to move fast.

- Establish baseline metrics before launch. You cannot demonstrate ROI without a before picture. Measure PR cycle time, code churn, and developer hours on implementation tasks before the pilot begins.

- Add a code review protocol for AI output. Even if it’s lightweight to start, your team needs a shared understanding of how AI-generated code is evaluated differently from human-generated code.

- Talk to your senior engineers about spec-writing as a core skill. The shift toward specification-driven engineering is the most important cultural and capability change the AI coding era requires. Start that conversation now.

- Measure after 60 days and make a scaling decision. Don’t let a pilot run indefinitely without a decision point. Sixty days is enough time to see whether the productivity gains are real in your environment and whether you should accelerate adoption.

Snap’s crucible moment was dramatic, public, and painful for many of the people involved. But the underlying message it sends to every engineering organization watching is straightforward: the teams that figure out how to work at 65% AI-generated code — or higher — will be operating at a cost and velocity profile that teams stuck at 10% or 20% simply cannot match indefinitely. The question isn’t whether this transition is coming. It’s whether you’re going to lead it or chase it.