There is a fundamental problem baked into every Amazon product listing: the customer cannot pick up the product. They cannot turn it over, peer at the stitching, feel the weight, or hold it up to the light. Every purchase is an act of faith, and the only thing standing between that shopper and a click away to a competitor is your product imagery.

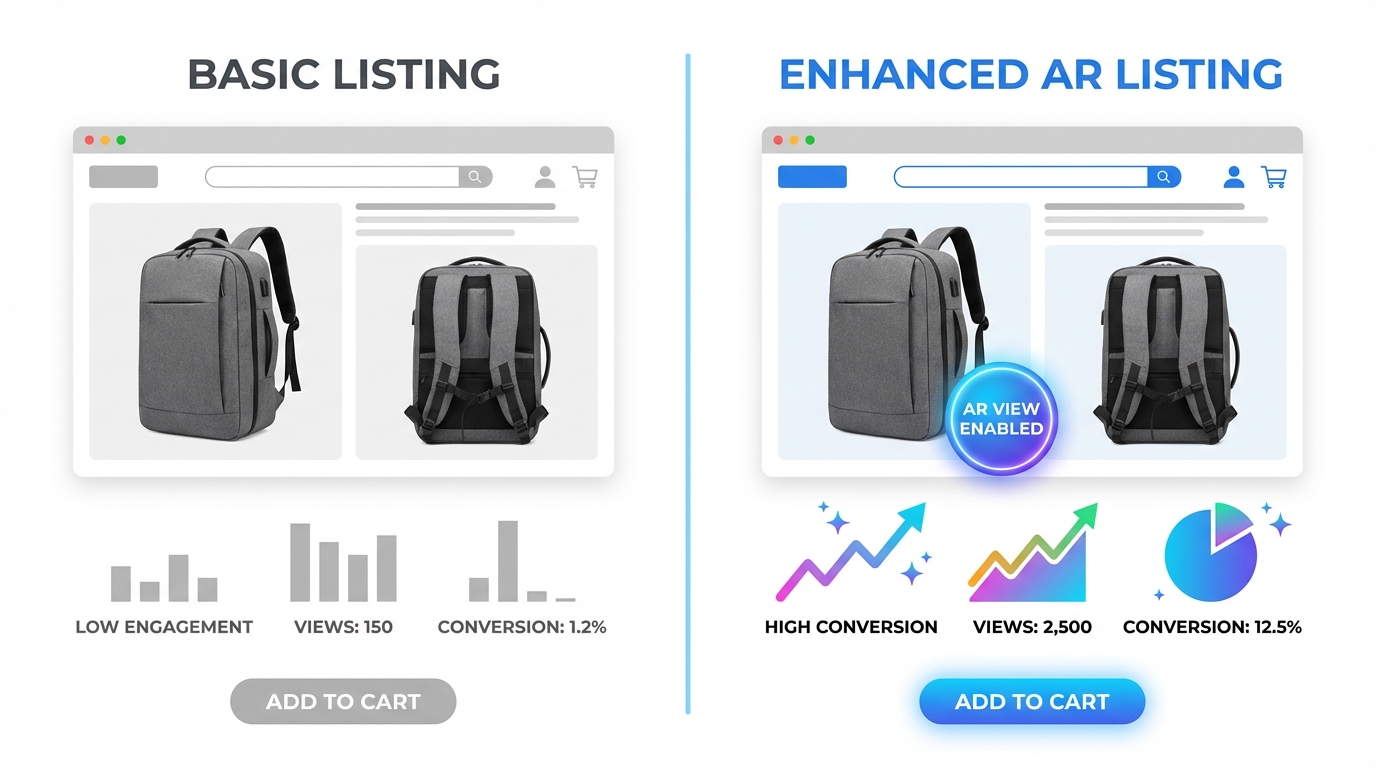

Most sellers know this in theory. In practice, the vast majority of Amazon listings still rely on the same three or four flat, static photographs that haven’t changed since the ASIN was first created. Meanwhile, a growing number of brand-registered sellers are quietly watching their conversion rates climb — not because they rewrote their bullet points, launched another PPC campaign, or chased review velocity — but because they changed how shoppers experience their product visually before buying.

This article is not about making your images “look nicer.” It’s about the specific mechanics of 360-degree spin views, 3D model uploads, and Amazon’s AR features — what the data actually shows, who qualifies, how to execute without a large production budget, and how to build a visual asset stack that does measurable work at every stage of the shopper’s decision process.

If you have already read generic advice about “using high-quality images,” this is something different. What follows is the operational reality of visual commerce on Amazon in 2026 — including a policy shift in early 2024 that most sellers still haven’t caught up with.

The Visual Trust Gap: Why Shoppers Need More Than a Pretty Photo

Before getting tactical, it’s worth understanding the psychological problem that 360° and AR imagery actually solves — because the solution only makes sense when you see how deep the problem runs.

According to the Amazon Shopper Report, which surveyed 1,000 shoppers across the US, UK, Germany, France, Spain, and Italy, 92% of Amazon shoppers cite detailed product images as a key factor in converting their interest into a purchase — second only to price at 95%. That ranking puts imagery ahead of reviews, shipping speed, and brand reputation. Shoppers, in other words, are looking at your images before they read a single word of your listing.

The “imagination gap” in online retail

Neuroscience and consumer behavior research consistently show that buying decisions are driven by the buyer’s ability to mentally simulate ownership of a product. When you pick up a chair in a furniture store, your brain is already placing it in your living room. When you hold a pair of shoes, you’re imagining them on your feet. Online shopping strips out this simulation entirely — and a flat photograph does almost nothing to rebuild it.

This is why static images, no matter how professionally shot, create what researchers call an “imagination gap”: a residual uncertainty about whether the product will actually look, fit, and function as expected in the buyer’s real-world context. That uncertainty is one of the main reasons shoppers add items to carts and never check out. It’s also why 22% of all e-commerce returns are triggered specifically by products not matching their photos — not defects, not sizing issues, but a failure of visual representation.

The mobile multiplier

The problem is compounded by the device most shoppers now use. With 73% of Amazon shoppers regularly browsing via smartphone, the limitations of a 1,200-pixel static JPEG are even more severe. On a small screen, details disappear. Texture becomes indistinguishable from color. Scale becomes guesswork. Research shows mobile shoppers abandon listings 2.1 times faster than desktop shoppers when they encounter visual friction — unclear sizing, missing lifestyle context, or no way to examine product details up close.

Interactive imagery — the kind that lets a shopper spin a product, zoom into a seam, or drop a piece of furniture into a photo of their own living room — collapses the imagination gap. It replaces uncertainty with simulated experience, and simulated experience is far closer to the certainty of holding a physical product than any static shot can achieve.

What Happened When Amazon Killed Traditional 360° Photography in January 2024

In January 2024, Amazon made a policy change that most sellers are still trying to fully understand: the platform formally discontinued support for the traditional 360-degree product photography format — the animated GIF-style spinning images that had become common on many listings. This wasn’t a minor update buried in Seller Central. It was a deliberate architectural shift in how Amazon intends for interactive product views to work going forward.

The reasoning was straightforward. Traditional 360-degree photography — which involves capturing 24 to 72 individual frames and stitching them into a spinning animation — produces large file sizes, loads slowly on mobile, and cannot be adapted for augmented reality features. Amazon’s infrastructure had moved on. The platform is now built around 3D models as the primary vehicle for interactive product visualization.

Why many sellers missed the memo

The discontinuation of 360° photography created a knowledge gap that persists into 2026. Sellers who had invested in 360° photo rigs or paid agencies for spinning images found themselves with assets that couldn’t be uploaded. Many responded by doing nothing — reverting to static images and assuming the feature was simply gone. Others conflated “360° photography” with “interactive spin view” and assumed the entire capability had been removed.

Neither assumption is correct. The interactive spin experience is alive, well, and delivering stronger results than ever. It’s just delivered through a different medium. Instead of a spinning animation built from dozens of photographs, Amazon’s interactive views are now rendered from 3D models — digital objects that can be spun in real time, zoomed, lit from any angle, and placed into an augmented reality environment by the shopper’s own smartphone camera.

What this means for competitive positioning

The transition to 3D models created a short-term competitive gap that still exists today. Because 3D model creation has a steeper learning curve and higher upfront cost than traditional photography, many sellers have opted out entirely. As a result, the share of listings with interactive spin views or AR capability is still very low in most product categories, so sellers who do make the investment stand out substantially in search results and on listing pages.

The January 2024 policy shift, in other words, didn’t end the opportunity for sellers who embrace interactive imagery. It filtered out the sellers who weren’t willing to adapt, leaving more visible runway for those who are.

The 3D Model Era: How Amazon’s Spin View Actually Works Today

Understanding how Amazon’s current interactive imagery system works is essential before investing time or money into it. The feature is often described loosely as “360-degree views,” but the technical reality is more precise — and more powerful.

From photographs to digital objects

When Amazon displays a “spin view” of a product today, it is rendering a 3D model file in real time inside the browser or app. The shopper can grab and rotate the product with their finger or cursor, zoom in to examine texture and detail at any angle, and in eligible categories, activate the “View in Your Room” AR feature to place the product in their own physical space using their device’s camera.

This is fundamentally different from a spinning animation. A 3D model is not a sequence of photographs — it is a mathematical representation of the product’s geometry, surface materials, and textures. Amazon renders it on the fly, which means the shopper controls the experience rather than watching a pre-set rotation.

File requirements and technical specifications

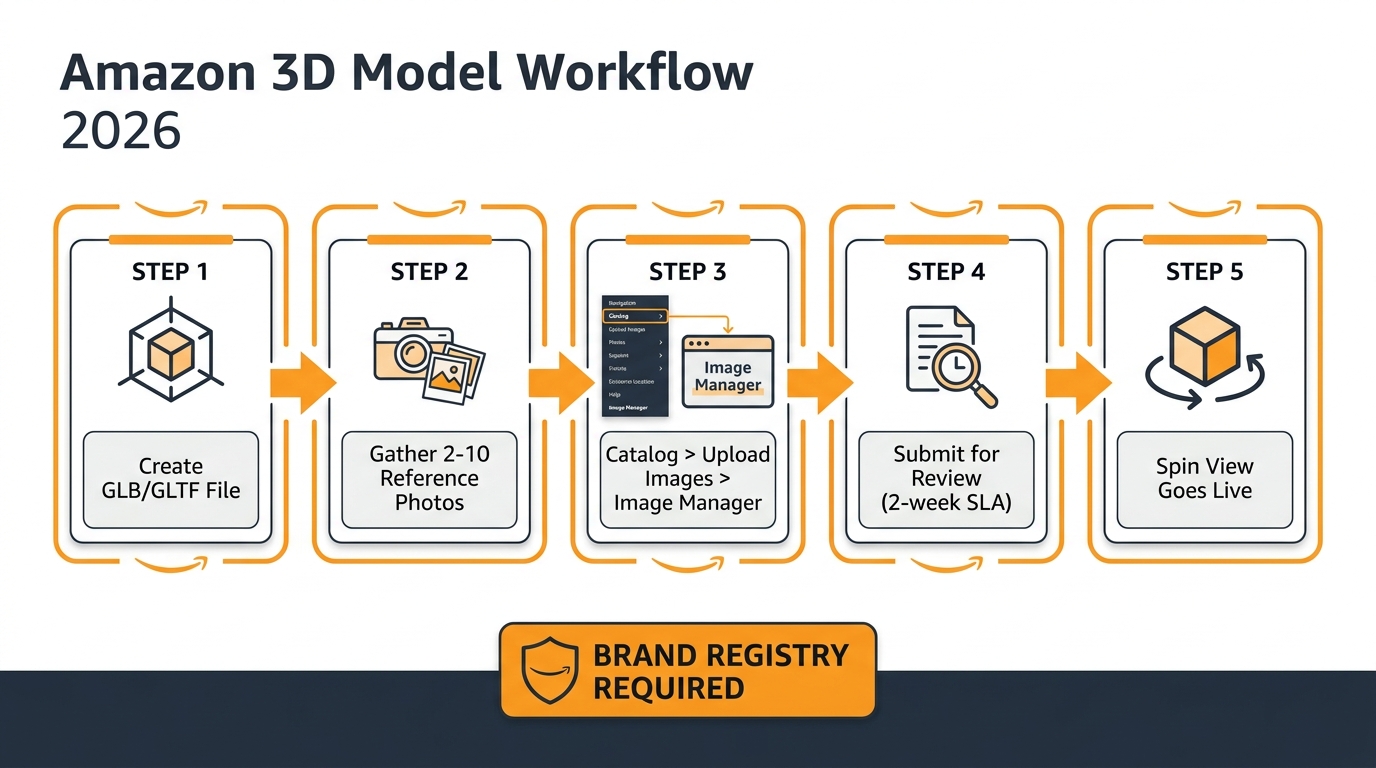

Amazon accepts 3D models in GLB or glTF format. GLB, the binary container form of glTF (GL Transmission Format), is generally preferred because it packages all textures and geometry into a single file. Key technical requirements as of 2026 include:

- Polygon count: Maximum 1 million triangles per model; Amazon’s recommended sweet spot is 150,000–200,000 for optimal loading performance

- No cameras attribute: The model must not include embedded camera objects

- Extensions: No KHR_materials_specular extension or other incompatible shader types

- Textures: Accurate material textures that represent real-world product appearance — Amazon will reject submissions that appear inaccurate

- Reference photos: 2–10 high-quality photographs of the actual physical product submitted alongside the model to verify accuracy

- Dimensions: Accurate real-world dimensions required for AR placement to work correctly

Files can be validated before submission using the Khronos glTF Validator, a free open-source tool that identifies technical errors before Amazon’s review team sees them — saving the two-week review turnaround on easily fixable mistakes.
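
Some of the requirements above can also be pre-checked with a few lines of scripting before running the full validator. The sketch below is a rough illustration, not Amazon's or Khronos's tooling: it reads the JSON chunk of a GLB file using the container layout from the glTF 2.0 specification and flags embedded cameras, the KHR_materials_specular extension, and a triangle count above the 1 million limit quoted above. The function names are hypothetical, and the triangle count is an approximation that counts each mesh once, ignoring instancing.

```python
import json
import struct

GLB_MAGIC = 0x46546C67     # ASCII "glTF", little-endian
JSON_CHUNK = 0x4E4F534A    # ASCII "JSON", little-endian
MAX_TRIANGLES = 1_000_000  # limit quoted in the requirements list above


def read_glb_json(data):
    """Return the JSON scene description embedded in a GLB container."""
    magic, version, _total_length = struct.unpack_from("<III", data, 0)
    if magic != GLB_MAGIC or version != 2:
        raise ValueError("not a glTF 2.0 binary (GLB) file")
    chunk_length, chunk_type = struct.unpack_from("<II", data, 12)
    if chunk_type != JSON_CHUNK:
        raise ValueError("first chunk is not JSON")
    return json.loads(data[20:20 + chunk_length].decode("utf-8"))


def count_triangles(gltf):
    """Approximate triangle count: each mesh counted once, no instancing."""
    accessors = gltf.get("accessors", [])
    total = 0
    for mesh in gltf.get("meshes", []):
        for prim in mesh.get("primitives", []):
            if prim.get("mode", 4) != 4:  # 4 = TRIANGLES, the default mode
                continue
            if "indices" in prim:
                total += accessors[prim["indices"]]["count"] // 3
            else:
                total += accessors[prim["attributes"]["POSITION"]]["count"] // 3
    return total


def preflight(data):
    """Return a list of human-readable problems; empty means none found."""
    gltf = read_glb_json(data)
    problems = []
    if gltf.get("cameras"):
        problems.append("model contains embedded cameras")
    if "KHR_materials_specular" in gltf.get("extensionsUsed", []):
        problems.append("model uses KHR_materials_specular")
    triangles = count_triangles(gltf)
    if triangles > MAX_TRIANGLES:
        problems.append(f"{triangles} triangles exceeds {MAX_TRIANGLES}")
    return problems
```

A call such as `preflight(open("product.glb", "rb").read())` (the file name is a placeholder) returns an empty list when none of these three issues is present. It does not replace the Khronos validator, which checks far more than this.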

The submission process step by step

Upload happens through Seller Central under Catalog → Upload Images → Image Manager tab. Search for the ASIN or SKU, verify that the Registered Brand Owner icon is showing (this step is required), and select 3D Models → Upload 3D Model. Submit the GLB file alongside reference photos and product dimensions. Amazon’s review team typically takes up to two weeks to approve or reject the submission, with feedback provided on rejections. Once approved, the spin view and AR badge appear on the listing automatically.

Brand Registry enrollment is non-negotiable. Sellers without it cannot access the 3D model upload feature at all.

“View in Your Room” and “View in 3D” — Who Qualifies and How to Enable It

Amazon operates two distinct interactive visualization features that are often confused with each other. Understanding the difference — and which one applies to your product — is important for setting the right production and submission expectations.

View in 3D: the spin experience on listing pages

“View in 3D” is the interactive spin capability that appears on the main product detail page. When activated, shoppers see an icon on the image gallery inviting them to rotate and zoom the product in 3D. This feature is available across a wide range of categories including:

- Shoes and footwear

- Eyewear (sunglasses, glasses frames)

- Home and furniture

- Consumer electronics

- Beauty and personal care

- Baby products

- Sports and outdoor equipment

- Toys and games

- Pet supplies

- Automotive accessories

This list is expanding. Amazon has been systematically broadening the eligible categories as 3D model production becomes more widespread and its review infrastructure scales up.

View in Your Room: the full AR experience

“View in Your Room” is a separate, more powerful feature that uses the shopper’s device camera to place the product into their actual physical environment using augmented reality. The shopper points their phone at their floor, table, or wall, and sees a true-to-scale 3D rendering of the product appear in their space — positioned accurately, casting realistic shadows, and viewable from any angle by moving the phone.

Eligibility is more specific: any product that would naturally sit on a floor or table, or be mounted to a wall or vertical surface. Practically, this covers the bulk of the furniture, home décor, lighting, kitchen appliance, and storage categories. Supported marketplaces include amazon.com, amazon.ca, amazon.co.uk, amazon.de, amazon.es, amazon.fr, and amazon.it.

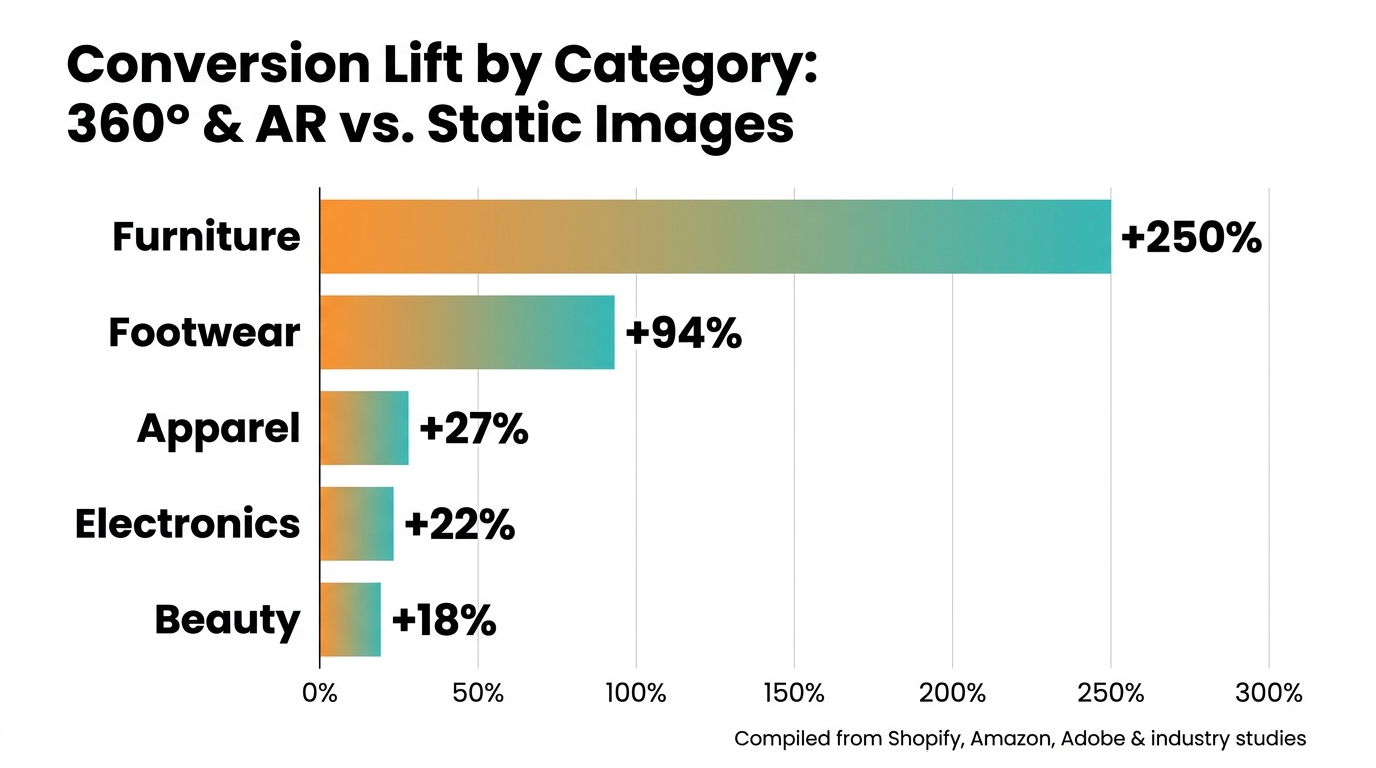

When Amazon analyzed listings using “View in Your Room” in a 2023 study, the feature delivered an average 9% improvement in sales for enrolled products. In high-consideration categories like furniture and home décor, results are considerably more dramatic: Adobe and other industry research has cited conversion lifts as high as 250% over static images for furniture AR visualization, because shoppers who can place a sofa in their living room before buying eliminate virtually all scale and color uncertainty.

The “Virtual Try-On” features for fashion and beauty

Amazon also operates category-specific AR try-on features that sit slightly outside the standard 3D model workflow. Virtual Try-On for Shoes (launched 2022) uses the device camera to overlay shoe imagery onto the shopper’s actual feet. Similar functionality exists for eyewear. These features are managed through Amazon’s fashion and brand programs rather than the standard 3D model upload path, and eligibility is typically connected to brand participation agreements rather than a standard self-service upload process.

Amazon describes all of these AR features as ongoing experiments and does not publish category-level conversion data. What is known from Amazon’s own public statements is that products with 3D views or virtual try-on features saw purchase rates approximately double compared to listings without them in the period following their introduction, and that eight times more customers engaged with AR-viewed products between 2018 and 2022.

The Return Rate Problem That Nobody Talks About (And Why Visuals Are the Fix)

Most sellers think about product imagery purely in terms of conversion. Getting more shoppers to click “Add to Cart” is the obvious goal. But there is a second, equally important dimension to the imagery problem that rarely makes it into the seller conversation: returns.

Returns are expensive in a way that doesn’t always show up cleanly in an advertising dashboard. FBA return fees, restocking costs, the likelihood of returned inventory being graded as unsellable, and the downstream impact on seller metrics — all of this compounds quickly. In categories like apparel, furniture, and electronics, return rates can reach 15–30% of all units sold. A meaningful fraction of those returns is not the product’s fault at all. It’s the listing’s fault.

The data on image-driven returns

Research consistently points to a direct link between image quality and return rates. The key statistics from 2024–2026 data:

- 22% of e-commerce returns are triggered by products not matching their photographs or descriptions — not defects, sizing errors, or buyer’s remorse, but a failure of visual expectation-setting

- Professional multi-angle photography reduces return rates by 23% compared to basic single-angle images

- Adding 360-degree or interactive views on top of multi-angle photography reduces returns by a further 15%

- 3D model and AR visualization tools deliver return reductions of up to 40% in categories where spatial context matters most (furniture, home goods)

- 34% of all product returns across e-commerce are linked directly to poor product presentation

Put simply: every dollar invested in better imagery does double work. It increases the number of buyers who convert, and it decreases the number of buyers who convert and then return. The economics of this compound in a way that makes visual investment one of the highest-return line items in a seller’s budget.
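
To make the “double work” concrete, here is a small worked calculation. Every number in it (sessions, rates, margin, cost per return) is hypothetical and chosen only for illustration; none comes from the statistics above.

```python
def net_contribution(sessions, conversion_rate, return_rate,
                     margin_per_unit, cost_per_return):
    """Contribution after returns for a given traffic level (hypothetical inputs)."""
    units_sold = sessions * conversion_rate
    units_returned = units_sold * return_rate
    units_kept = units_sold - units_returned
    # Kept units earn margin; returned units earn nothing and incur a handling cost.
    return units_kept * margin_per_unit - units_returned * cost_per_return


# Baseline listing: 10% conversion, 20% of units returned.
before = net_contribution(10_000, 0.10, 0.20, margin_per_unit=12.0, cost_per_return=6.0)

# Improved imagery: conversion rises to 11%, return rate falls to 16%.
after = net_contribution(10_000, 0.11, 0.16, margin_per_unit=12.0, cost_per_return=6.0)

print(f"before: ${before:,.0f}  after: ${after:,.0f}  change: {after / before - 1:+.1%}")
```

In this made-up case a 10% conversion lift on its own would add 10% to contribution, but pairing it with a lower return rate raises the total by roughly 19%, because fewer of the extra units come back.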

The category-specific return problem

Returns driven by visual mismatch are not distributed evenly across categories. They are most severe in categories where real-world context matters most: where a buyer needs to know how something fits in a space, how a color reads under natural light rather than studio lighting, or how a texture feels relative to other materials in the image. Furniture, rugs, curtains, lighting, apparel, footwear, and electronics accessories are the highest-risk categories. Not coincidentally, these are also the categories where 3D and AR solutions deliver the most dramatic return-rate reductions, because the solution directly addresses the source of the uncertainty.

The Categories Where 360°/AR Has the Biggest Impact — and Where It Doesn’t

Not every product benefits equally from 360-degree and AR imagery. Understanding where the ROI is highest — and where additional visual investment delivers diminishing returns — helps sellers prioritize their production budgets intelligently.

Highest-impact categories

Furniture and home décor is the category where AR delivers the most transformative results. Scale uncertainty — “will this sofa fit in my living room?” — is the single biggest barrier to purchase in this category. AR’s ability to place a true-to-scale rendering of a product in the shopper’s actual room eliminates that barrier entirely. Amazon’s own data shows a 9% average sales improvement from “View in Your Room,” and category-specific research puts the conversion lift from AR visualization in the 200–250% range over static images for high-consideration pieces.

Footwear and apparel benefit enormously from interactive spin views and virtual try-on features. The ability to rotate a shoe 360 degrees to inspect the sole, heel construction, and profile addresses the most common pre-purchase questions. Fashion retailers using 360-degree rotation imagery have documented conversion improvements of up to 27% over static front-and-back shots.

Consumer electronics and gadgets benefit from spin views because buyers want to understand port placement, button locations, connection points, and physical scale before committing. A laptop bag, for example, sells much better when a shopper can rotate it to see every pocket, zipper, and strap attachment point rather than relying on separate flat images of each angle.

Eyewear and accessories are strong candidates for virtual try-on features where available, and for spin views more broadly. The physical shape and profile of a pair of sunglasses from multiple angles is difficult to represent in two or three static images alone.

Lower-impact categories

Commodity consumables — vitamins, cleaning products, batteries, and similar items — see minimal conversion benefit from interactive imagery because purchasing decisions are driven almost entirely by price, reviews, and brand recognition. The product’s shape is largely irrelevant to the purchase decision, and there is no spatial context needed.

Books, digital media, and software see similarly little benefit from interactive visualization, for obvious reasons.

Highly standardized components — screws, cables, replacement parts sold by spec number — convert on specification matching, not visual exploration. A buyer purchasing a specific HDMI cable by length and specification does not need to rotate the cable in 3D.

The general rule: the more the purchase decision depends on understanding how a product looks from multiple angles, how it fits in a space, or how it sits on or with the buyer’s body, the more interactive imagery will move the conversion needle.

How to Create 3D Models Without a Studio Budget

The single most common reason sellers cite for not pursuing 3D model uploads is cost. Traditional 3D modeling, in which a CAD artist builds a model of the product from reference photographs, can run anywhere from $150 to $1,500+ per model depending on product complexity. For a catalog of 50 SKUs, that math gets uncomfortable quickly. But the production landscape has changed substantially in the last two years.

Photogrammetry: turning a smartphone into a 3D scanner

Photogrammetry is the process of creating a 3D model by photographing an object from dozens of angles and using software to stitch those images into a 3D mesh. What was once a process requiring expensive camera rigs and specialized software is now achievable with a smartphone and accessible software tools.

The workflow is straightforward: place the product on a turntable or clean surface, capture 40–100 photos covering every angle and height, then process those images through software such as RealityCapture, Meshroom (free and open-source), or Polycam (mobile app). The output is a textured mesh that can be cleaned up, exported to GLB, and submitted to Amazon. For products with relatively simple geometry, which covers most consumer goods, photogrammetry delivers results that meet Amazon’s accuracy requirements at dramatically lower cost than traditional 3D modeling.
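
One way to plan the capture step is to fix a few camera heights and rotate the turntable by a constant step at each one. The sketch below generates such a shot list. The three elevation angles and 24 shots per ring are illustrative assumptions, not a requirement from Amazon or from any of the tools named above.

```python
def turntable_shot_plan(shots_per_ring=24, camera_elevations_deg=(10, 35, 60)):
    """Return (camera elevation, turntable angle) pairs for a full capture pass."""
    step = 360 / shots_per_ring  # degrees to rotate the turntable between shots
    return [
        (elevation, round(i * step, 1))
        for elevation in camera_elevations_deg
        for i in range(shots_per_ring)
    ]


plan = turntable_shot_plan()
print(len(plan), "shots; first three:", plan[:3])
```

With these defaults the plan contains 72 shots at 15° steps, which sits inside the 40–100 photo range mentioned above. Products with recesses or undersides usually need extra shots beyond a regular grid like this.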

CGI and product visualization agencies

For products that don’t photograph well (highly reflective surfaces, transparent materials, very small or intricate objects), computer-generated 3D models built from product specifications and reference images are often the better path. The market for this service has grown considerably alongside Amazon’s 3D feature rollout, and pricing has become more competitive. Specialist agencies offering Amazon-optimized GLB models now exist at multiple price points, with some offering per-SKU packages starting around $75–$150 for simple products.

Manufacturer files: the overlooked shortcut

Many manufacturers — particularly in electronics, furniture, and consumer goods — already have CAD or 3D model files of their products that were used in the design and tooling process. Private label sellers sourcing from manufacturers, especially larger factories, should ask explicitly whether product 3D files are available. These files often need format conversion and texture cleanup before they meet Amazon’s GLB requirements, but the base geometry is already there — saving significant production time and cost.

Amazon’s own AI generation tools

Amazon has been expanding its internal tools for sellers. In 2026, Amazon’s generative AI capabilities, including the Nova Canvas model, can synthesize product imagery, lifestyle images, and virtual try-on composites directly from existing product photos. These AI-generated assets are permitted in secondary images and A+ Content (not in the main product image, where Amazon’s white-background rules still apply). While AI-generated assets don’t yet fully replace professional 3D model uploads for spin views, they represent a growing toolkit for sellers who need to produce high volumes of visual content without per-image photography costs.

A/B Testing Your Visual Assets: The Framework Serious Sellers Use

Investing in 3D models and interactive imagery is a significant decision. The sellers who extract the most value from that investment are the ones who treat it as a controlled experiment rather than a one-time production project. Amazon’s “Manage Your Experiments” tool — available to brand-registered sellers in Seller Central — makes this unusually achievable without external testing platforms.

What you can and cannot test

Manage Your Experiments supports A/B testing on main product images, secondary images, titles, bullet points, and A+ Content. For the purposes of visual testing, the most impactful tests in order of return are:

- Main image variation — This is the highest-leverage test because it directly affects click-through rate from search results. A main image change affects every impression your listing receives. Test angle (3/4 vs. straight-on), background style (pure white vs. contextual lifestyle for categories where it’s permitted), and scale (product filling the frame vs. showing packaging or accessories).

- Secondary image sequence — Once the main image is optimized, test the order and composition of supporting images. Does a lifestyle image as the second image outperform an infographic? Does a size comparison image earlier in the stack reduce returns measurably?

- Spin view vs. no spin view — For sellers who have uploaded a 3D model, testing the before/after impact on unit session percentage (conversion rate) provides clean attribution data for the investment in 3D production.

Test duration and traffic requirements

Amazon recommends running experiments for a minimum of four weeks to achieve statistical significance. Shorter tests — two to three weeks — can provide directional signals on high-traffic ASINs, but should not be treated as conclusive. Manage Your Experiments requires sufficient traffic to generate statistically valid results; low-traffic ASINs may need to run experiments for eight to twelve weeks before the data is reliable. Amazon provides a confidence indicator within the tool that shows when the winning variant has reached statistical significance.
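
For sellers who want to sanity-check exported numbers themselves, a generic two-proportion z-test shows why low-traffic ASINs need longer runs. This is a textbook frequentist check offered as a sketch, not the method behind Amazon’s confidence indicator, and the order and session counts are invented.

```python
import math


def two_proportion_p_value(orders_a, sessions_a, orders_b, sessions_b):
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = orders_a / sessions_a
    rate_b = orders_b / sessions_b
    pooled = (orders_a + orders_b) / (sessions_a + sessions_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sessions_a + 1 / sessions_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / std_err
    return math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))


# Same 10% vs 15% conversion rates, at two different traffic levels.
low_traffic = two_proportion_p_value(10, 100, 15, 100)
high_traffic = two_proportion_p_value(100, 1000, 150, 1000)
print(f"low traffic p = {low_traffic:.3f}, high traffic p = {high_traffic:.4f}")
```

The same observed gap is inconclusive at 100 sessions per variant and clearly significant at 1,000, which is the practical reason a slow ASIN has to run longer.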

The metrics that matter

When evaluating the results of visual experiments on Amazon, focus on three metrics in descending order of priority:

- Unit Session Percentage (conversion rate): The proportion of page visits that result in a purchase. This is the most direct measure of visual impact on buying behavior.

- Click-Through Rate (CTR) from search: For main image tests, this measures how effectively the image draws shoppers from search results to the listing page. An image that generates 20% more clicks at the same conversion rate produces 20% more sales with no change to anything else.

- Return rate over time: This is not visible in Manage Your Experiments directly, but should be tracked manually against visual changes. A main image that dramatically overstates the product’s true appearance may lift short-term conversion while increasing returns, a net negative result that only appears if you’re watching the full picture.
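
The three metrics chain together as impressions × CTR × conversion × (1 − return rate). The sketch below compares two hypothetical main-image variants on that basis. All rates are invented for illustration.

```python
def kept_orders_per_1000_impressions(ctr, conversion_rate, return_rate):
    """Orders that stay sold, per 1,000 search impressions."""
    clicks = 1000 * ctr
    orders = clicks * conversion_rate
    return orders * (1 - return_rate)


# Variant A: accurate image. Variant B: flattering image that earns 20% more clicks
# at the same conversion rate, but triples the return rate.
accurate = kept_orders_per_1000_impressions(ctr=0.020, conversion_rate=0.10, return_rate=0.10)
flattering = kept_orders_per_1000_impressions(ctr=0.024, conversion_rate=0.10, return_rate=0.30)
print(f"accurate: {accurate:.2f}  flattering: {flattering:.2f}")
```

Variant B wins on clicks and gross orders yet ends up with fewer kept orders per 1,000 impressions, which is the pattern an experiment dashboard alone would not reveal.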

The most common A/B testing mistakes

Sellers who run visual experiments on Amazon tend to make a handful of predictable errors. The most costly is testing multiple elements simultaneously — changing the main image, two secondary images, and the title at the same time. When one variant wins, you have no idea which change drove the result. The second most common mistake is ending experiments early when one variant is trending ahead — Amazon’s confidence indicators exist for a reason, and early results frequently reverse as more data comes in. Third is ignoring segment differences: a main image that converts well for mobile shoppers may underperform for desktop shoppers, and vice versa.

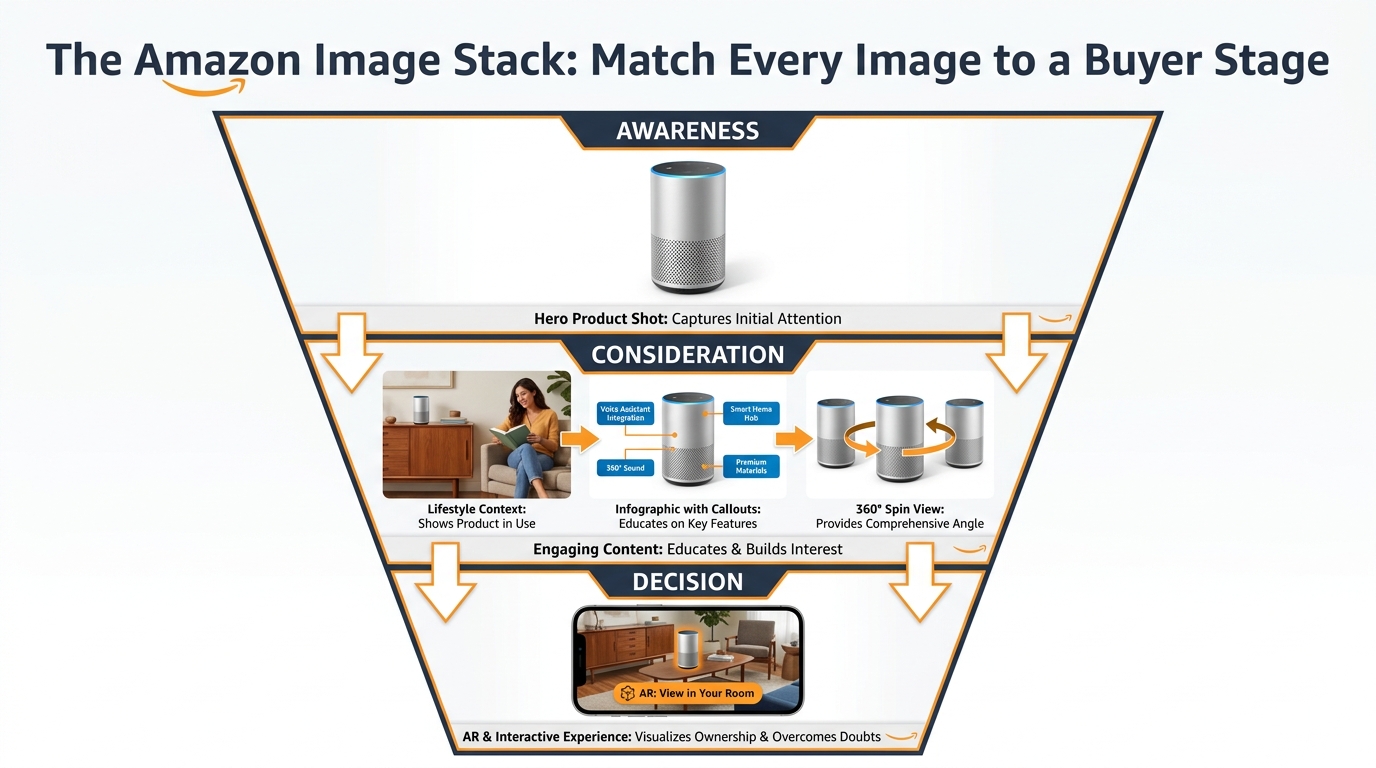

Building an Image Stack That Converts at Every Stage of the Funnel

One of the most useful frameworks for thinking about Amazon product imagery is the “image stack” — the idea that different images in your listing’s gallery serve different functions for shoppers at different stages of their decision process. A listing that treats all nine image slots as equivalent is leaving conversion on the table. A listing built with a deliberate stack converts at every stage.

Image 1 (Main Image): The click-driver

This image has one job: stop the scroll and earn the click from a search results page. Amazon’s rules are strict — pure white background (RGB 255, 255, 255), no text, no graphics, no props, product occupying at least 85% of the frame. Within those constraints, the optimization levers are angle, lighting, and the visual hierarchy of the product itself. Professional lighting that creates depth and dimension consistently outperforms flat studio lighting. A 3/4 angle that shows depth and three-dimensionality typically outperforms a straight-on flat view. Research from eBay Labs found that listings with five to eight high-quality images see conversion lifts of up to 65% over listings with one or two images — and it starts with the main image earning the click.
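
The 85% rule can be roughly pre-checked in code. The sketch below assumes the image has already been decoded into rows of (r, g, b) tuples by whatever image library is in use. It also assumes one particular reading of the rule: that the longest side of the product’s bounding box should span at least 85% of the frame. Both are assumptions for illustration, not Amazon’s published test.

```python
WHITE = (255, 255, 255)


def product_fill_ratio(pixels):
    """Longest bounding-box side of non-white pixels, as a fraction of the frame.

    `pixels` is a list of rows, each row a list of (r, g, b) tuples.
    """
    height = len(pixels)
    width = len(pixels[0])
    rows = [y for y, row in enumerate(pixels) if any(p != WHITE for p in row)]
    cols = [x for x in range(width)
            if any(pixels[y][x] != WHITE for y in range(height))]
    if not rows:
        return 0.0  # the image is entirely white
    box_height = rows[-1] - rows[0] + 1
    box_width = cols[-1] - cols[0] + 1
    return max(box_width / width, box_height / height)


def meets_fill_rule(pixels, minimum=0.85):
    return product_fill_ratio(pixels) >= minimum
```

Real photographs contain near-white shadows and compression noise, so a practical version would compare against a tolerance rather than exact white.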

Images 2–3: The orientation and detail images

Once a shopper clicks through to the listing, they need to build a comprehensive mental picture of the product. Images two and three should systematically cover angles and details that the main image could not. For most products, this means a back/side view, a close-up of the highest-value detail (a zipper, a connector port, a distinctive design element), or a scale reference shot that shows the product next to a hand, a common household object, or a labeled dimension overlay.

Images 4–5: The lifestyle and context images

Lifestyle images serve a different psychological function than product detail images. They don’t answer “what does this look like?” — they answer “can I picture this in my life?” Showing a product in a realistic, aspirational real-world setting gives shoppers permission to project themselves into ownership. A well-executed lifestyle image for a coffee mug is not a photograph of a coffee mug. It is a photograph of a morning — the mug is just in it. These images work particularly hard for home goods, apparel, fitness equipment, and any product with a strong lifestyle association.

Images 6–7: The infographic images

Amazon allows text, callouts, comparison charts, and labeled diagrams in secondary images (not the main image). These slots are best used for information that is difficult to convey in bullet points alone — size charts, compatibility guides, material comparisons, before/after results, or feature callouts with measurements. Mobile shoppers who don’t scroll to read bullet points often do engage with well-designed infographic images. Keeping text mobile-readable (minimum 16pt equivalent when viewed on a phone) is critical.

Images 8–9: The trust and social proof images

The final images in the stack can carry review highlights, certifications, brand story elements, or comparison grids against competing products (where Amazon policies permit). For newer brands or products in a trust-sensitive category (supplements, baby products, safety equipment), images that communicate third-party testing, material sourcing, or manufacturing standards do real conversion work in this position.

Where the spin view fits in the stack

When a 3D model is approved, Amazon adds the interactive spin view as an additional option within the image gallery — typically surfaced as an overlay on the main image or as a separate tab. It doesn’t replace any of the nine standard image slots. Think of it as image 10: a bonus interactive layer that sits on top of the static gallery. Shoppers who engage with the spin view demonstrate significantly higher purchase intent, making the spin view most valuable for mid-funnel shoppers who are seriously considering the product but not yet committed.

What’s Coming Next: Amazon Nova Canvas, AI Try-On, and the 2026 Visual Stack

The landscape of product visualization on Amazon is moving faster in 2026 than at any point in the platform’s history. Understanding where the technology is heading allows sellers to make smarter decisions about where to invest now and what to build toward.

Amazon Nova Canvas and AI-generated product imagery

Amazon’s Nova Canvas generative AI model is available through AWS and increasingly integrated into seller-facing tools. Its capabilities relevant to product sellers include generating lifestyle background images around existing product shots (placing a product into a kitchen scene, a bedroom, or an outdoor setting without a physical photoshoot), creating color and variant images from a single physical product photograph, and — in its most advanced application — generating virtual try-on composites that show apparel or accessories on a model without a live photoshoot.

These AI-generated images are explicitly permitted in Amazon listings as secondary images and in A+ Content, as of 2026 guidelines. They are not permitted as the main product image, which must still represent the actual physical product accurately. For sellers managing large catalogs with many color variants, the ability to generate secondary lifestyle images at scale using Nova Canvas — rather than paying for individual photoshoots per variant — represents a significant operational cost reduction.

The Rufus AI layer and visual search

Amazon’s Rufus AI shopping assistant, which became a significant part of the Amazon shopping experience in 2025, introduces a new dimension to visual content strategy. Data from the holiday quarter of 2025 showed that Rufus-assisted shopping sessions converted at 3.5 times the rate of non-assisted sessions. What this means for visual content: Rufus can engage with product images, A+ Content, and 3D model information when generating responses to shopper queries. Listings with richer visual assets give Rufus more accurate and detailed information to draw from, which translates into more confident and specific recommendations to shoppers asking questions like “show me sofas under $500 that would work in a small living room.”

The trajectory of AR in Amazon’s roadmap

Amazon has been incrementally expanding AR feature eligibility since “View in Your Room” launched in 2017, and the pace of that expansion is accelerating. Fashion categories began receiving category-specific virtual try-on features in 2022, and that coverage has continued to grow. The direction of travel is clear: Amazon intends for AR visualization to be a standard feature across most high-consideration product categories, not a specialty feature for furniture alone.

Sellers who invest in building accurate 3D models today are positioning their catalogs for multiple future feature rollouts, not just the current set of AR capabilities. A 3D model created and approved today becomes the foundation for whatever Amazon’s AR feature set looks like in 2027 and beyond — including features that don’t exist yet.

The competitive window is narrowing

The adoption curve for 3D models on Amazon follows the same pattern as virtually every new seller capability: early adopters gain disproportionate benefits while the feature is underused, then those benefits compress as adoption becomes mainstream and the feature becomes a parity expectation rather than a differentiator. Right now, 3D models and interactive spin views are genuinely differentiating. A listing with a spin view badge in a category where competitors have none stands out visibly. A “View in Your Room” badge on a furniture listing is still unusual enough that shoppers notice and engage with it.

That window will not stay open indefinitely. The sellers who build this capability into their listing infrastructure in 2026 will have the advantage of experience, established workflows, and catalog coverage before it becomes a standard baseline expectation.

The Practical Roadmap: Prioritizing Your Visual Investment

For sellers looking at their catalog and trying to figure out where to start, the decision framework is straightforward. Not every ASIN warrants the investment in a 3D model. The right sequence is to audit, prioritize, produce, and iterate.

Step 1: Audit your current visual assets against the benchmark

Pull your unit session percentage (conversion rate) data from Seller Central for every ASIN in your catalog. Sort by traffic volume (highest-traffic listings first) and identify listings with conversion rates below your category benchmark. Amazon’s average conversion rate across categories runs 10–20%, with high performers exceeding 25%. Listings with significant traffic but below-average conversion are the highest-priority candidates for visual improvement.

For each of those priority ASINs, answer three questions: Does this product have a spatial context problem (scale, fit, placement)? Is it in a category where interactive imagery is eligible? Does it currently have fewer than six substantive images? A “yes” to any two of those three flags an ASIN for immediate visual investment.
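The audit above can be sketched in a few lines of Python. This is a minimal illustration, not a Seller Central integration: the `AsinAudit` fields, the `min_sessions` traffic floor, the benchmark value, and the sample ASINs are all hypothetical placeholders that you would replace with figures exported from your own reports.

```python
from dataclasses import dataclass


@dataclass
class AsinAudit:
    asin: str
    sessions: int                  # traffic over the reporting window
    unit_session_pct: float        # conversion rate, in percent
    spatial_context_problem: bool  # scale, fit, or placement is hard to judge
    interactive_eligible: bool     # category supports interactive imagery
    image_count: int               # substantive images currently on the listing


def flag_for_visual_investment(rows, benchmark_pct, min_sessions):
    """Return high-traffic, under-converting ASINs that pass the two-of-three test."""
    flagged = []
    # Highest-traffic listings first, as described in Step 1.
    for row in sorted(rows, key=lambda r: r.sessions, reverse=True):
        if row.sessions < min_sessions or row.unit_session_pct >= benchmark_pct:
            continue  # too little traffic, or already at or above benchmark
        yes_count = sum([
            row.spatial_context_problem,
            row.interactive_eligible,
            row.image_count < 6,
        ])
        if yes_count >= 2:
            flagged.append(row.asin)
    return flagged


# Made-up sample rows for illustration only.
catalog = [
    AsinAudit("B0EXAMPLE01", 4200, 8.5, True, True, 4),
    AsinAudit("B0EXAMPLE02", 9100, 22.0, True, True, 3),
    AsinAudit("B0EXAMPLE03", 6500, 9.0, False, False, 7),
    AsinAudit("B0EXAMPLE04", 7800, 11.0, False, True, 5),
]
priority = flag_for_visual_investment(catalog, benchmark_pct=15.0, min_sessions=1000)
print(priority)
```

With these sample rows, the second ASIN is skipped because it already converts above the assumed benchmark, and the third is skipped because it answers "yes" to none of the three questions.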

Step 2: Fill the static image stack first

Before investing in 3D model production, ensure every priority ASIN has a complete, high-quality static image stack. The data shows that moving from one or two images to six or more high-quality images delivers conversion improvements that rival or exceed the benefit of adding a spin view in isolation. The image stack is the foundation; interactive features are a multiplier on top of it.

Step 3: Prioritize 3D models by category and revenue concentration

Once the static stack is solid, prioritize 3D model production for your top revenue ASINs in categories where AR and spin views have the highest impact. Start with your two or three best-selling products in home goods, furniture, footwear, or electronics accessories — categories where the conversion data is clearest and the ROI is fastest. Use the learnings from those first submissions to refine your production workflow before scaling to a larger portion of your catalog.
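One way to turn that prioritization into a repeatable shortlist is sketched below. The category labels mirror the four named above, but the dictionary keys (`asin`, `category`, `revenue`), the default limit of three, and the sample figures are assumptions made for illustration.

```python
HIGH_IMPACT_CATEGORIES = {
    "home goods",
    "furniture",
    "footwear",
    "electronics accessories",
}


def pick_first_3d_candidates(asins, limit=3):
    """Pick the top-revenue ASINs within the high-impact categories."""
    eligible = [a for a in asins if a["category"] in HIGH_IMPACT_CATEGORIES]
    ranked = sorted(eligible, key=lambda a: a["revenue"], reverse=True)
    return [a["asin"] for a in ranked[:limit]]


# Made-up sample rows for illustration only.
sample = [
    {"asin": "B0EXAMPLE01", "category": "furniture", "revenue": 48000},
    {"asin": "B0EXAMPLE02", "category": "grocery", "revenue": 91000},
    {"asin": "B0EXAMPLE03", "category": "footwear", "revenue": 63000},
    {"asin": "B0EXAMPLE04", "category": "home goods", "revenue": 12000},
    {"asin": "B0EXAMPLE05", "category": "furniture", "revenue": 30000},
]
first_batch = pick_first_3d_candidates(sample)
print(first_batch)
```

The highest-revenue sample ASIN is excluded here because its category is not on the high-impact list, which is the point of filtering by category before ranking by revenue.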

Step 4: Run controlled experiments and reinvest

Use Manage Your Experiments to measure the actual conversion impact of new visual assets on each ASIN. Document the results — your unit session percentage before and after, your return rate, and your click-through rate from search. Use that data to build a business case for expanded 3D production across a wider set of ASINs, and to identify which categories and product types in your specific catalog respond most strongly to interactive imagery.
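A simple record of each experiment might look like the sketch below. It computes the relative change in each documented metric. It does not replace the statistics that Manage Your Experiments reports. The metric names and all numbers are illustrative placeholders, not measured results.

```python
def relative_change_pct(before, after):
    """Relative change from before to after, expressed in percent."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100


def summarize_experiment(before, after):
    """Relative change for every metric recorded in the baseline."""
    return {
        metric: round(relative_change_pct(before[metric], after[metric]), 1)
        for metric in before
    }


# Placeholder figures for illustration only.
baseline = {"unit_session_pct": 10.0, "return_rate_pct": 8.0, "search_ctr_pct": 0.5}
with_3d = {"unit_session_pct": 11.5, "return_rate_pct": 6.0, "search_ctr_pct": 0.55}

summary = summarize_experiment(baseline, with_3d)
print(summary)
```

Recording relative rather than absolute change makes results comparable across ASINs with very different baseline conversion rates, which helps when building the business case for wider 3D production.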

Conclusion: The Sellers Who Win on Imagery Win on the Fundamentals

It is easy to treat product photography as a cost of doing business: a box to check during listing setup, a budget line to minimize. The data tells a different story. In a marketplace where 92% of shoppers cite imagery as a top conversion factor, where a 22% conversion lift from interactive views is a documented and reproducible outcome, and where up to 40% of the return problem traces directly back to visual failures, imagery is not a cost. It is among the investments with the strongest compounding returns a seller can make.

The specific opportunity in 2026 is sharper than it has ever been. Amazon’s transition away from traditional 360° photography toward 3D models created a knowledge gap that filtered out many sellers who weren’t paying attention. The sellers who do understand how the system works today — the GLB file requirements, the Seller Central upload path, the category eligibility for “View in Your Room,” the A/B testing framework for measuring impact — are operating in a window where this capability is still genuinely differentiating rather than table stakes.

That window will close. The sellers who build these capabilities into their standard listing workflow now will not only capture the conversion benefits today but also be positioned for whatever Amazon’s visual commerce infrastructure looks like next year and the year after that, because the 3D models they create today are the foundation for every AR feature Amazon has not yet launched.

The camera cannot replace the in-store experience entirely. But a well-built 3D model on an Amazon listing comes considerably closer than anything that came before it. The question is not whether your competitors will eventually figure this out. The question is whether you figure it out first.

Key Takeaways

- Amazon discontinued traditional 360° photography in January 2024. The interactive spin view now requires a 3D model in GLB/GLTF format.

- 360°/interactive imagery lifts conversion rates by 22–27% on average, with furniture seeing lifts of up to 250% in AR-specific studies.

- 3D models and AR visualization reduce return rates by up to 40%, attacking one of the most significant hidden cost drivers for FBA sellers.

- Brand Registry enrollment is required to upload 3D models. The file must be GLB or GLTF format, max 1 million triangles, with 2–10 reference photos submitted alongside.

- “View in Your Room” is available for floor-standing, tabletop, and wall-mounted products across major Amazon marketplaces, and averages a 9% sales improvement per Amazon’s own data.

- Use Manage Your Experiments to measure conversion impact before rolling out 3D production across your full catalog.

- AI tools such as Amazon Nova Canvas can generate lifestyle imagery, which Amazon now permits in secondary image slots and A+ Content. For variant-heavy listings, this is a significant catalog-scale cost reduction.

- The competitive window for 3D model differentiation is open now, and will narrow as adoption becomes mainstream.