The data scientist finishes training the model on a Tuesday. Twelve months later, it still hasn’t reached production.

This isn’t a story about a dysfunctional team or a poorly scoped project. It’s one of the most common trajectories in enterprise AI — and it happens at companies with talented engineers, meaningful budgets, and real executive buy-in. The model exists. The results look good. And yet, somewhere between the Jupyter notebook and the production API endpoint, everything stalls.

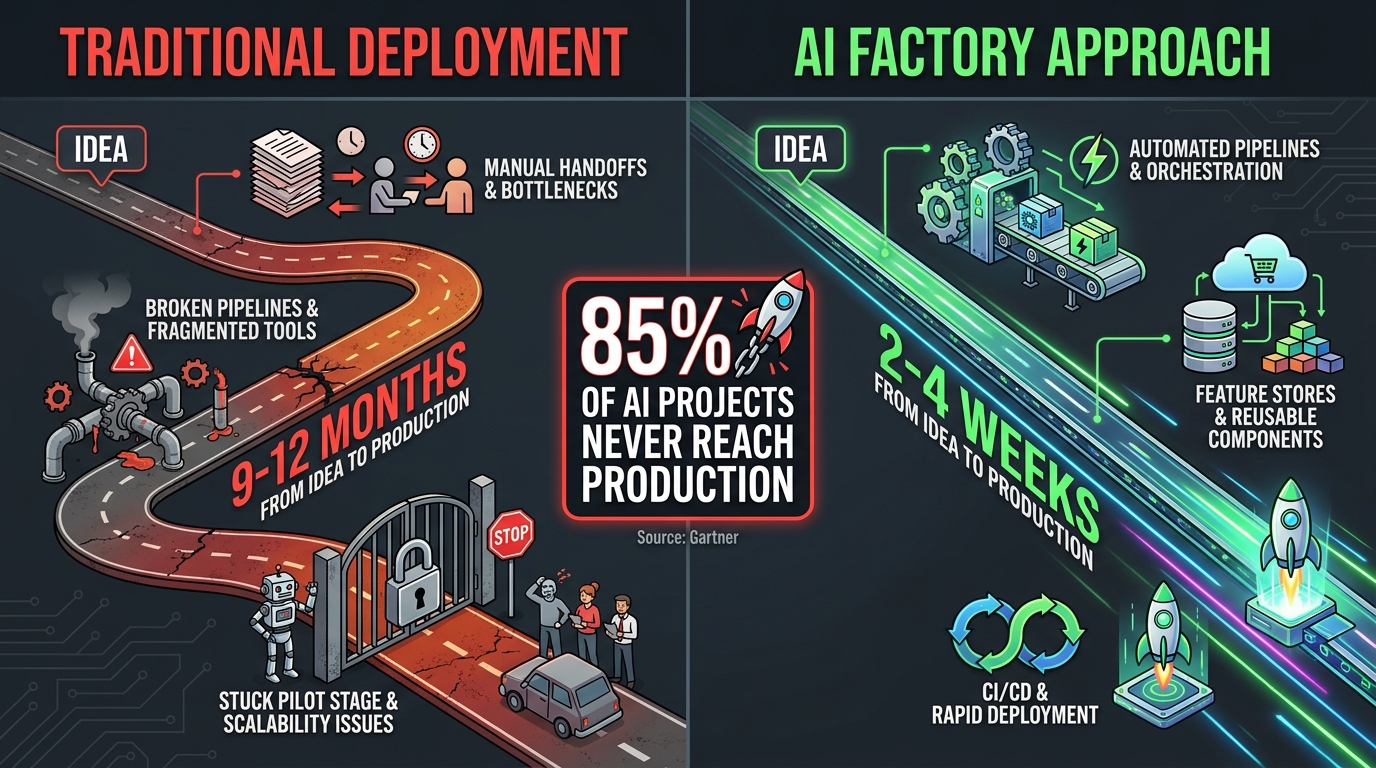

According to Gartner, more than 85% of AI and machine learning projects never make it to production. A separate survey of 650 enterprise leaders found that while 78% are running AI agent pilots, only 14% have successfully scaled those pilots into production systems. The average pilot stalls after 4.7 months — not because the model failed, but because the infrastructure, processes, and organizational structures needed to carry it across the finish line simply didn’t exist.

The companies closing that gap in 2026 aren’t doing it by hiring more data scientists. They’re doing it by building AI factories: purpose-built production systems that treat model deployment the same way a manufacturing plant treats product output — with repeatable processes, standardized tooling, continuous quality control, and the discipline to ship at speed without sacrificing reliability.

This post breaks down exactly how those factories are structured, what each layer of the stack actually does, where most teams go wrong, and what it genuinely takes to get from model training to live inference in days rather than months. No hype, no vague frameworks — just the architecture, the decisions, and the tradeoffs that determine whether your AI investments produce working software or expensive slide decks.

What an AI Factory Actually Is (and What It Isn’t)

The term “AI factory” gets used loosely, which causes real confusion about what you’re actually building. At one end of the spectrum, vendors use it to describe their compute hardware — NVIDIA’s Vera Rubin NVL72 rack systems, for instance, are marketed as AI factories because they produce tokens the way factories produce units. At the other end, consultants use it to describe any structured approach to building AI at scale.

For the purposes of this post, an AI factory is the combination of infrastructure, tooling, processes, and team structures that allows an organization to repeatedly take a trained model from development into production — and then monitor, update, and retire it — without heroic individual effort every time.

The Manufacturing Analogy Is More Literal Than You Think

MIT’s work on the AI factory concept, developed by Thomas Davenport and others, draws a direct parallel to industrial manufacturing. In a traditional factory, you don’t rebuild the assembly line every time you want to produce a new product variant. You have a line, you configure it for the variant, and it runs. The marginal cost of the second product is dramatically lower than the first because the infrastructure already exists.

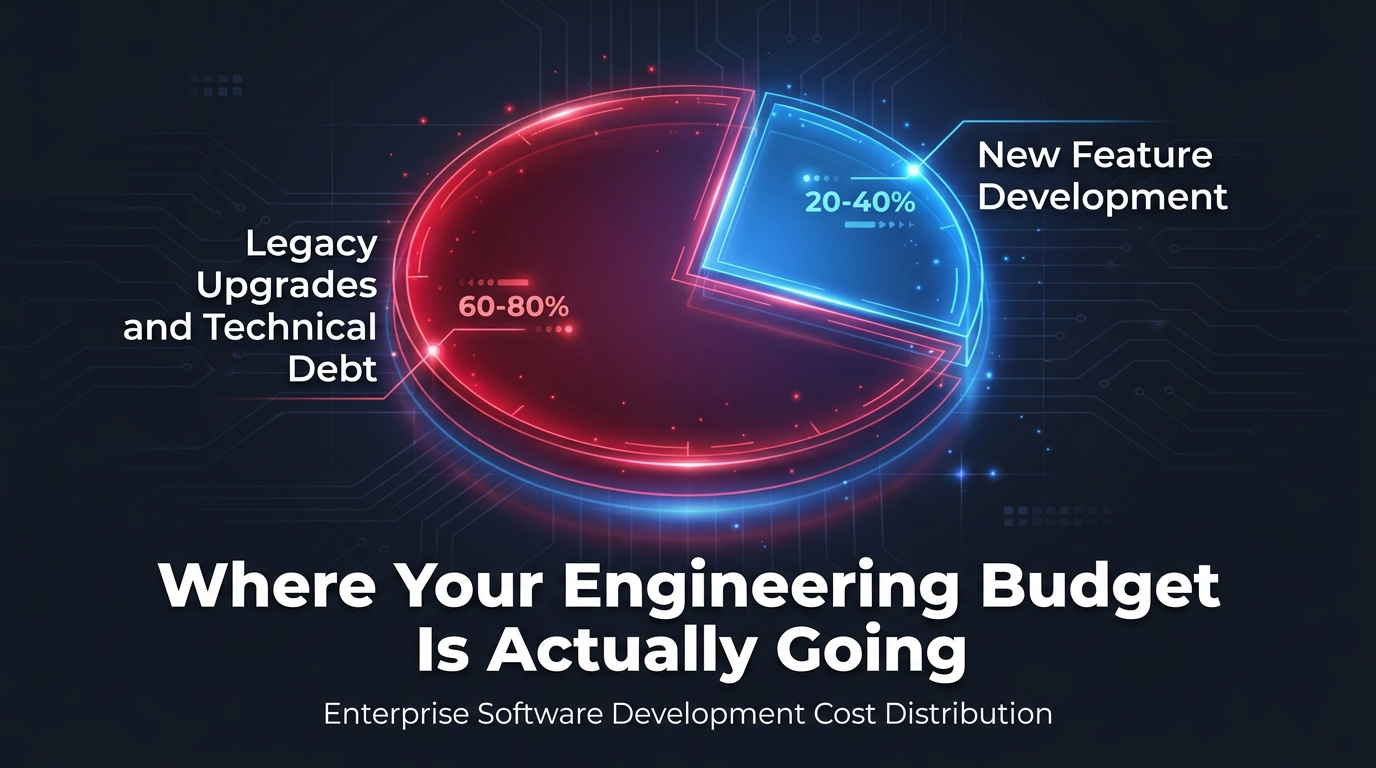

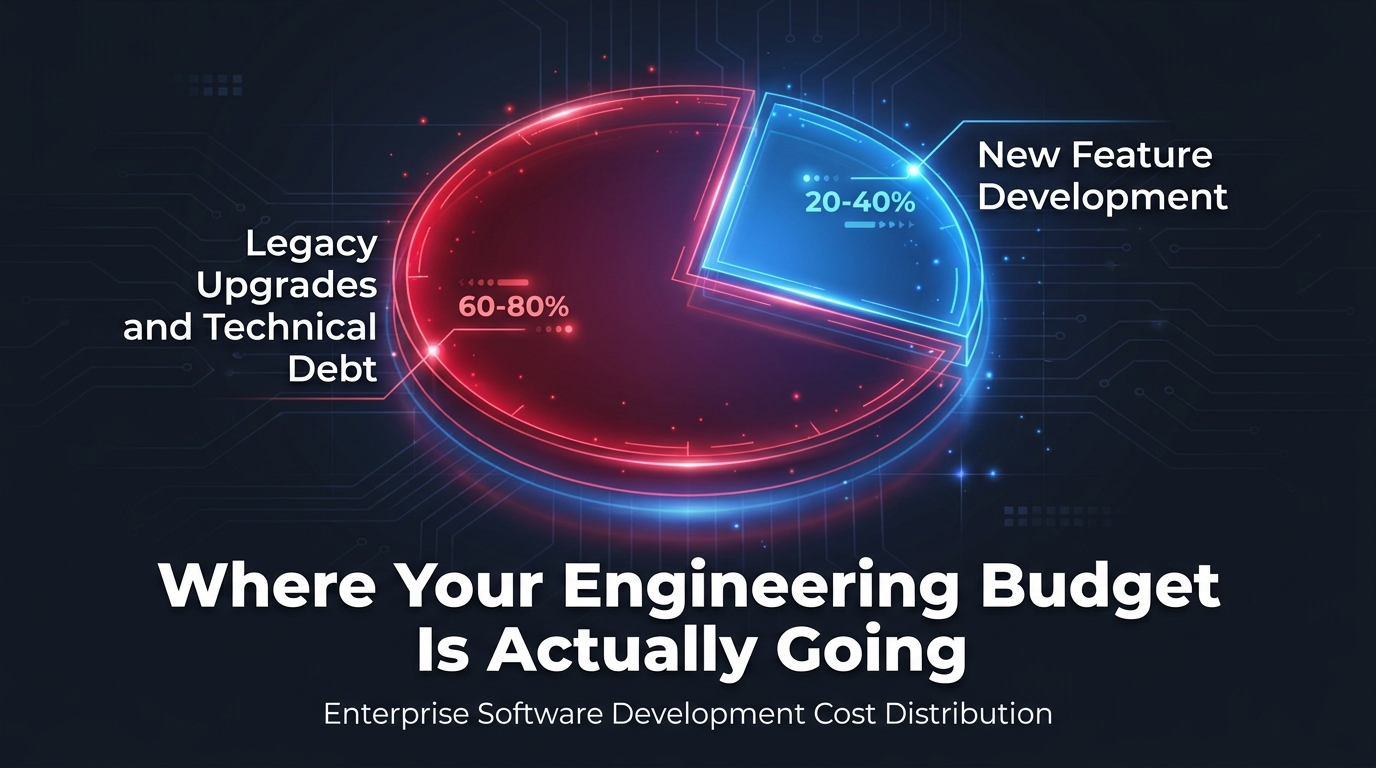

This is exactly what most AI teams are missing. They treat every model deployment as a greenfield project — building new infrastructure, writing new monitoring code, manually coordinating handoffs between data engineering, data science, and DevOps. Each deployment costs roughly the same as the last because nothing is being standardized and reused.

A functioning AI factory flips that equation. The MLOps platform is already there. The feature store is already there. The model registry is already there. The CI/CD pipeline that runs validation checks, pushes artifacts, and handles canary releases is already there. When a new model is ready, the team plugs it into a system that already knows how to handle it.

What “Scale” Actually Means Here

Scale in an AI factory context doesn’t just mean “big compute.” It means managing hundreds or thousands of models simultaneously — each with its own data dependencies, drift monitoring requirements, compliance constraints, and business stakeholders. Organizations like JPMorgan reportedly run thousands of individual AI models across their operations. That number is unmanageable with bespoke deployment processes. It requires industrial-grade tooling with centralized visibility and consistent governance.

The MLOps market reflects this urgency: currently valued at approximately $4.39 billion in 2026, it’s projected to reach $89.91 billion by 2034 — a compound annual growth rate of 45.8%. That’s not a tooling trend; it’s a fundamental shift in how AI gets built.
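As a quick sanity check on those figures, assuming 2026 is the base year and 2034 the end year (eight compounding periods), the implied growth rate works out as:

```latex
\[
\text{CAGR} = \left(\frac{89.91}{4.39}\right)^{1/8} - 1 \approx 20.48^{0.125} - 1 \approx 0.46
\]
```

That is consistent with the quoted 45.8% rate.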

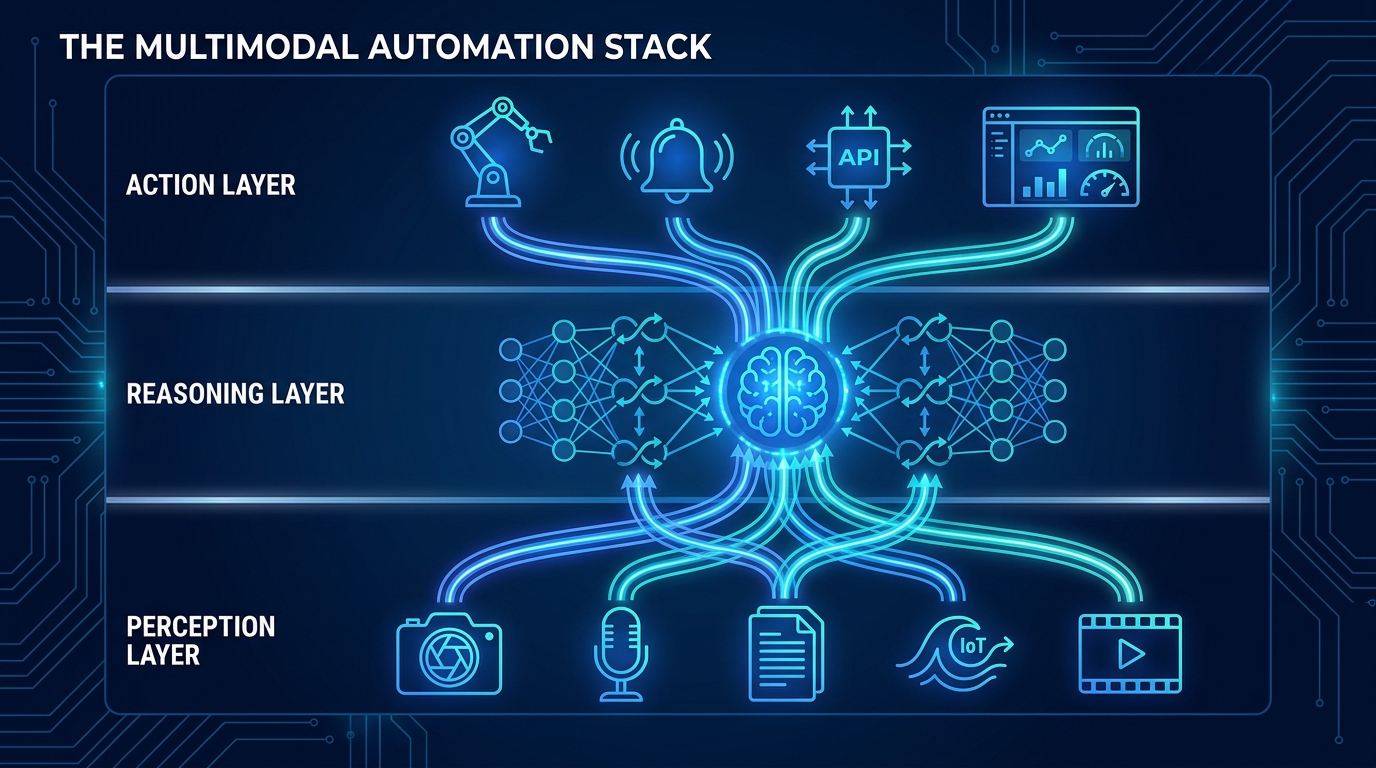

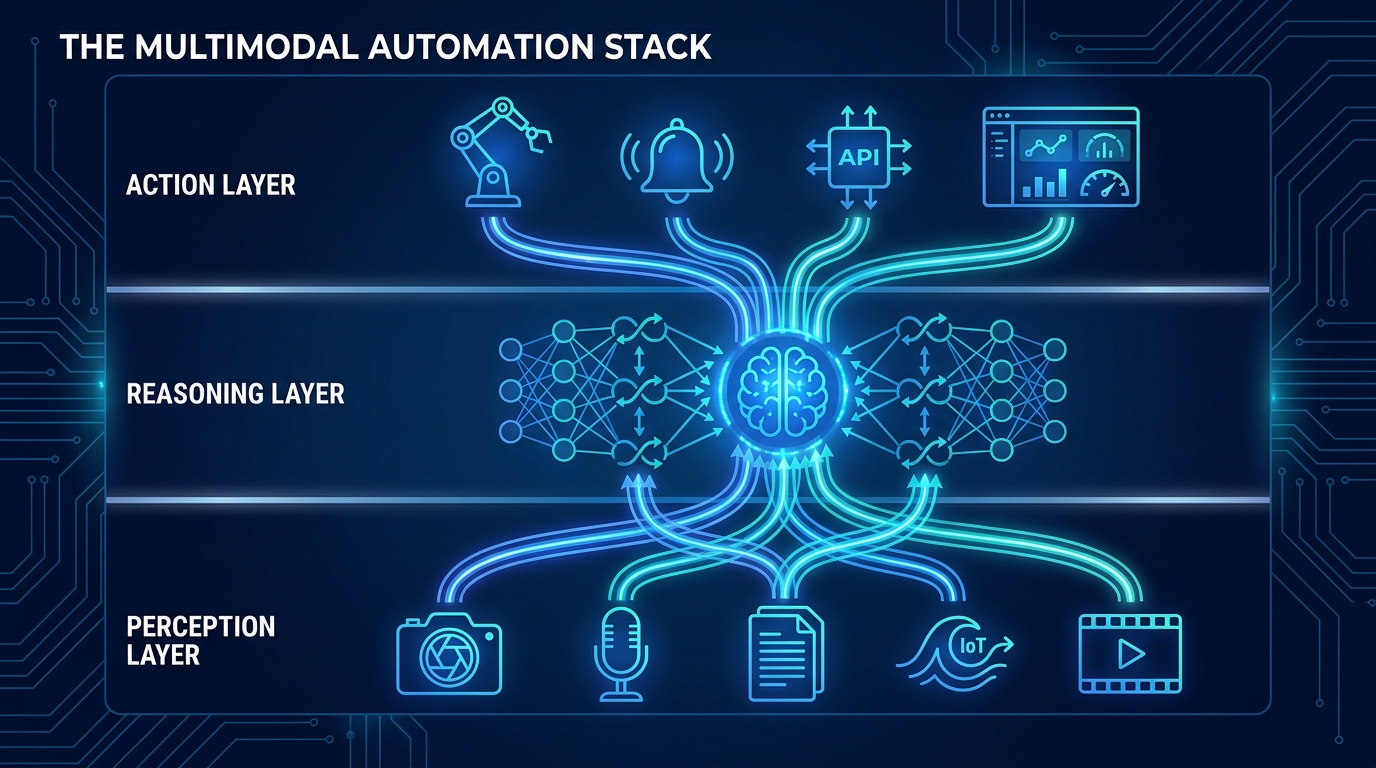

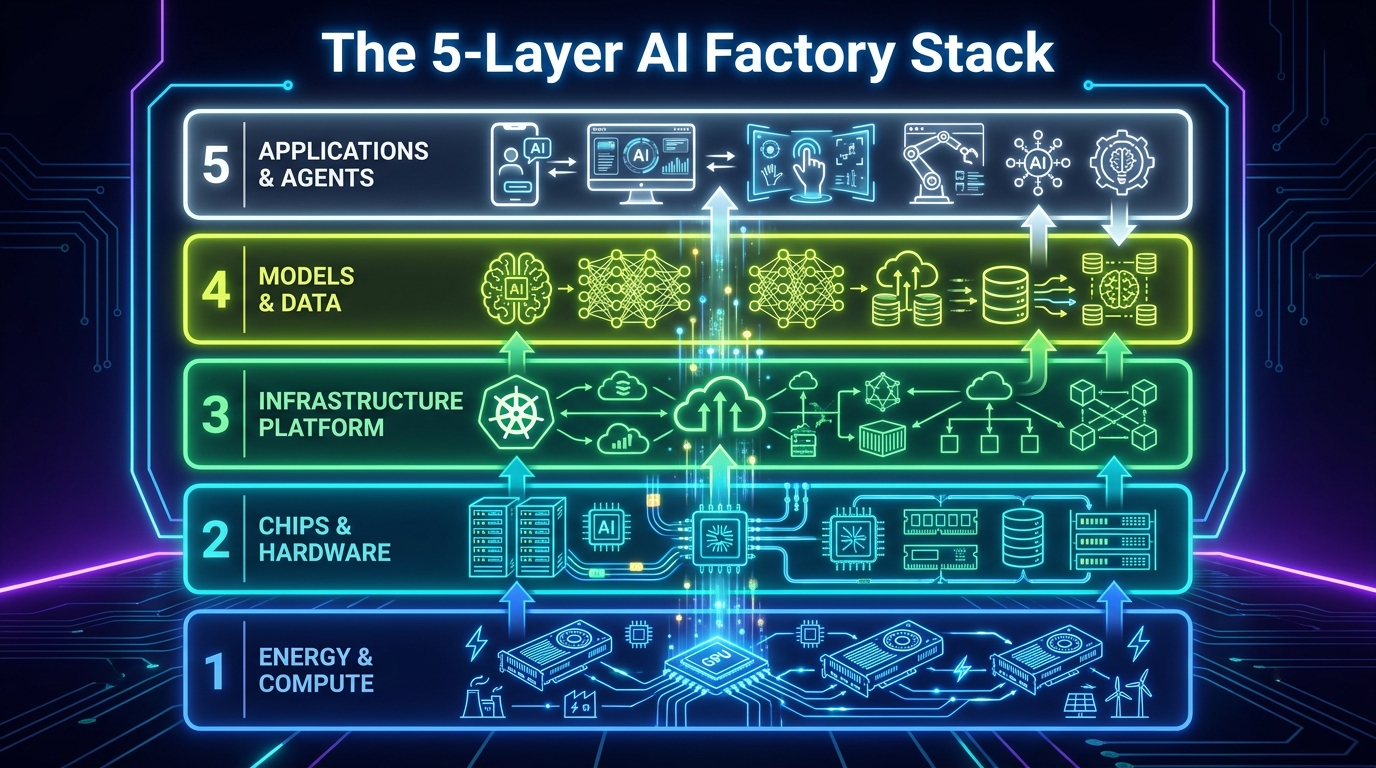

The Five-Layer Stack You Must Build Before Writing Model Code

One of the most persistent mistakes in enterprise AI is treating the model as the primary engineering challenge. The model is often the easiest part. The hard work is building the system around it — and that system has distinct layers that each need to be deliberately designed.

NVIDIA CEO Jensen Huang framed this at Davos in 2026 as a “five-layer cake” — though the layers he described are most applicable to hyperscale compute environments. For enterprise teams building internal AI factories, the layering looks somewhat different in practice, and understanding the distinction matters when scoping what you actually need to build.

Layer 1: Compute and Infrastructure

This is the physical and virtual foundation — the GPU clusters, cloud instances, Kubernetes orchestration, and networking that everything else runs on. For many enterprises, this starts with cloud providers (AWS SageMaker, Google Vertex AI, Azure ML) rather than on-premise hardware. The critical design decision here isn’t which cloud — it’s whether your infrastructure is defined as code.

Infrastructure-as-Code (IaC) using tools like Terraform, Pulumi, or CloudFormation ensures that your compute environment is reproducible, version-controlled, and not dependent on manual configuration steps that vary between environments. Without IaC, the “it works on my machine” problem simply moves from the developer’s laptop to the staging cluster.

Layer 2: Data Infrastructure

The data layer is where most AI factories stall before they’re even built. According to Deloitte’s 2026 manufacturing outlook, 78% of enterprises automate less than half of their critical data transfers. Legacy systems — ERP platforms, operational databases, flat-file exports — operate in isolation from the ML training pipeline, which means every new model project starts with a multi-month data integration project.

A functioning data layer includes not just raw data ingestion but also data validation (automated schema and quality checks using tools like Great Expectations), data versioning (DVC or similar), and lineage tracking so that every model can trace exactly which data version it was trained on. This last point is non-negotiable for compliance — and we’ll return to it when discussing governance.
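To make the validation step concrete, here is a minimal sketch in plain Python. It does not use the Great Expectations API; the function name `validate_batch`, the dictionary-based schema format, and the 5% null-rate limit are all assumptions chosen for illustration.

```python
def validate_batch(rows, schema, max_null_rate=0.05):
    """Return a list of failure messages; an empty list means the batch passed.

    rows   -- list of dicts, one per record (hypothetical input format)
    schema -- dict mapping column name to the expected Python type
    """
    if not rows:
        return ["batch is empty"]

    failures = []
    for column, expected_type in schema.items():
        values = [row.get(column) for row in rows]

        # Quality check: too many missing values in this column.
        null_rate = sum(v is None for v in values) / len(rows)
        if null_rate > max_null_rate:
            failures.append(
                f"{column}: null rate {null_rate:.0%} exceeds {max_null_rate:.0%}"
            )

        # Schema check: every non-null value must have the expected type.
        bad = [v for v in values if v is not None and not isinstance(v, expected_type)]
        if bad:
            failures.append(
                f"{column}: {len(bad)} value(s) not of type {expected_type.__name__}"
            )

    # Schema check: columns the pipeline was never told about.
    unexpected = set().union(*(row.keys() for row in rows)) - set(schema)
    if unexpected:
        failures.append(f"unexpected columns: {sorted(unexpected)}")
    return failures
```

A pipeline step would typically stop the run whenever the returned list is non-empty, and store the list alongside the data version for lineage.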

Layer 3: Feature Engineering and Storage

Feature stores are the underrated backbone of any mature AI factory. A feature store is a centralized repository for computed features — the engineered inputs to your models — that serves both the offline training pipeline and the online serving infrastructure from a single source. This eliminates one of the most common sources of production failures: training-serving skew, where features computed during training differ from features computed at inference time because two separate teams wrote two separate pieces of code.

Uber’s Michelangelo system popularized the feature store concept. Databricks, Feast, Tecton, and several cloud-native options have since made it accessible for enterprise teams without the need to build from scratch. The key benefit isn’t just consistency — it’s reusability. Once a feature has been computed and stored, any team in the organization can use it for their model without rebuilding the computation logic.
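The core idea behind avoiding training-serving skew can be sketched without any feature store product: define each feature once and call that single definition from both paths. The `Order` type, the feature name `avg_order_value_30d`, and the 30-day window below are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Order:
    customer_id: str
    amount: float
    placed_at: datetime


def avg_order_value(orders, as_of, window_days=30):
    """Single feature definition shared by the training and serving paths."""
    window = timedelta(days=window_days)
    # Only orders placed at or before `as_of` and inside the window count,
    # which keeps the offline computation point-in-time correct.
    recent = [
        o.amount for o in orders
        if timedelta(0) <= as_of - o.placed_at < window
    ]
    return sum(recent) / len(recent) if recent else 0.0


def build_training_row(orders, label_time):
    # Offline path: evaluate the feature as of the label timestamp.
    return {"avg_order_value_30d": avg_order_value(orders, as_of=label_time)}


def build_serving_row(orders, request_time):
    # Online path: same function, evaluated at request time.
    return {"avg_order_value_30d": avg_order_value(orders, as_of=request_time)}
```

Because both builders call the same function, a change to the window or the aggregation lands in training and serving at the same time. A real feature store adds storage, backfills, and low-latency lookup on top of this principle.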

Layer 4: Model Training and Experimentation

This is the layer most data scientists already have some version of. Experiment tracking tools — MLflow, Weights & Biases, Neptune — log hyperparameters, metrics, and artifacts so that runs are reproducible and results are comparable. The factory-level discipline here is ensuring that every training run is logged, not just the ones that look promising, and that experiment configuration is version-controlled alongside the code.

Layer 5: Deployment, Serving, and Monitoring

The final layer is where models become products. This includes the model registry, the deployment pipelines, the serving infrastructure (REST endpoints, batch jobs, streaming processors), and the monitoring systems that watch for performance degradation, data drift, and concept drift in production. This layer is where most enterprise AI factories are weakest — and it’s the subject of most of the remaining sections of this post.

The Model Registry: The Piece Most Teams Skip Until It’s Too Late

Ask most data science teams where their production models are, and you’ll get a range of answers: “in the S3 bucket,” “in the repo somewhere,” “ask DevOps,” “I think it’s the file named model_final_v3_ACTUAL_FINAL.pkl.” This is not hyperbole. It is the standard state of model management in organizations that haven’t built a proper model registry.

A model registry is a centralized versioned store for trained model artifacts, including their associated metadata: training data version, hyperparameters, evaluation metrics, who approved deployment, which environment they’re deployed to, and their current status (staging, production, deprecated). Think of it as Git for your models — without it, you have no meaningful version control, no audit trail, and no way to safely roll back when something goes wrong in production.
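As a rough illustration of the data a registry holds, here is a minimal in-memory sketch. It is not the API of MLflow or any managed registry; the class names, stage labels, and method names are assumptions, and a real registry would persist this state and enforce access control.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelVersion:
    name: str
    version: int
    artifact_uri: str
    data_version: str
    metrics: dict
    stage: str = "staging"  # staging | production | deprecated
    approved_by: Optional[str] = None


class ModelRegistry:
    def __init__(self):
        self._versions = {}  # model name -> list of ModelVersion, oldest first

    def register(self, name, artifact_uri, data_version, metrics):
        versions = self._versions.setdefault(name, [])
        entry = ModelVersion(name, len(versions) + 1, artifact_uri, data_version, metrics)
        versions.append(entry)
        return entry

    def production(self, name):
        # At most one version per model is in the production stage.
        return next(
            (v for v in self._versions.get(name, []) if v.stage == "production"),
            None,
        )

    def promote(self, name, version, approved_by):
        current = self.production(name)
        if current is not None:
            current.stage = "deprecated"
        target = self._versions[name][version - 1]
        target.stage = "production"
        target.approved_by = approved_by  # recorded for the audit trail
        return target

    def rollback(self, name, approved_by):
        current = self.production(name)
        if current is None or current.version == 1:
            raise ValueError("no earlier version to roll back to")
        return self.promote(name, current.version - 1, approved_by)
```

Even this small structure answers the questions from the start of this section: which version is in production, what data it was trained on, and who approved it.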

What a Model Registry Enables

The practical impact of a model registry goes beyond organization. When a model registry is integrated with your CI/CD pipeline and serving infrastructure, several critical capabilities become possible:

- Reproducibility: Any model version can be rebuilt from its stored training configuration and data pointer. This is essential for debugging production incidents and satisfying audit requirements.

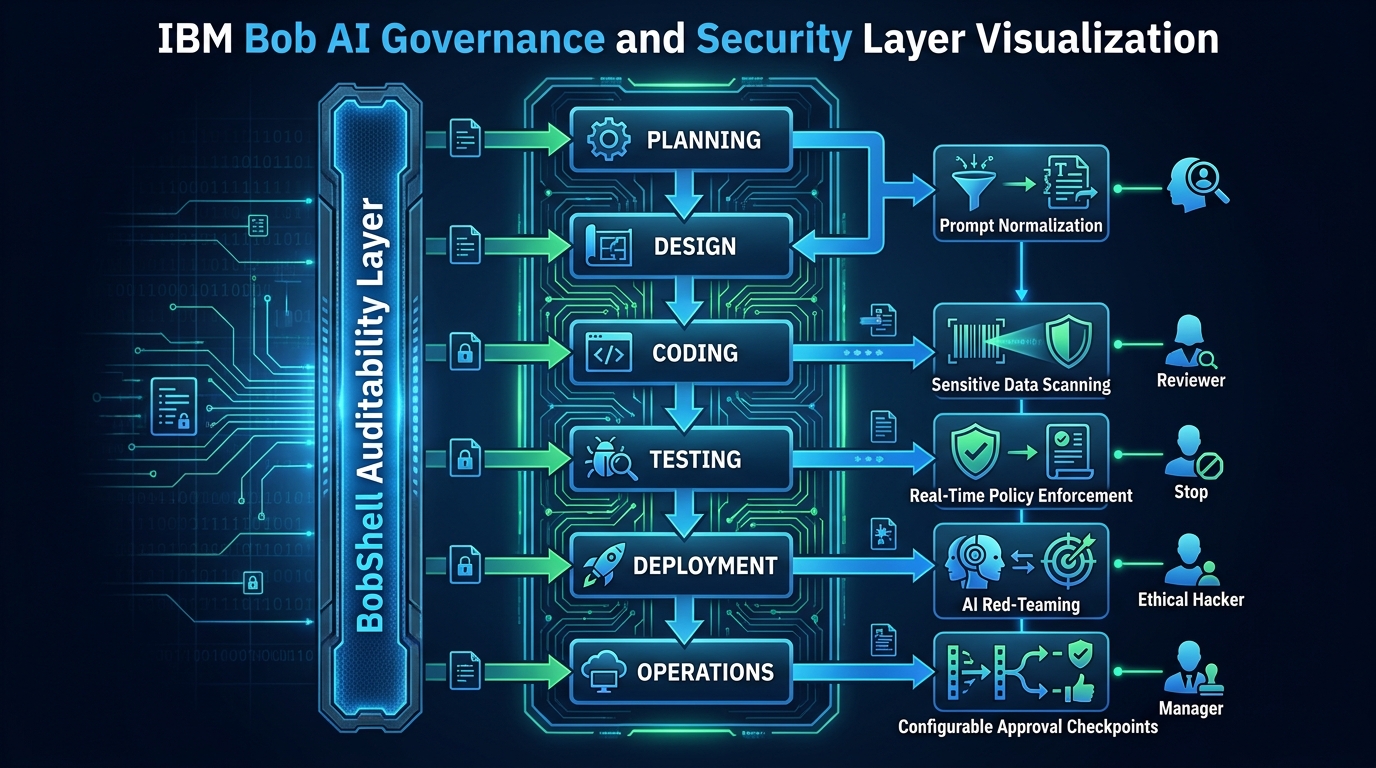

- Approval workflows: High-risk models (credit decisions, healthcare triage, fraud flagging) can require sign-off from model risk management or legal before the registry promotes them to production status. This creates an auditable governance checkpoint without slowing down deployment of lower-risk models.

- Automated canary promotion: Once a model is registered, the deployment pipeline can automatically route a fraction of live traffic to it and monitor business metrics against predefined thresholds before promoting to full production — all without manual intervention.

- Cross-team reuse: A registered model can be reused across multiple applications without different teams deploying separate copies, which reduces infrastructure waste and prevents versioning divergence.

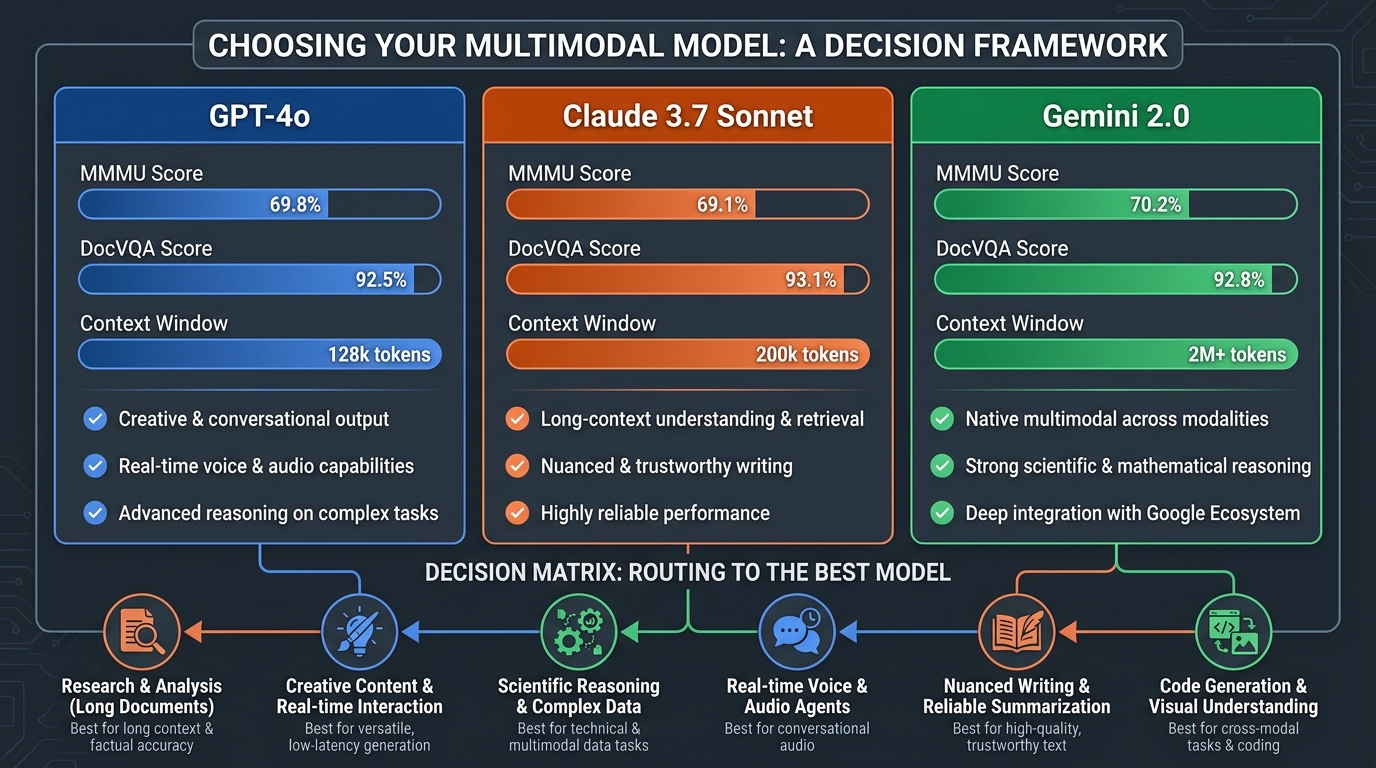

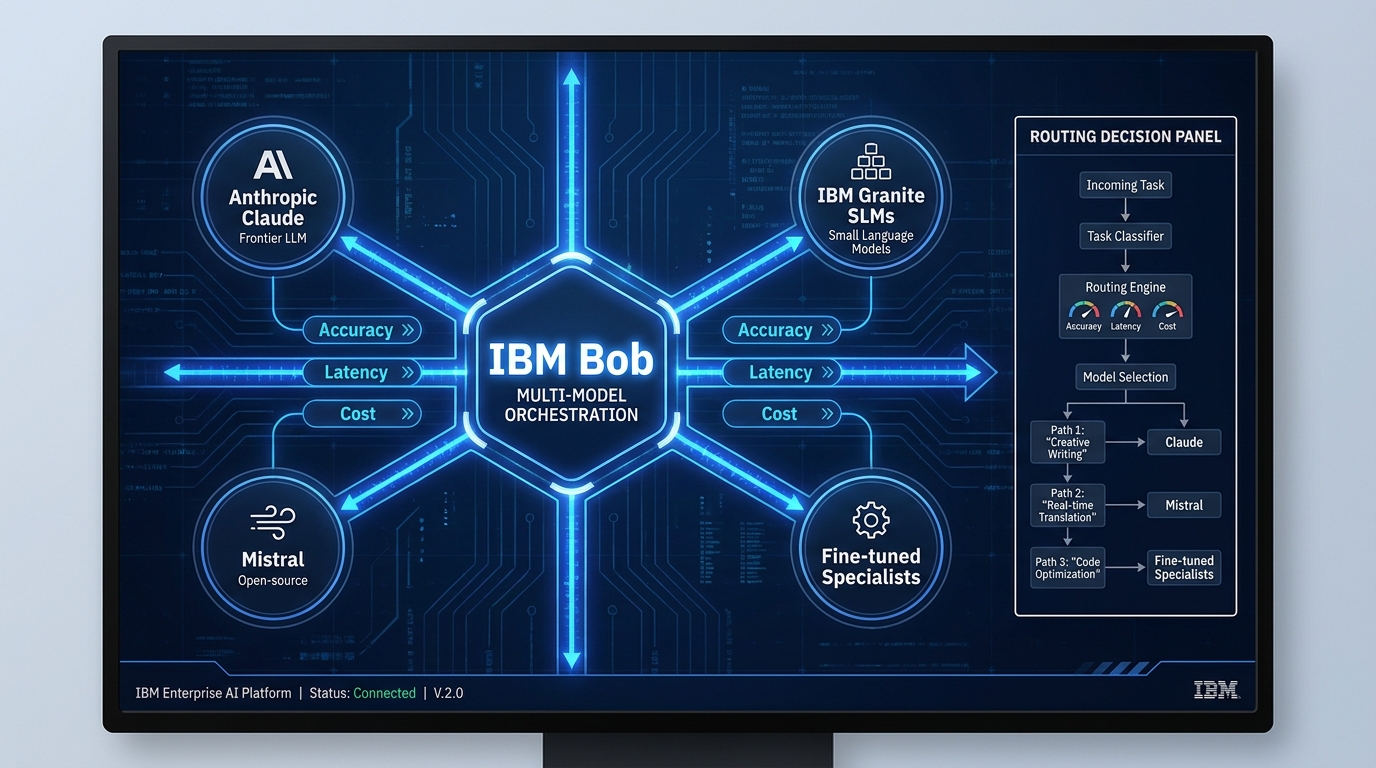

MLflow, SageMaker Model Registry, and Vertex AI — Choosing the Right Tool

MLflow’s model registry is the most commonly used open-source option and integrates cleanly with most experiment tracking setups. AWS SageMaker Model Registry and Google Vertex AI Model Registry are the managed equivalents for teams already committed to those clouds. For organizations running regulated workloads with complex approval requirements, purpose-built platforms like Domino Data Lab or DataRobot provide additional governance features on top of registry fundamentals.

The tooling choice matters less than the discipline of actually using one. Organizations that implement model registries report 60-80% faster deployment cycles and a significant reduction in the “where is the production model?” questions that consume senior engineering time.

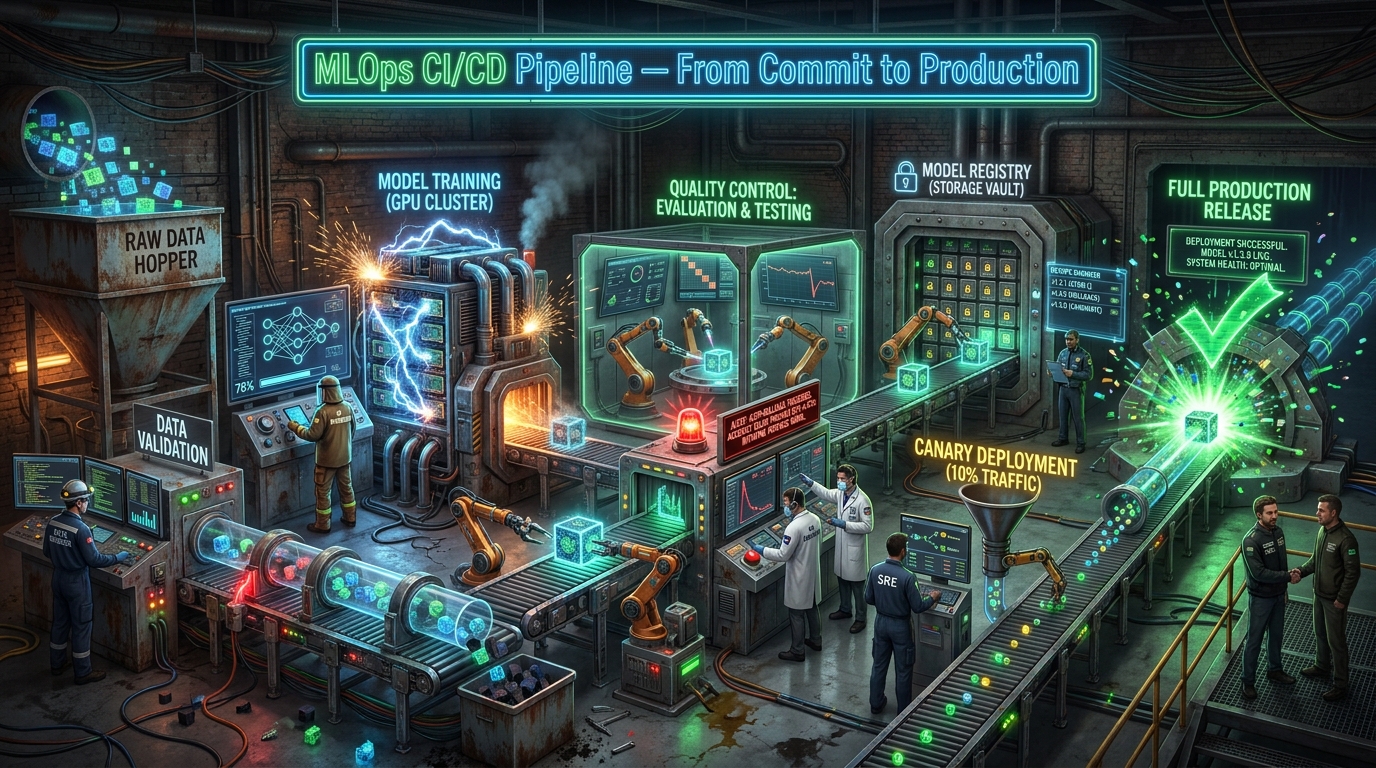

Building the ML CI/CD Pipeline: Not Just Continuous Delivery for Software

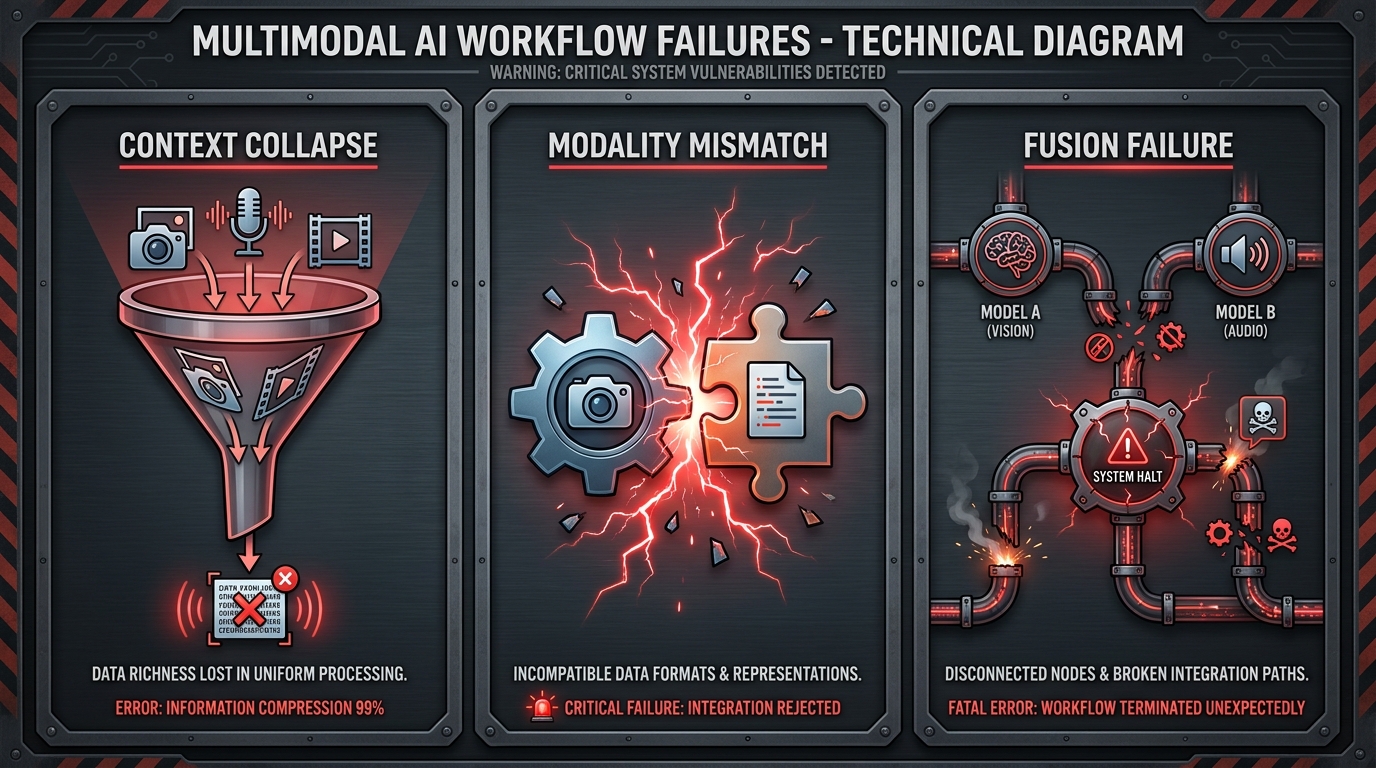

Software CI/CD is well understood. You commit code, tests run automatically, and if they pass, the build is deployed. ML CI/CD follows the same logic but has to account for a fundamental difference: in ML, the code, the data, and the model are all independently versioned artifacts that must all be validated and managed as part of the pipeline.

A change to the training data can break a model just as surely as a change to the model architecture. A change to feature computation logic can silently degrade production performance without triggering any code-level test failures. ML CI/CD must catch all three classes of change — and that requires a different pipeline design than standard software delivery.

The Three Stages of ML Continuous Integration

Stage 1 — Data Validation: Before a training run even begins, the pipeline validates the incoming data. This means checking schema consistency, testing for unexpected null rates or distributional shifts, validating referential integrity for joins, and confirming that the data version being used is the expected one. Tools like Great Expectations or Soda Core automate these checks and fail the pipeline if they detect data quality issues. This single stage prevents the majority of “the model was fine but production data was different” failures.

Stage 2 — Training and Evaluation: The CI system triggers an automated training run and evaluates the resulting model against a suite of tests — not just aggregate accuracy metrics, but slice-based performance checks (how does it perform on the minority class? on this geographic segment? on recent data?), bias detection checks (demographic parity, equalized odds), and regression tests against the current production model’s performance. If the challenger model doesn’t beat the champion by a predefined threshold on all required dimensions, the pipeline fails and the deployment stops.

Stage 3 — Integration and Contract Testing: Once a model passes evaluation, the pipeline tests that it integrates correctly with the serving infrastructure — that the input schema matches what the application will send, that response latency is within acceptable bounds under load, and that the model output conforms to the downstream application’s expected format. Breaking the serving contract silently is one of the most common causes of production incidents that take days to diagnose.
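Stages 2 and 3 can each be reduced to a small gate function that returns a list of failures. The sketch below assumes metrics where higher is better and a response payload represented as a dictionary; the function names, metric names, and thresholds are hypothetical.

```python
def evaluation_gate(champion, challenger, min_gain):
    """Stage 2: the challenger must beat the champion on every required metric.

    champion, challenger -- dicts mapping metric or slice name to a score
    min_gain             -- dict mapping each required metric to the minimum
                            improvement over the champion
    """
    failures = []
    for metric, required_gain in min_gain.items():
        if metric not in challenger:
            failures.append(f"{metric}: missing from challenger report")
            continue
        gain = challenger[metric] - champion[metric]
        if gain < required_gain:
            failures.append(
                f"{metric}: gain {gain:+.3f} below required {required_gain:+.3f}"
            )
    return failures


def contract_violations(payload, expected):
    """Stage 3: check a request or response payload against the serving contract.

    expected -- dict mapping field name to the required Python type
    """
    problems = []
    for name, required_type in expected.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], required_type):
            problems.append(
                f"{name}: expected {required_type.__name__}, "
                f"got {type(payload[name]).__name__}"
            )
    return problems
```

In a CI job, a non-empty result from either function would fail the build, so a regression on a single slice or a renamed output field stops the deployment before it reaches serving.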

Continuous Training: The Third “C” Most Teams Forget

Standard CI/CD covers continuous integration and continuous delivery. ML requires a third C: Continuous Training (CT). In production, the world keeps changing — user behavior shifts, the distribution of inputs drifts away from the training data, and model performance silently degrades. Without automated retraining triggers, you discover this when the business reports that the predictions “don’t seem to be working anymore.”

Continuous training systems monitor production data distributions against training baselines and trigger automated retraining runs when drift exceeds a defined threshold. The retrained model goes through the same CI/CD pipeline as any other model change — no special handling, no manual bypass. When it works well, models stay fresh without requiring constant human attention. When it detects an anomaly that’s too large to handle automatically, it escalates to a human reviewer rather than silently deploying a potentially degraded model.
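One common way to quantify drift on a single numeric feature is the Population Stability Index (PSI); it is used here as an example, not as the only option. In the sketch below, the bin count and the two thresholds (0.2 to retrain, 0.5 to escalate) are assumptions that a team would tune for its own data.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """PSI between a training baseline and a production sample of one feature."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range fall into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = proportions(baseline), proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def retraining_action(psi, retrain_at=0.2, escalate_at=0.5):
    """Map a drift score to the pipeline's next step."""
    if psi >= escalate_at:
        return "escalate_to_human"   # too large to handle automatically
    if psi >= retrain_at:
        return "trigger_retraining"  # goes through the normal CI/CD pipeline
    return "no_action"
```

The two-threshold design mirrors the behavior described above: moderate drift starts an automated retraining run, while a very large shift is handed to a reviewer instead of being retrained blindly.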

Canary Releases, Blue-Green Deployments, and Rollback Discipline

The single biggest risk in ML deployment isn’t the model itself — it’s deploying a change to a system that’s handling live traffic without a safe way to limit blast radius and reverse course quickly. Software teams learned this lesson years ago and developed a set of progressive deployment patterns that have become standard practice. ML deployment is only beginning to adopt them consistently.

Canary Deployments

A canary deployment routes a small percentage of live traffic, typically 5-10%, to the new model version while the remaining traffic continues to the current production model. The system monitors both populations on business-level metrics as well as technical health: not only latency and error rate, but also conversion rates, fraud catch rates, customer satisfaction scores, or whatever the model is supposed to move. If the new model performs at or above the current model across all monitored metrics, traffic is progressively shifted: 10% → 25% → 50% → 100%. If any metric degrades beyond its threshold, traffic is immediately routed back to the current production model and the deployment is paused for investigation.

The key discipline here is defining success criteria before deployment begins, not after. Teams that review metric dashboards retrospectively and debate whether a 0.3% drop in precision is “acceptable” are making governance decisions under pressure and usually get them wrong. Pre-defined rollback thresholds remove the ambiguity.
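Pre-defined rollback thresholds are easy to encode as data. The following sketch assumes metrics where higher is better and expresses each threshold as the maximum relative drop allowed versus the production model; the stage list, function name, and example metric names are hypothetical.

```python
# Traffic shares the canary moves through, agreed before deployment begins.
STAGES = [0.10, 0.25, 0.50, 1.00]


def next_canary_share(current_share, baseline, canary, max_relative_drop):
    """Return the canary's next traffic share, or 0.0 to roll back.

    baseline, canary  -- dicts of metric name to observed value
    max_relative_drop -- dict of metric name to the largest tolerated drop,
                         as a fraction of the baseline value
    """
    for metric, allowed_drop in max_relative_drop.items():
        floor = baseline[metric] * (1 - allowed_drop)
        if canary[metric] < floor:
            return 0.0  # any breached threshold sends all traffic back
    later = [share for share in STAGES if share > current_share]
    return later[0] if later else 1.0
```

Because the thresholds are an input decided in advance, the rollback decision is mechanical and nobody has to debate a borderline metric during the rollout.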

Blue-Green Deployments

Blue-green deployments maintain two identical production environments: one running the current model (blue) and one running the new model (green). Traffic is switched from blue to green all at once, but the blue environment stays up on standby so that traffic can be switched back immediately if a problem is detected after cutover. This pattern is better suited to models that need an atomic cutover (regulatory requirements, breaking schema changes) than to gradual rollout. The tradeoff is the cost of running two full production environments simultaneously, which makes it less appropriate for compute-heavy serving infrastructure.
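The cutover logic itself is small. This sketch models the two environments as plain callables behind a router; the class and method names are assumptions, and in practice the switch would happen at a load balancer or service mesh, not in application code.

```python
class BlueGreenRouter:
    """Sends all traffic to exactly one of two environments."""

    def __init__(self, blue, green):
        self._environments = {"blue": blue, "green": green}
        self.live = "blue"  # the current production model starts live

    def predict(self, features):
        return self._environments[self.live](features)

    def cut_over(self):
        # Atomic switch: calling it again rolls traffic back.
        self.live = "green" if self.live == "blue" else "blue"
        return self.live
```

Rollback is the same operation as cutover, which is why the idle environment has to stay fully provisioned.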

Shadow Mode Testing

Before either canary or blue-green deployment, shadow mode (or “dark launch”) is a powerful validation technique. In shadow mode, the new model receives a copy of every production request and generates predictions — but those predictions are not returned to the user or acted upon by the system. They’re logged and compared against the production model’s predictions. This allows teams to validate model behavior on real production traffic without any risk of affecting users. When shadow mode results are satisfactory, the team has much higher confidence going into a live canary deployment.
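In code, shadow mode amounts to calling both models but returning only one result. The sketch below treats models as plain callables and uses an in-memory list as the log; both choices, along with the function names, are assumptions for illustration.

```python
def handle_request(features, production_model, shadow_model, shadow_log):
    """Serve the production prediction and record the shadow one on the side."""
    served = production_model(features)
    try:
        shadowed = shadow_model(features)
        shadow_log.append({"features": features, "served": served, "shadow": shadowed})
    except Exception as exc:
        # A failing shadow model must never affect the user-facing response.
        shadow_log.append(
            {"features": features, "served": served, "shadow_error": repr(exc)}
        )
    return served


def disagreement_rate(shadow_log):
    """Fraction of logged requests where the two models disagreed."""
    compared = [record for record in shadow_log if "shadow" in record]
    if not compared:
        return 0.0
    return sum(r["served"] != r["shadow"] for r in compared) / len(compared)
```

In a real serving path the shadow call would usually run asynchronously so that it adds no latency to the user-facing response.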

Governance, Compliance, and the EU AI Act Reality in 2026

AI governance has moved from optional best practice to legal requirement. The EU AI Act’s enforcement provisions, which take effect in August 2026, require organizations deploying high-risk AI systems to maintain comprehensive documentation: model cards describing architecture, performance, and known limitations; centralized catalogs of deployed AI systems; version tracking with lineage back to training data; and evidence of human oversight mechanisms.

Fines under the Act are tiered: up to 7% of global annual turnover (or €35 million, whichever is higher) for prohibited AI practices, and up to 3% (or €15 million) for breaches of most other obligations, including those covering high-risk systems. Those figures get executive attention in a way that “MLOps best practices” typically do not. For enterprise teams building AI factories in 2026, governance infrastructure is no longer a separate workstream to tackle later. It needs to be built into the factory architecture from day one.

What Governance Infrastructure Looks Like in Practice

Model cards: Every model in the registry should have an associated model card — a structured document capturing training data provenance, evaluation results across key demographic and performance slices, known failure modes, intended use cases, and out-of-scope use cases. Generating model cards automatically as part of the training pipeline (rather than asking data scientists to write them manually after the fact) dramatically increases compliance and accuracy.

Audit trails: The factory must log every significant event in a model’s lifecycle — when it was trained, on what data, who approved it, when it was deployed, what traffic it received, when it was updated, and when it was retired. These logs need to be immutable, timestamped, and queryable. Systems like MLflow, with appropriate access controls, handle this reasonably well. For regulated industries like financial services or healthcare, purpose-built model risk management platforms offer additional features.
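One way to make a log tamper-evident is to chain entries by hash, so that editing any earlier record invalidates everything after it. The sketch below is an in-memory illustration under that assumption; the class name, field names, and event labels are hypothetical, and a production system would write to append-only storage.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def record(self, model, event, actor, details=None):
        entry = {
            "model": model,
            "event": event,  # e.g. trained, approved, deployed, retired
            "actor": actor,
            "details": details or {},
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self._entries[-1]["hash"] if self._entries else "0" * 64,
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry

    def verify(self):
        """Return True only if no entry has been altered or reordered."""
        prev = "0" * 64
        for entry in self._entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True

    def query(self, model):
        return [entry for entry in self._entries if entry["model"] == model]
```

Hash chaining detects tampering but does not prevent it, so it complements access controls and write-once storage instead of replacing them.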

Bias detection: Automated bias checks should run at multiple points in the pipeline — during training evaluation, during shadow mode, during canary deployment, and continuously in production. The specific metrics depend on the use case (demographic parity for hiring models, equalized odds for lending decisions, calibration for risk scoring), but the principle is the same: bias testing must be systematic and documented, not ad hoc and optional.
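As one example of a check that can run at each of those points, demographic parity compares the rate of positive predictions across groups. The sketch assumes binary predictions encoded as 0 and 1 and a parallel list of group labels; the function names are hypothetical, and the acceptable gap is a policy decision that is not shown here.

```python
def selection_rates(predictions, groups):
    """Share of positive predictions (value 1) within each group."""
    totals, positives = {}, {}
    for prediction, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + int(prediction == 1)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rates (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())
```

Running the same function in training evaluation, shadow mode, canary, and production gives comparable numbers at every stage, which is what makes the testing systematic.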

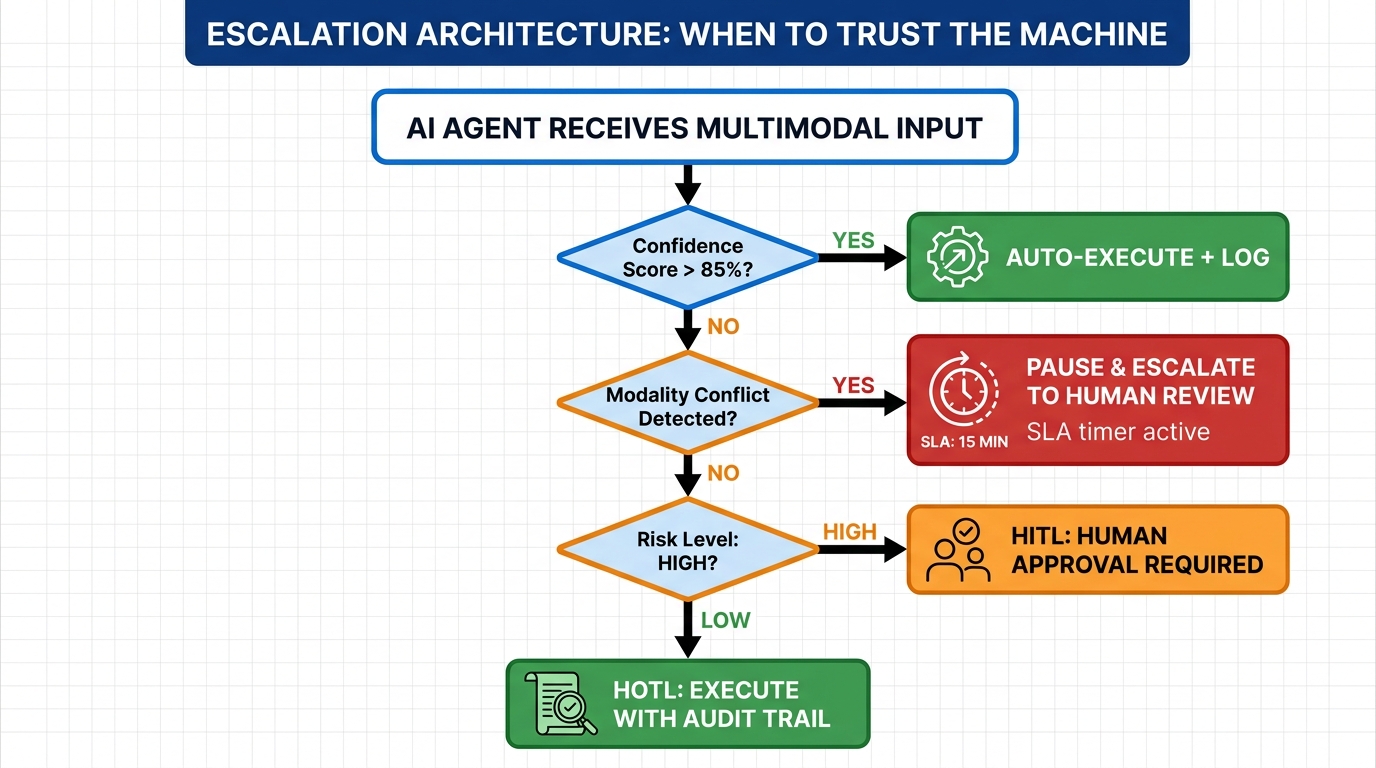

The Human-in-the-Loop Requirement

Agentic AI systems — models that take autonomous actions rather than just returning predictions — face particularly stringent governance requirements. Moody’s reported that human-in-the-loop agentic AI cut production time by 60% by surfacing concise, decision-ready information for human reviewers rather than attempting fully automated decisions in high-stakes contexts. This isn’t a technical limitation; it’s a governance choice that maintains compliance, auditability, and appropriate human accountability for consequential decisions.

Building human oversight checkpoints into automated pipelines — particularly for models that affect credit, healthcare, employment, or law enforcement — is a design requirement, not an afterthought. The factory architecture should make it easy to route model outputs through human review queues for specific decision categories, with clean logging of both the model’s recommendation and the human’s final decision.
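A review checkpoint can be sketched as a routing function plus a logging function. The category set, function names, and record fields below are assumptions for illustration; the queue and log are plain lists standing in for real infrastructure.

```python
# Hypothetical decision categories that always require a human reviewer.
REVIEW_REQUIRED = {"credit", "healthcare", "employment", "law_enforcement"}


def route_output(category, recommendation, review_queue, decision_log):
    """Return the decision if it may be automated; otherwise queue it and return None."""
    if category in REVIEW_REQUIRED:
        review_queue.append(
            {"category": category, "model_recommendation": recommendation}
        )
        return None
    decision_log.append({
        "category": category,
        "model_recommendation": recommendation,
        "final_decision": recommendation,
        "decided_by": "model",
    })
    return recommendation


def record_review(item, reviewer, final_decision, decision_log):
    """Log the model's recommendation next to the human's final decision."""
    entry = {**item, "final_decision": final_decision, "decided_by": reviewer}
    decision_log.append(entry)
    return entry
```

Keeping both the recommendation and the final decision in one record makes it possible to measure later how often reviewers override the model.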

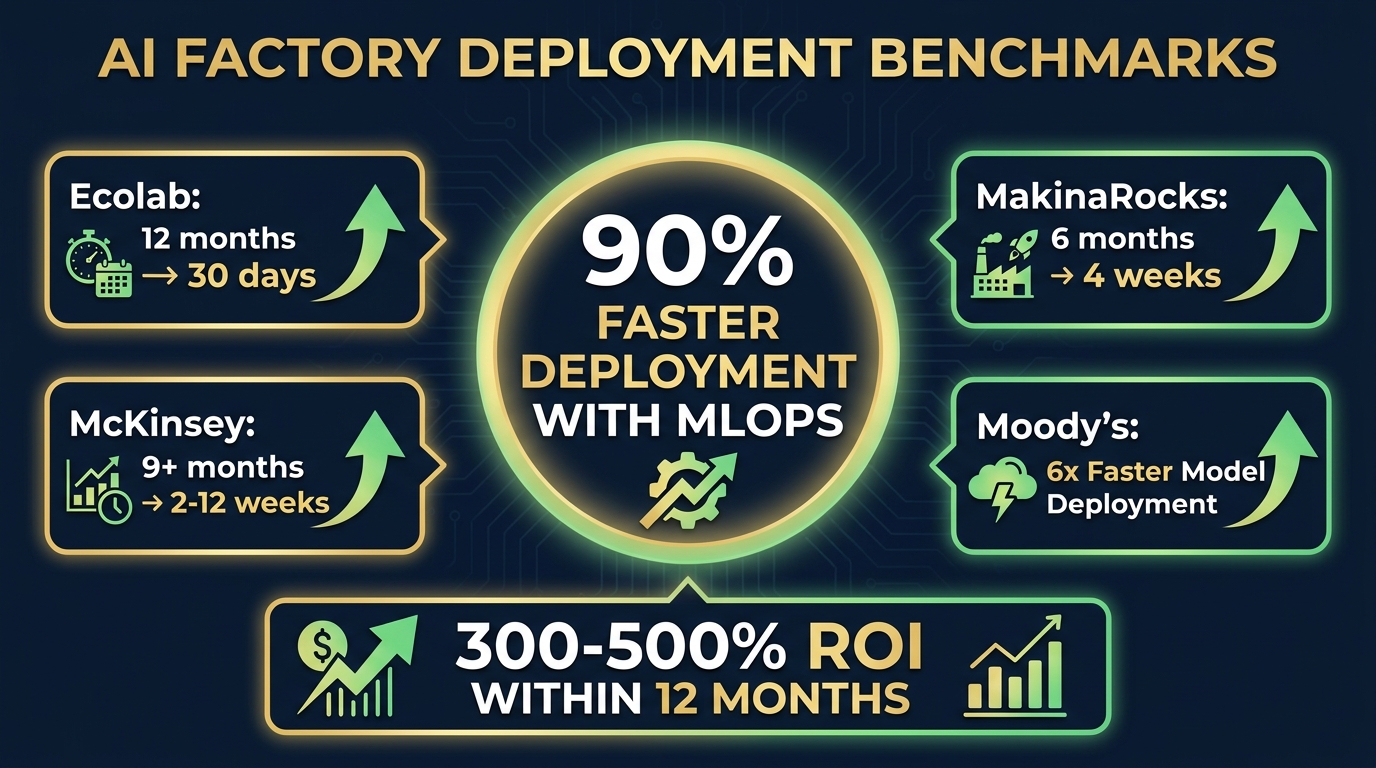

Real Deployment Benchmarks: What’s Actually Achievable

The gap between “what’s theoretically possible with perfect MLOps” and “what organizations actually achieve when they build real AI factories” is significant. Here’s what the documented evidence shows.

Documented Case Results

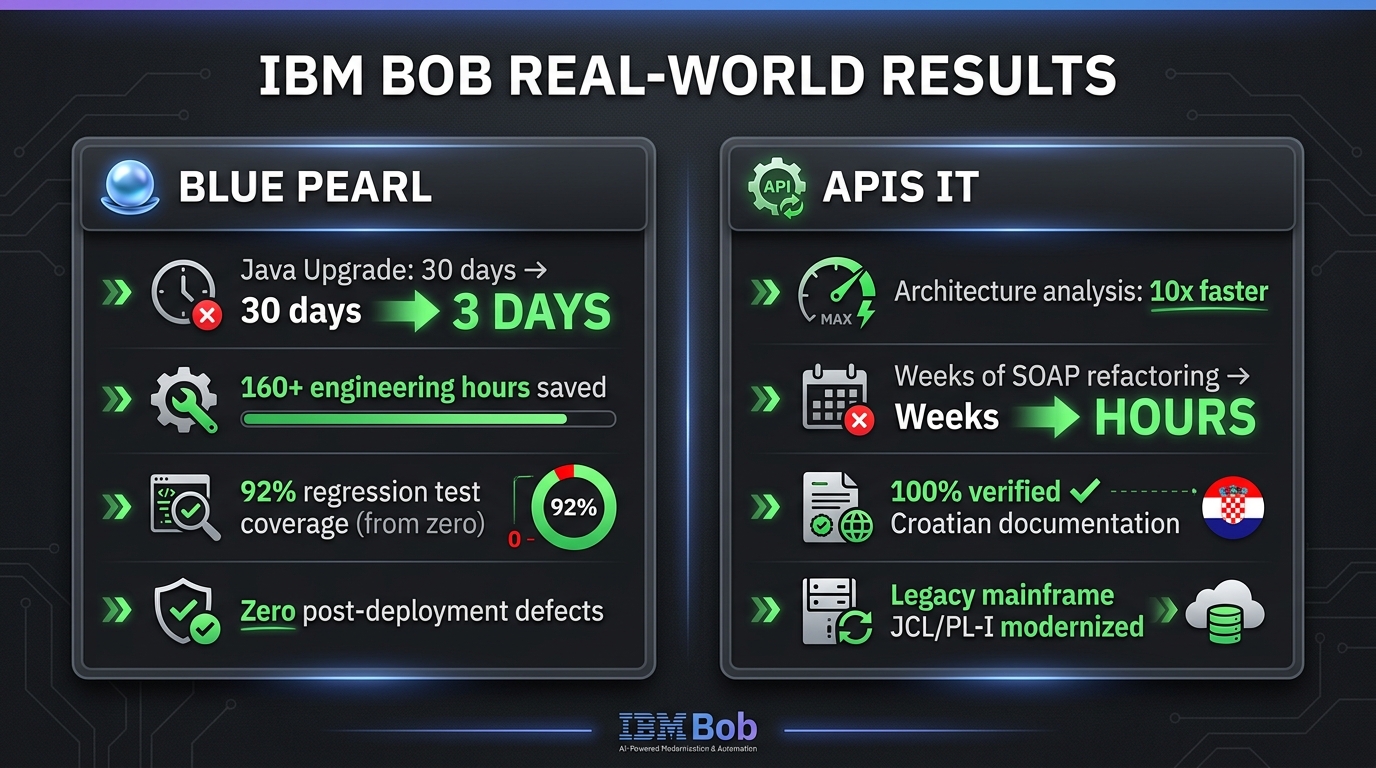

Ecolab: Reduced model deployment time from 12 months to 25-30 days by implementing cloud-based MLOps pipelines, automated service accounts, and systematic monitoring. The key change wasn’t a single technology — it was standardizing the process so that the same pipeline handled every new model rather than each project team building their own deployment approach.

MakinaRocks (manufacturing): Cut deployment from over 6 months to approximately 4 weeks, a reduction of more than 80%, while simultaneously reducing the MLOps setup manpower required by 50%. The efficiency gain came from building reusable pipeline components that manufacturing teams could configure for new use cases without starting from scratch.

Moody’s with Domino Data Lab: Deployed risk models 6x faster (months-long timelines reduced to weeks) using an enterprise MLOps platform that standardized APIs, enabled instant redeployment from beta testing feedback, and centralized model management across teams.

McKinsey’s documented benchmark: Organizations with mature MLOps practices take ideas from concept to live deployment in 2-12 weeks, compared to 9+ months traditionally, without requiring additional headcount. The speed gain is almost entirely from eliminating repetitive manual work and waiting time.

What Mature MLOps Actually Delivers vs. Where Teams Start

Industry data from multiple sources suggests a consistent pattern. Organizations without structured deployment tooling get roughly 20% of trained models into production. Organizations with integrated MLOps infrastructure raise that to 60-70%. The remaining 30-40% of “failures” aren’t technical failures — they’re models that fail evaluation gates, fail business case reviews, or are superseded by better approaches before deployment completes. That’s the system working as intended.

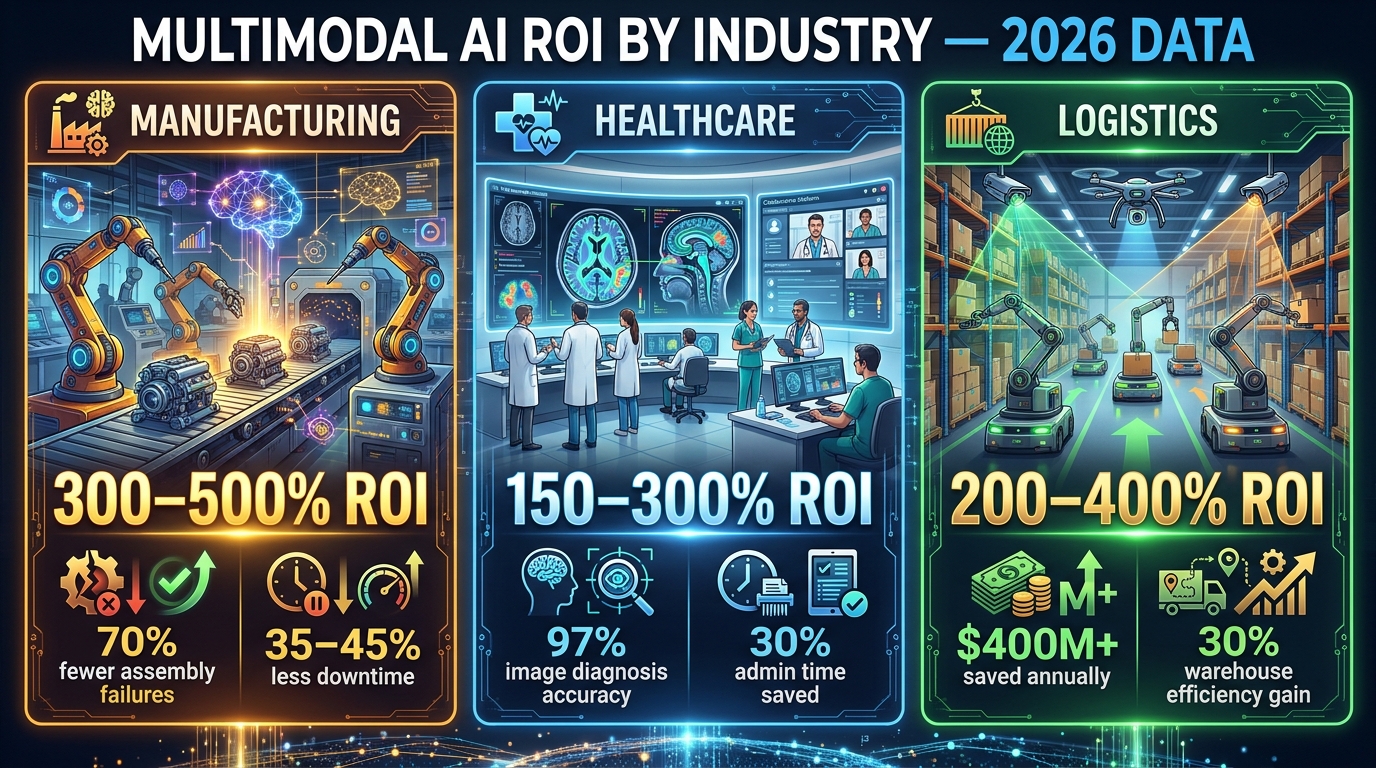

ROI from MLOps investment follows a J-curve pattern: the first 6-12 months require significant infrastructure build cost with limited direct model output benefit. Once the factory is operational, Forrester-cited estimates put realized ROI at 300-500% within the first year of production operation, with individual deployments generating direct productivity and cost savings that compound as more models are added to the factory.

What “Days” Deployment Actually Requires

The headline benchmarks of deploying new models in “days” need context. That timeline is achievable — but it assumes the entire factory infrastructure is already in place and the new model fits within existing patterns (same data sources, same serving requirements, same monitoring approach). Truly novel models requiring new data pipelines, new serving endpoints, or new monitoring logic still require longer timelines. The factory accelerates iteration and deployment of models within established patterns; it doesn’t eliminate infrastructure work for genuinely new use cases.

The Compute Architecture Question: Cloud, On-Premise, and Hybrid

Where you run the compute for your AI factory is increasingly a strategic decision rather than a purely technical one. The answer depends on your regulatory environment, data sovereignty requirements, cost profile, and the nature of your workloads.

Cloud-Native AI Factories

For most enterprises starting from zero, managed cloud platforms such as AWS SageMaker, Google Vertex AI, and Azure ML offer the fastest path to a functioning factory. They provide integrated feature stores, experiment tracking, model registries, deployment endpoints, and monitoring in pre-built, managed form. The tradeoffs are less predictable costs at scale and data residency constraints for regulated industries.

DigitalOcean’s March 2026 AI factory launch in Richmond, powered by NVIDIA B300 HGX systems with 400Gbps RDMA fabric and NVIDIA Dynamo 1.0 (which claims a 3x cost reduction over previous generation Hopper GPUs), shows that competitive managed GPU compute is no longer exclusively the domain of hyperscalers. Mid-market organizations have more options than they did 24 months ago.

On-Premise and Hybrid Architectures

Financial services, healthcare, and government organizations frequently face data residency requirements that preclude full cloud deployment. For these organizations, hybrid architectures — with training and sensitive data processing on-premise and model serving potentially split between on-prem and cloud endpoints — have become the standard answer. The complexity cost is real: hybrid architectures require more sophisticated networking, identity federation, and data movement tooling. The governance benefit justifies that cost for regulated workloads.

NVIDIA’s reference architecture for enterprise AI factories — using Blackwell and Vera Rubin hardware, NIM microservices for model serving, and Run:ai for workload orchestration — provides a structured blueprint for on-premise deployments that mirrors the manageability of cloud platforms. NVIDIA’s own internal deployment reportedly scaled hundreds of isolated AI pilots into a unified, secure workflow using this stack, with 1.1 billion documents ingested via customized RAG architecture.

Rack-Scale Systems and What They Change

The shift to rack-scale AI systems, such as NVIDIA’s NVL72 (72 GPUs and 36 CPUs in a single rack, delivering 35x token throughput over the previous Hopper generation at equivalent power) and Groq’s LPX rack with 256 Language Processing Units, fundamentally changes the economics of inference at the infrastructure layer. When a single rack can serve that volume of model requests, the per-token cost of inference drops significantly, and the case for running high-volume inference workloads on-premise instead of paying per-call cloud API rates gets stronger. For organizations with high inference volume (millions of model calls per day), this meaningfully changes the cost calculus in 2026.

The Team Structure That Actually Ships Models

Technology alone doesn’t build a functioning AI factory. The team structure and ownership model determine whether the infrastructure gets used or becomes another internal platform that everyone ignores because it’s too complex to navigate without help.

The Platform Team Model

The most effective structure in large organizations is a dedicated ML Platform team — separate from the data science teams that build models — whose job is to build and maintain the factory itself. This team owns the feature store, the model registry, the CI/CD pipelines, the serving infrastructure, and the monitoring systems. They provide these as internal services that domain-specific data science teams consume through self-service tooling.

This separation solves a persistent organizational problem: without a dedicated platform team, infrastructure work gets neglected because data scientists are incentivized to build models (the visible output), not pipelines (the invisible plumbing). When the platform team exists and is measured on platform adoption and deployment velocity rather than model performance, the incentives align correctly.

Self-Service Is the Goal, Not the Starting Point

True self-service — where a data scientist can take a trained model and deploy it to production without requiring assistance from the platform team or DevOps — is the target state for a mature AI factory. But it typically takes 12-18 months of platform investment to get there. Teams that try to build self-service platforms before they have operational experience with what data scientists actually need end up building the wrong abstractions.

The better path is starting with high-touch support (the platform team helps each team deploy their first model), building reusable components from that experience, and progressively automating the handholding until the platform genuinely serves itself. Addepto’s documented experience with enterprise MLOps platforms shows this trajectory clearly: the first deployment with platform support takes weeks; by the tenth deployment on the same platform, teams that understand the system can move in days.

Ownership After Deployment

One of the most consistent failure modes in enterprise AI is the “who owns it in production?” problem. The data scientist who built the model has moved on to the next project. The DevOps team doesn’t understand the model well enough to triage business-logic failures. The application team assumes the model team handles retraining. Nobody is watching the drift metrics. The model slowly degrades over months until a business stakeholder notices that “the predictions seem off.”

AI factories need explicit ownership assignment for every production model — a named team or individual who is accountable for production performance, drift responses, scheduled retraining, and eventual retirement. This is organizational policy, not technology. But without it, even the best technical infrastructure produces models that aren’t actually maintained.

Common Failure Modes — and How to Avoid Each One

After examining dozens of enterprise AI deployment efforts, several recurring failure patterns stand out. These aren’t obscure edge cases. They’re the dominant reasons that well-resourced teams fail to build functioning AI factories.

Failure Mode 1: Building the Factory After the Models

Many organizations start deploying individual models ad hoc — manually, bespoke, one at a time — with the intention of “building proper infrastructure later.” The factory never gets built because by the time the team returns to it, they’re already committed to maintaining all the bespoke deployments they created. Start with the factory. Deploy your first production model through it, even if that means the first deployment takes longer than a manual approach would have. The discipline of building the infrastructure first pays off from the second model onward.

Failure Mode 2: Monitoring Only Technical Metrics

Latency, error rates, and throughput are necessary monitoring signals — but they’re insufficient. A model can be technically healthy (fast, low error rate, high uptime) while performing terribly on the business metric it was deployed to move. Production monitoring must include business KPIs: conversion rate impact, fraud detection rate, recommendation click-through, risk score accuracy against realized outcomes. Teams that monitor only technical health discover model drift from business stakeholder complaints rather than automated alerts.

Failure Mode 3: Treating Generative AI Differently

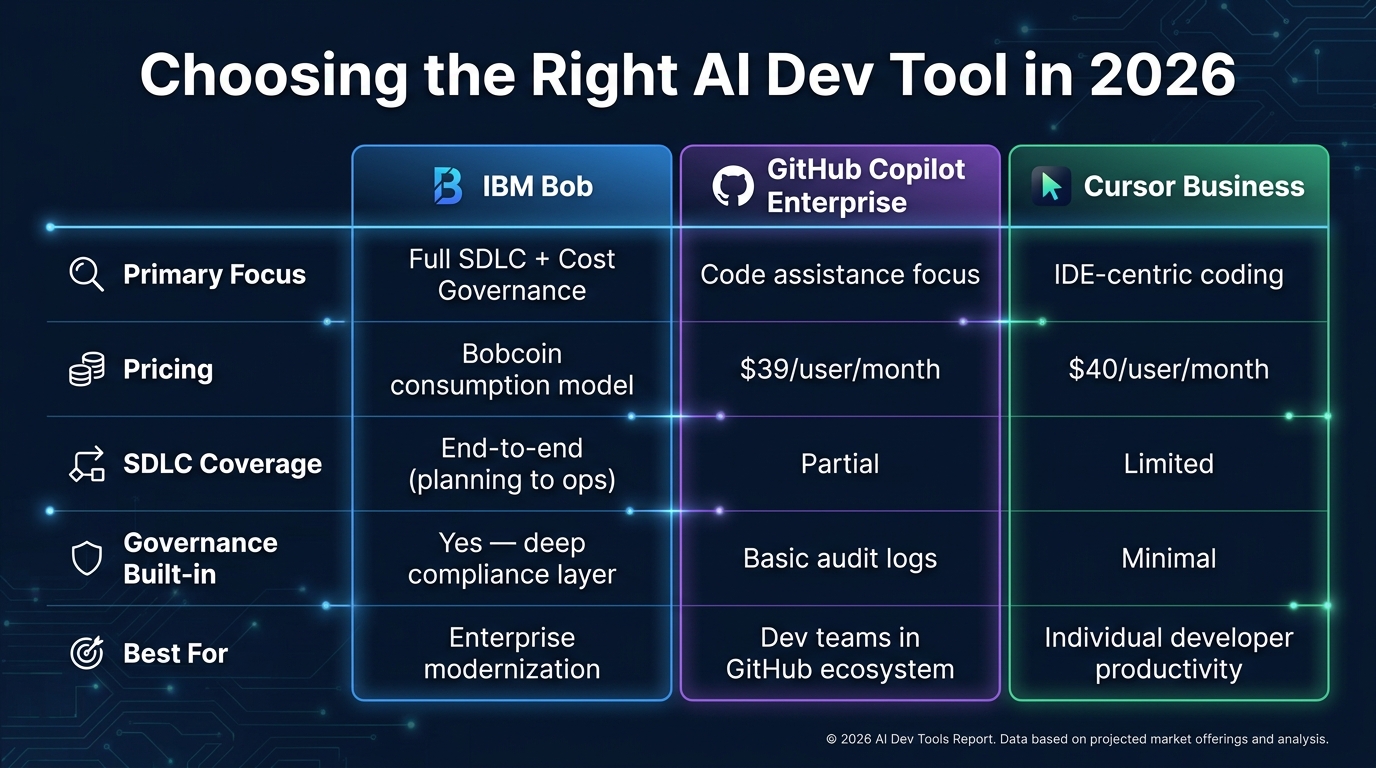

Many organizations have separate, informal deployment processes for LLMs and generative AI models because “they’re different from traditional ML.” The functional requirements are different in some ways — prompt versioning, response quality evaluation, and hallucination monitoring require different tooling — but the governance and operational requirements are the same or stricter. Generative AI models in production need model registries, version control, drift monitoring, approval workflows, and rollback capability just as much as any classification or regression model.
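Prompt versioning can follow the same registry-and-rollback pattern as any other model artifact. The sketch below assumes a hypothetical in-memory `PromptRegistry` that derives a version ID from a content hash of the prompt template and its generation settings; the class, its methods, and the model name `example-llm` are illustrative and do not correspond to a specific tool.

```python
import hashlib
import json


class PromptRegistry:
    """Hypothetical in-memory registry: content-addressed prompt versions with rollback."""

    def __init__(self):
        self._versions = {}  # version id -> prompt record
        self._history = []   # version ids in the order they were promoted

    def register(self, template, model_name, temperature):
        record = {"template": template, "model": model_name, "temperature": temperature}
        # Hash a canonical JSON form so identical content always gets the same id.
        canonical = json.dumps(record, sort_keys=True)
        version_id = hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]
        self._versions[version_id] = record
        return version_id

    def promote(self, version_id):
        if version_id not in self._versions:
            raise KeyError(f"unknown prompt version: {version_id}")
        self._history.append(version_id)

    def active(self):
        return self._history[-1] if self._history else None

    def rollback(self):
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self._history[-1]


prompts = PromptRegistry()
v1 = prompts.register("Summarize: {text}", "example-llm", 0.2)
v2 = prompts.register("Summarize in three bullets: {text}", "example-llm", 0.2)
prompts.promote(v1)
prompts.promote(v2)
prompts.rollback()  # the active prompt is v1 again
```

Hashing the settings together with the template means a temperature change counts as a new version, which keeps the audit trail honest about what was actually serving traffic.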

Failure Mode 4: Skipping Staging Environments

A striking number of organizations push ML model updates directly to production because “it passed unit tests in dev.” Production data almost always differs from training and dev data in ways that can’t be fully anticipated. A staging environment that receives a continuous feed of production-representative traffic, with production-grade monitoring and load, catches the majority of “it worked in dev but broke in prod” failures before they reach users. The cost of running a staging environment is small compared to the cost of a production model incident.
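One common way to use a staging feed is shadow comparison: replay the same requests through the current production model and the candidate, then measure how often they disagree. The sketch below assumes both models are plain callables returning a numeric score; `shadow_disagreement_rate`, the toy models, and the tolerance are hypothetical stand-ins.

```python
def shadow_disagreement_rate(requests, production_model, candidate_model, tolerance=0.0):
    """Fraction of requests where the two models differ by more than `tolerance`."""
    if not requests:
        return 0.0
    disagreements = sum(
        1
        for request in requests
        if abs(production_model(request) - candidate_model(request)) > tolerance
    )
    return disagreements / len(requests)


# Toy stand-ins for real models: the candidate behaves differently on large inputs.
def production_model(x):
    return x * 2


def candidate_model(x):
    return x * 2 if x < 8 else x * 2 + 1


replayed_traffic = list(range(10))
rate = shadow_disagreement_rate(replayed_traffic, production_model, candidate_model)
print(f"disagreement rate: {rate:.0%}")
```

A disagreement rate is not a quality verdict by itself, but an unexpected jump is a cheap signal that the candidate deserves a closer look before promotion.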

Failure Mode 5: Data Fragmentation Without a Resolution Plan

Only 20% of organizations feel fully prepared to scale AI, even though 98% are exploring it. The top reason is data fragmentation: ERP systems, CRMs, data warehouses, and operational databases that don’t integrate cleanly with the ML training pipeline. No factory architecture can overcome fundamentally broken data infrastructure. Before investing in MLOps tooling, organizations need an honest assessment of whether their data layer can reliably feed the models they’re trying to build. If it can’t, the first investment needs to be data infrastructure, not model deployment.

What Building It Actually Looks Like: A Phased Approach

For teams starting from minimal MLOps infrastructure, building a full AI factory isn’t a single project — it’s a phased investment that spans 12-24 months. Here’s a realistic sequence based on documented enterprise implementations.

Phase 1 (Months 1-3): Foundations

Focus entirely on the basics that every subsequent capability depends on. Stand up experiment tracking (MLflow is the lowest-friction start). Implement version control for training code and data. Deploy your first model through a manual but documented process. If nothing else, create a simple model registry spreadsheet, so that you get into the habit of tracking what’s in production before automating it. Identify and fix the three worst data quality issues in your highest-priority use case.

Phase 2 (Months 4-9): Automation

Build the CI/CD pipeline around the process you documented in Phase 1. Automate data validation. Automate training runs triggered by data updates. Add the model registry as a real system. Set up basic drift monitoring for production models. Get your second and third model deployed through the pipeline — the automation pays dividends immediately. Establish the platform team or assign clear ownership for factory maintenance.
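For the basic drift monitoring mentioned above, one widely used statistic is the Population Stability Index (PSI), which compares the binned distribution of a feature at training time against its distribution in production. The function below is a minimal pure-Python sketch. The equal-width binning, the smoothing constant `eps`, and the alert threshold of 0.25 (a commonly cited rule of thumb) are assumptions to tune for your own data.

```python
import math


def psi(reference, current, bins=10, eps=1e-6):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Floor each proportion at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    ref_p, cur_p = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


# Illustrative data: a training-time sample and a production sample shifted upward.
training_sample = [i / 100 for i in range(100)]
production_sample = [0.5 + i / 200 for i in range(100)]

score = psi(training_sample, production_sample)
DRIFT_THRESHOLD = 0.25  # assumed alerting threshold
print(f"PSI = {score:.2f}, drift alert: {score > DRIFT_THRESHOLD}")
```

A scheduled job that computes this per feature and alerts above the threshold is often enough for a first version; more sophisticated detectors can replace it once the pipeline exists.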

Phase 3 (Months 10-18): Scale and Governance

Implement the feature store. Add canary deployment and automated rollback. Build the model card and audit trail infrastructure. Begin migrating existing bespoke model deployments onto the factory. Develop self-service documentation. Add business metric monitoring alongside technical monitoring. Address the governance requirements your compliance and legal teams need for the EU AI Act or equivalent regulations in your jurisdiction.
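Automated rollback depends on criteria that are written down before the canary starts. As a sketch under stated assumptions, the rule format below expresses each criterion as a maximum relative drop on a higher-is-better metric; `RollbackRule`, `violated_rules`, and the metric names and values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RollbackRule:
    metric: str               # name of a higher-is-better metric
    max_relative_drop: float  # 0.05 means roll back on a drop of more than 5%


def violated_rules(baseline, canary, rules):
    """Return the rules the canary violates; a non-empty result means roll back."""
    violated = []
    for rule in rules:
        base, cand = baseline[rule.metric], canary[rule.metric]
        if base > 0 and (base - cand) / base > rule.max_relative_drop:
            violated.append(rule)
    return violated


rules = [
    RollbackRule("conversion_rate", 0.05),
    RollbackRule("precision", 0.02),
]
baseline_metrics = {"conversion_rate": 0.040, "precision": 0.900}
canary_metrics = {"conversion_rate": 0.036, "precision": 0.895}

violations = violated_rules(baseline_metrics, canary_metrics, rules)
if violations:
    print("roll back:", ", ".join(rule.metric for rule in violations))
```

Here the canary's conversion rate falls by roughly 10% against a 5% limit, so the rollback fires without anyone having to make a judgment call mid-incident. Lower-is-better metrics such as latency would need the comparison inverted.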

Phase 4 (Month 18+): Optimization and Self-Service

By this point the factory is operational and the focus shifts to reducing friction. Streamline onboarding so a new data scientist can deploy their first model through the factory in a single day rather than a week. Add automated capacity management. Build feedback loops from production performance back to training pipeline improvements. Begin exploring more advanced capabilities: online learning, multi-armed bandit frameworks for model comparison, automated hyperparameter optimization triggered by drift detection.

Conclusion: The Factory Mindset Is the Strategy

The organizations producing measurable AI value in 2026 share a common characteristic: they stopped treating model deployment as an engineering task and started treating it as a manufacturing capability. The question isn’t “can our team deploy a model?” — it’s “how many models can our infrastructure deploy per quarter, with what average lead time, at what confidence level that each one meets quality and compliance standards?”

That shift in framing changes everything: what you invest in, how you staff, what metrics you track, and how you explain AI ROI to the business. A data scientist who can train better models is valuable. A platform that can systematically convert trained models into production systems is an enterprise capability with compounding returns.

The benchmarks are clear and consistent across industries: organizations with mature AI factory infrastructure deploy in days rather than months, get 60-70% of trained models into production rather than 20%, and document ROI of 300-500% on MLOps investment within 12 months of operation. None of those numbers are marketing figures — they come from documented case studies at real companies that built the plumbing before they built the models.

Actionable Takeaways

- Start with a model registry today. Even a simple, structured tracking system for what models are in production, what data they were trained on, and who owns them changes the operational maturity of your AI practice immediately.

- Define rollback criteria before every deployment. Know exactly which metric, dropping by exactly how much, triggers an automatic rollback. Remove the discretion: human judgment is slower and less reliable under pressure.

- Invest in data validation before MLOps tooling. No deployment pipeline makes up for training and serving on different data distributions. Fix the data layer first.

- Assign explicit production owners. Every model in production needs a named person or team accountable for its ongoing health. Without that, even the best factory degrades into an unmaintained graveyard of slowly rotting models.

- Build governance in, not on. Model cards, audit trails, and bias checks added retroactively are painful and incomplete. Architect them into the pipeline from the beginning — especially in light of EU AI Act requirements taking effect in 2026.

- Measure the factory, not just the models. Track deployment lead time, production success rate, and time-to-rollback alongside model accuracy. The factory metrics tell you whether you’re building a capability or just accumulating technical debt in a new location.
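The factory-level metrics in the last takeaway can be computed from very little data. The sketch below assumes you record a training date and, where applicable, a deployment date per model; `ModelRecord`, `factory_metrics`, and the sample records are illustrative only.

```python
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Optional


@dataclass
class ModelRecord:
    name: str
    trained_on: date
    deployed_on: Optional[date]  # None if the model never reached production


def factory_metrics(records):
    """Summarize how reliably and how quickly trained models reach production."""
    lead_times = [(r.deployed_on - r.trained_on).days for r in records if r.deployed_on]
    return {
        "models_trained": len(records),
        "models_deployed": len(lead_times),
        "production_rate": len(lead_times) / len(records) if records else 0.0,
        "median_lead_time_days": median(lead_times) if lead_times else None,
    }


# Illustrative records, not real data.
records = [
    ModelRecord("churn-scorer", date(2026, 1, 5), date(2026, 1, 9)),
    ModelRecord("fraud-detector", date(2026, 2, 2), date(2026, 2, 12)),
    ModelRecord("demand-forecast", date(2026, 3, 1), None),
]

summary = factory_metrics(records)
print(summary)
```

Tracking these numbers per quarter shows whether lead time and production rate are actually improving as the factory matures.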

Building an AI factory is not glamorous work. It’s infrastructure work — the kind that nobody celebrates when it’s running well but that everyone feels acutely when it isn’t. But it is the work that determines whether the next twelve months of AI investment produces working software or another collection of promising-but-undeployed experiments. The technology exists. The patterns are proven. The only variable left is whether your organization chooses to build the factory or keep wondering why the models never seem to make it out.