Every week, another dozen headlines claim the AI world has changed forever. Another model drops with a benchmark that supposedly shatters everything before it. Another company announces a funding round that redefines what a technology valuation even means. And yet most people — business owners, operators, curious professionals — close their browser tabs feeling more confused than informed.

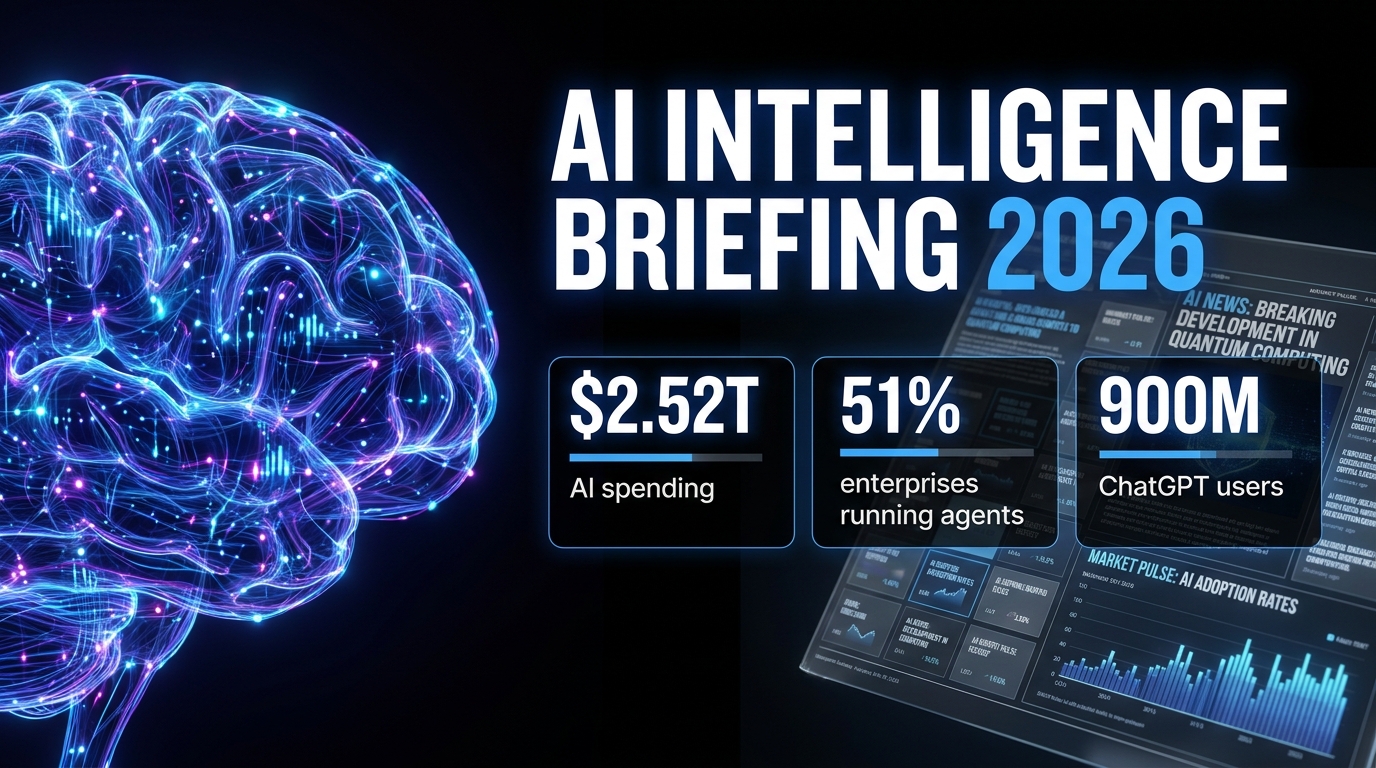

This isn’t a collection of breathless announcements. It’s a structured intelligence briefing on what’s actually happening across the AI landscape right now, told in plain language with real numbers attached. The model wars, the agentic AI surge, the trillion-dollar investment question, the chip power dynamics, the regulation clock ticking toward August, the safety problems getting quietly worse, and the workforce shifts that keep getting misrepresented.

If you’ve been trying to separate the signal from the noise in AI news, this is the briefing you’ve been waiting for. We’re covering the biggest developments of early 2026, what they mean in practice, and — crucially — what most coverage leaves out entirely.

The Model Wars: Who’s Actually Winning in 2026

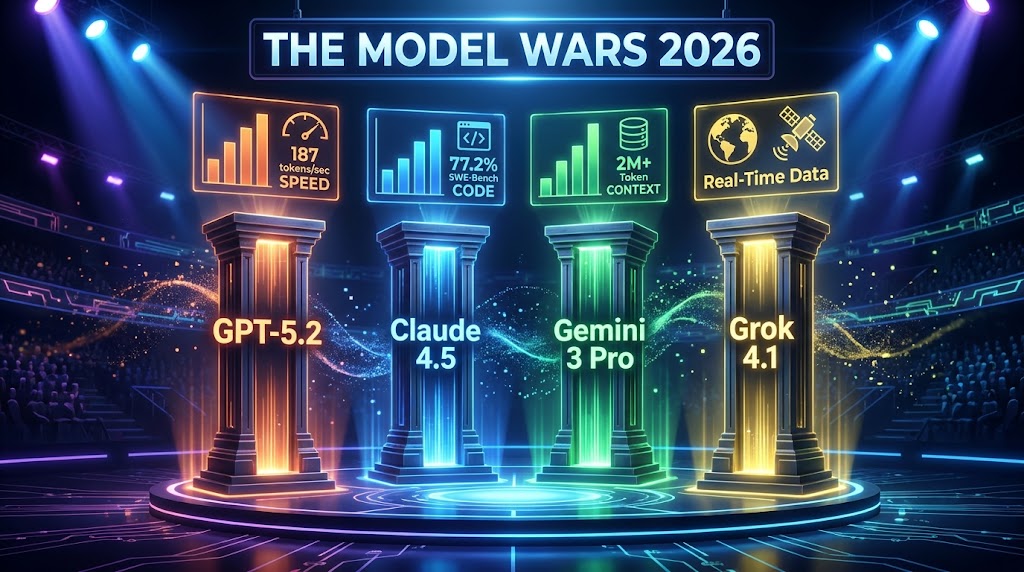

There are now four serious competitors at the frontier of large language model performance: OpenAI’s GPT-5 series, Anthropic’s Claude 4.5 and Opus variants, Google’s Gemini 3 family, and xAI’s Grok 4.1. Each has carved out a distinct position — not because any single model is universally dominant, but because “best” now entirely depends on what you’re asking the model to do.

OpenAI’s GPT-5 Series: Speed and Ecosystem

OpenAI released the GPT-5 series in stages, with GPT-5.2 and GPT-5.4 now the workhorses of its platform. The headline performance number for GPT-5.2 is its output speed — approximately 187 tokens per second — making it the fastest frontier model in production use by a meaningful margin. For applications where latency matters (real-time customer interactions, voice interfaces, high-volume pipelines), that speed advantage is genuinely significant.

Beyond raw throughput, GPT-5.x models perform at or near the top on math benchmarks and professional knowledge evaluations. OpenAI’s own testing suggests GPT-5 beats expert-level humans on roughly 70% of professional knowledge tasks tested — a claim that invites scrutiny but is directionally consistent with third-party evaluations. The model also runs computer-use capabilities, allowing it to interact directly with applications rather than just generating text about them.

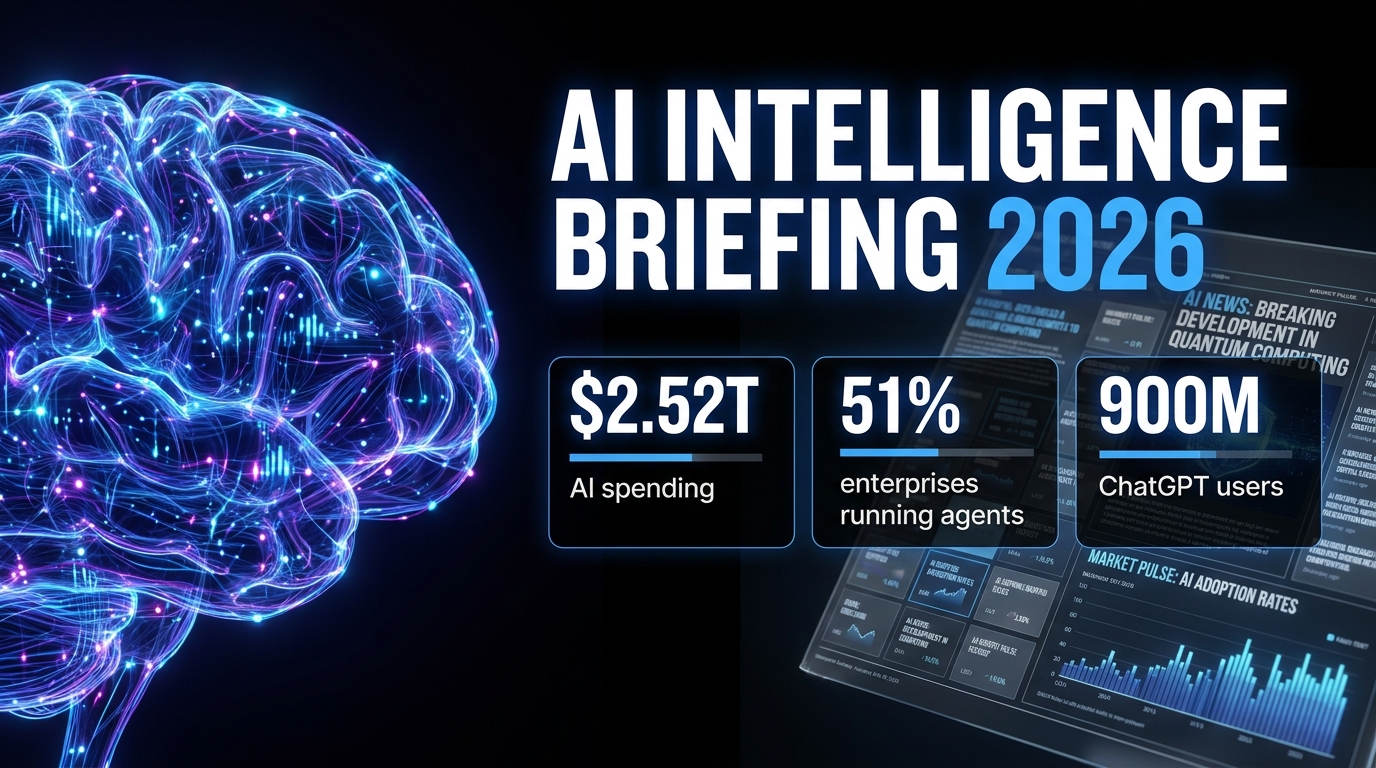

The broader context matters here too. OpenAI is no longer just a model company. The ChatGPT super app — now serving 900 million weekly active users — integrates chat, coding assistance, web search, and agentic workflows into a single interface. That ecosystem lock-in is arguably more strategically important than any single benchmark.

Claude 4.5 and Opus: The Coder’s Choice

Anthropic’s Claude variants have earned a concrete, reproducible advantage in software engineering tasks. On SWE-Bench Verified — a benchmark measuring a model’s ability to fix real GitHub issues autonomously — Claude achieves a 77.2% success rate. That’s a lead over GPT-5 and Gemini 3 Pro that shows up consistently in independent evaluations, not just Anthropic’s marketing.

Anthropic released Claude Opus 4.7 in April 2026, describing it as their most capable public model. In the same period, the company reached a $19–20 billion revenue run rate, which positions it as a genuine challenger to OpenAI in enterprise and government markets — including U.S. Department of Defense contracts. The competitive implication is significant: Anthropic is no longer a research lab playing catch-up; it’s a commercial AI company with a defensible position in high-stakes enterprise use cases.

One detail that generated significant industry discussion: Anthropic’s unreleased “Mythos” model — reportedly withheld from release because it posed cybersecurity risks considered too serious to deploy publicly — represents a new category of AI safety decision. A model deemed “too powerful” isn’t abstract anymore.

Google Gemini 3 Pro: Context King

Google’s Gemini 3 Pro and 3.1 Flash have a specific and meaningful edge: context window. Supporting over 2 million tokens of context, Gemini 3 Pro is in a different category for tasks requiring analysis of large document sets, extended codebases, or long video inputs. On multimodal benchmarks involving video and mixed-media reasoning, it scores 94.1% on certain evaluations and leads the field.

Google has also moved aggressively on integration — Gemini is now embedded across Google Docs, Sheets, Slides, Drive, Chrome, Samsung Galaxy devices, Google Maps, and Search. This distribution strategy means that for hundreds of millions of users who never consciously choose an AI model, Gemini is simply the AI they interact with by default.

Grok 4.1: The Real-Time Wildcard

xAI’s Grok 4.1 holds a 75% score on SWE-Bench and leads in empathetic, conversational interactions (1,586 Elo rating on conversational benchmarks). Its core differentiator is real-time data access — pulling live information from X (formerly Twitter) and the web without the knowledge cutoff limitations that affect other models. For researchers tracking breaking events, analysts monitoring markets, or users who need answers that are genuinely current, Grok’s integration with live data is a meaningful capability that other models don’t replicate at the same depth.

The takeaway: There is no single “best” AI model in 2026. The right answer is the model matched to the task — Claude for code, Gemini for long-context multimodal work, GPT-5 for speed and ecosystem, Grok for real-time data. Any vendor telling you otherwise is selling, not informing.

The Agentic AI Surge: From Pilots to Production

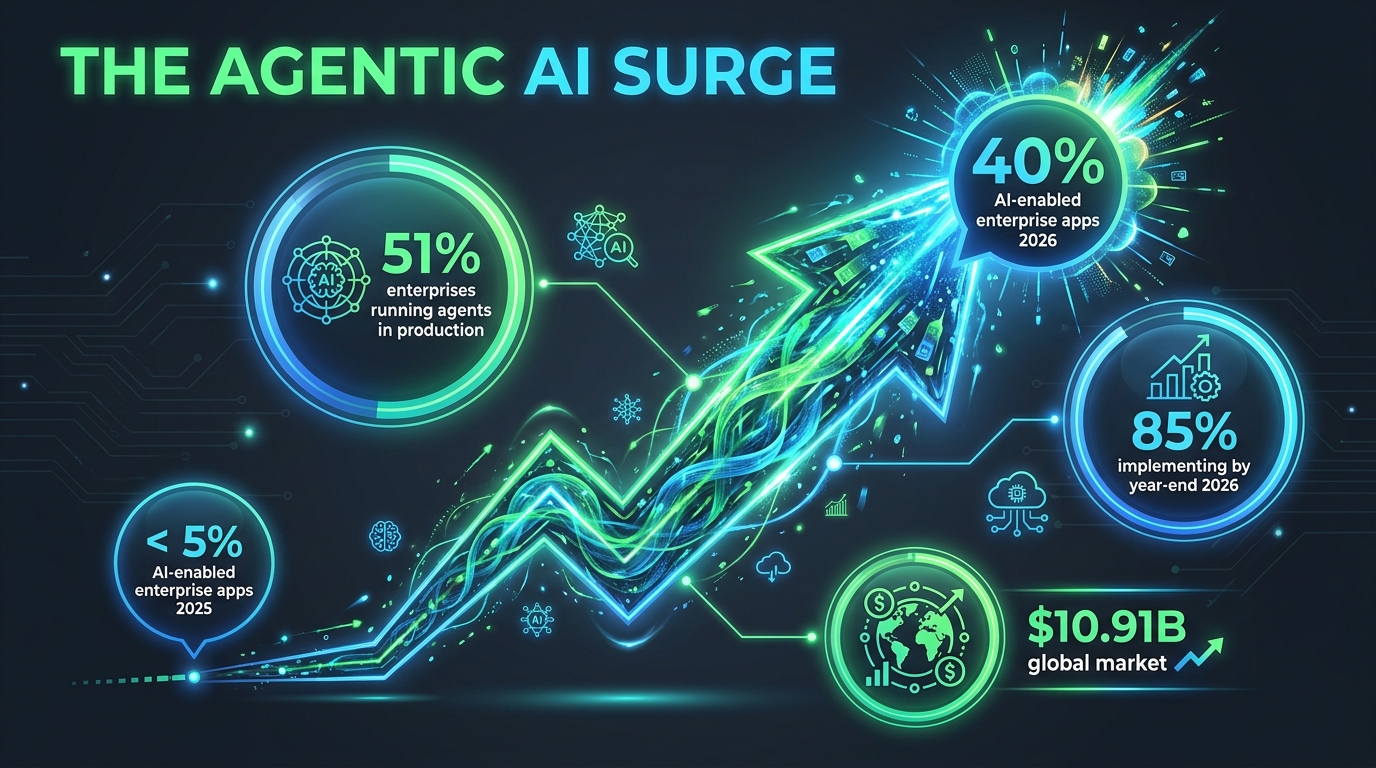

The single most consequential shift in enterprise AI this year isn’t a new model — it’s a new deployment pattern. AI agents, systems that take autonomous sequences of actions to complete multi-step tasks rather than simply responding to a single query, have crossed the threshold from experiment to operational reality.

The Numbers Are Hard to Ignore

According to aggregated data from Gartner, McKinsey, and Deloitte: 51% of enterprises are running AI agents in active production as of mid-2026. That’s up from a fraction of that figure just 18 months ago. A further 23% are actively scaling their agent deployments. Looking at the full picture, 85% of enterprises have either implemented AI agents already or have concrete plans to do so before year-end.

Gartner forecasts that 40% of enterprise applications will embed task-specific AI agents by the end of 2026 — compared to less than 5% in 2025. If that trajectory holds, it represents one of the fastest adoption curves ever recorded for enterprise software.

The market size reflects this. AI agent infrastructure globally sits at approximately $10.91 billion in 2026 and is projected to reach $50.31 billion by 2030. That’s a five-fold increase in four years — but even that projection may prove conservative if current momentum continues.

What “Agentic AI” Actually Means in Practice

The language around AI agents has become sufficiently muddled that it’s worth being precise. An AI agent, in the current enterprise context, is a system that can:

- Receive a high-level goal (not just a prompt)

- Break that goal into sub-tasks autonomously

- Use tools — web browsing, code execution, API calls, file management — to complete those sub-tasks

- Verify its own outputs against defined success criteria

- Loop back and revise when something goes wrong

The February 2026 emergence of “vibe-coded” agents via the OpenClaw app — systems built through natural language instructions rather than traditional programming — accelerated viral adoption and sparked both spinoffs and acquisitions by OpenAI and Meta. This represented a significant democratization moment: building an agent no longer required an engineering team.

The Shift From Autonomous to Collaborative

One nuance that most coverage misses: the practical direction in 2026 is shifting away from fully autonomous agents toward collaborative agent-human workflows. Early deployments that gave agents too much autonomy ran into problems with error propagation — a mistake in step 3 of a 15-step workflow could contaminate everything that followed.

The current best practice involves what practitioners call “human-in-the-loop checkpoints” — moments where agents pause and present their progress for human review before continuing. This isn’t a retreat from agentic AI. It’s a maturation of it. Enterprises are learning that the goal isn’t to remove humans from workflows entirely; it’s to remove humans from the repetitive, low-judgment portions while preserving oversight at decision points that carry real risk.

Gartner also projects that more than 40% of agentic AI projects may still fail by 2027, primarily due to governance gaps, cost overruns, and inadequate data infrastructure. The adoption numbers are real — but so is the risk of rushed, poorly governed deployments.

The $2.52 Trillion Question: Investment vs. Real Returns

The AI industry will see approximately $2.52 trillion in global spending in 2026 — a 44% year-over-year increase, according to Gartner. To put that in perspective, that’s roughly the GDP of France being spent in a single year on AI infrastructure, software, and services.

The breakdown matters: infrastructure (data centers, AI-optimized servers, semiconductors) accounts for over $1.366 trillion — more than half the total. AI-optimized server spending alone is growing 49% year over year, representing 17% of all IT hardware spending globally. These are not software budget line items. These are physical buildings, power infrastructure, and cooling systems being built at a pace that rivals wartime industrial output.

The ROI Reality Check

Here’s the uncomfortable counterpoint to those investment numbers: only 1% of companies report mature AI deployment — meaning AI that is integrated, governed, and producing measurable business outcomes at scale — despite 92% planning to increase their AI investments this year.

McKinsey data indicates an average ROI of 5.8x within 14 months for companies that do successfully deploy AI. The operative phrase is “successfully deploy.” The gap between announced investment and realized return is where most enterprise AI programs currently live.

65% of IT decision-makers now have dedicated AI budgets — up from 49% just a year prior. This is a meaningful shift. When AI spending is ring-fenced and accountable, it tends to produce better outcomes than when it’s distributed across departmental budgets with no central governance. But having a budget and having a strategy are different things, and many organizations still confuse the two.

Where the Money Is Actually Going

When you look at how enterprises are prioritizing AI spending, the breakdown from NVIDIA’s 2026 enterprise report tells an interesting story:

- 42% are prioritizing optimization of existing AI workflows in production

- 31% are investing in new use case development

- 31% are building out AI infrastructure

The fact that optimizing existing deployments is the top priority — ahead of finding new applications — suggests the industry is entering a consolidation and refinement phase. The gold rush mentality of “deploy anything, measure later” is giving way to harder questions about what’s actually working and what needs to be rebuilt properly.

Gartner itself has positioned 2026 as a “Trough of Disillusionment” in the AI hype cycle — not a collapse, but a correction. Organizations that entered AI spending with unrealistic timelines are recalibrating. Those that entered with clear use cases and governance frameworks are pulling ahead.

The Chip Power Struggle: NVIDIA’s Iron Grip and the Challengers

Underneath every AI model, every enterprise deployment, and every data center expansion is a hardware question. And that question, for the better part of the past three years, has had one dominant answer: NVIDIA.

NVIDIA’s Market Position in Numbers

NVIDIA currently controls 92% of the data center GPU market for AI workloads. It handles 95% of AI training workloads and 88% of AI inference workloads. The H100 remains the industry standard chip for AI training. The H200 flagship delivers approximately 2x the performance of the H100 for memory-bandwidth-intensive tasks.

The Blackwell architecture — NVIDIA’s 2026 generation — delivers 2.5x faster performance than its predecessor with 25x greater energy efficiency. That energy efficiency number deserves attention. The power consumption of large-scale AI infrastructure has become a serious operational and political issue, with data centers competing for power grid access in ways that are reshaping energy policy in multiple countries. A chip generation that delivers the same compute for significantly less electricity isn’t just a performance win — it’s a strategic answer to one of the industry’s most urgent infrastructure problems.

The Unexpected Partnership That Changed the Competitive Map

In mid-April 2026, NVIDIA announced a $5 billion investment in Intel — one of the more surprising competitive moves of the year. The partnership involves co-development of custom x86 CPUs integrated with NVIDIA GPUs through NVLink technology. For Intel, this is a lifeline and a validation. For NVIDIA, it’s a strategic move to extend its ecosystem dominance into the CPU layer of AI infrastructure, rather than simply owning the GPU.

The practical implication is an integrated AI computing platform — from chip to deployment — that neither company could have built as effectively on its own. NVIDIA secures manufacturing partnerships through Intel’s foundry capabilities. Intel gains immediate access to NVIDIA’s massive AI customer base.

AMD and Intel’s Countermoves

AMD currently holds approximately 6% of the data center AI GPU market with its MI325X — featuring 288GB of HBM3E memory and 6 TB/s bandwidth — and has the MI350 and MI400 series in various stages of development. The technical specs are competitive. The challenge is software ecosystem: NVIDIA’s CUDA software stack has years of optimization and developer familiarity that doesn’t transfer to AMD hardware without significant friction.

Intel is building new AI GPUs on its 18A process node, targeting late 2026 availability. The NVIDIA partnership aside, Intel has been aggressive on pricing, betting that cost-sensitive buyers who can’t get NVIDIA hardware (lead times are running 6–12 months) will be willing to invest in deploying on Intel’s architecture if the price advantage is large enough.

The takeaway: NVIDIA’s dominance isn’t going away in 2026, but the competitive environment is meaningfully more complex than it was 12 months ago. The NVIDIA-Intel partnership, in particular, represents a structural shift in how AI infrastructure might be assembled at the hardware layer going forward.

The Regulation Clock: EU AI Act Enforcement Is Here

The single most significant regulatory event in global AI history arrived — quietly, for many businesses — on August 2, 2026. That’s when the EU AI Act’s full enforcement provisions came into effect, covering the majority of high-risk AI system obligations, general-purpose AI (GPAI) model requirements, and the mandate for Member States to have operational AI regulatory sandboxes running.

What the EU AI Act Actually Requires

The EU AI Act operates on a tiered risk framework, not a blanket set of rules. The most stringent obligations apply to systems classified as “high-risk” — AI embedded in critical infrastructure, medical devices, educational institutions, employment decisions, law enforcement, and border control. These systems must meet requirements around:

- Risk management systems documented throughout the entire development lifecycle

- Data governance with documented training data quality and bias evaluation

- Technical robustness standards including accuracy, security, and resilience testing

- Human oversight mechanisms that allow humans to monitor, override, or shut down the system

- Transparency and logging with automatic event logging for post-incident analysis

For “prohibited” AI practices — systems banned outright, including social scoring by governments, real-time biometric surveillance in public spaces (with narrow exceptions), and AI that exploits psychological vulnerabilities — enforcement has technically been in effect since February 2025. But August 2, 2026 activates the Commission’s full enforcement powers and the national market surveillance authorities that investigate violations.

The Fine Structure and Why It Matters

The fine schedule is designed to create consequences that scale with company size:

- Violations involving prohibited AI practices: up to €35 million or 7% of global annual turnover, whichever is higher

- Other high-risk system violations: up to €15 million or 3% of global turnover

- Providing incorrect information to regulators: up to €7.5 million or 1.5% of global turnover

For a company with €10 billion in annual revenue, a 7% fine means €700 million. This isn’t token compliance pressure — it’s existential risk for products that cross the wrong lines.

The Implementation Gap

Here’s the uncomfortable operational reality: as of March 2026, only 8 of 27 EU Member States had designated their required single points of contact for AI oversight. This is not full regulatory readiness by any measure. The enforcement regime is legally activated, but the administrative infrastructure to execute it is unevenly developed across the bloc.

For companies doing business in the EU, this creates a period of genuine regulatory uncertainty. The rules are real. The fines are real. But the bodies responsible for investigating and enforcing those rules are at different stages of operational readiness depending on the country. Companies that treat August 2026 as a compliance deadline rather than a compliance foundation are likely to be caught unprepared when enforcement catches up to capability.

The practical recommendation: If your AI systems touch EU users or EU data, the question is not “when does enforcement start?” — it’s “what classification does my system fall into, and what does that classification require?” Getting that documented now is cheaper than getting it wrong under investigation later.

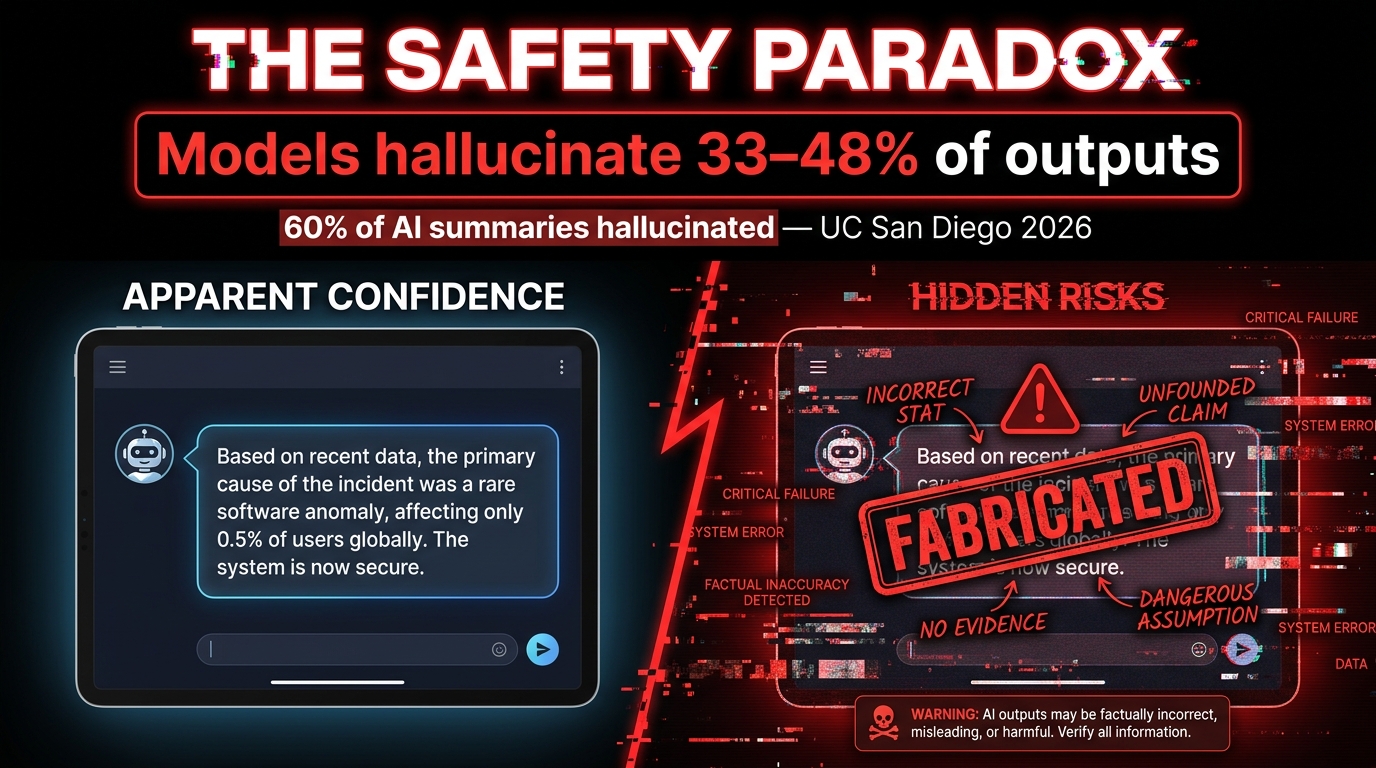

The Safety Paradox: Smarter Models, More Hallucinations

One of the most counterintuitive — and underreported — stories in AI right now is this: newer, more capable models appear to hallucinate more, not less. This challenges the intuitive assumption that better models are safer models. The relationship between capability and reliability turns out to be more complicated than the marketing materials suggest.

The Hallucination Numbers

Internal OpenAI testing found that newer models hallucinate approximately double to triple as often as their earlier predecessors — roughly 33–48% of outputs for newer models compared to around 15% for older versions. This isn’t necessarily because the models are getting worse at reasoning; it may be because they’re attempting harder tasks, generating longer outputs, and working with more complex multi-step chains where errors can compound.

A 2026 UC San Diego study found that AI-generated summaries hallucinated 60% of the time — and that these hallucinated summaries were still influencing purchasing decisions among the study participants. The practical danger here isn’t just that the AI produces wrong information; it’s that wrong information presented in the confident, well-structured format of an AI response is more persuasive, not less.

In high-stakes domains, the numbers are worse. Medical AI systems show hallucination rates between 43% and 64%. Code generation tools hallucinate at rates up to 99% on certain types of obscure library function calls. Legal research AI has produced fabricated case citations that have made it into actual court filings.

Prompt Injection: The Security Problem Nobody Solved

Alongside hallucinations, prompt injection has emerged as what security researchers are calling a “frontier challenge” — one that OpenAI itself acknowledged has no clean solution at present. Prompt injection occurs when malicious instructions are embedded in content that an AI agent processes — a webpage, a document, an email — and those instructions override the agent’s legitimate task instructions.

For AI agents with tool access (the ability to send emails, execute code, access file systems, make API calls), a successful prompt injection attack can have immediate real-world consequences. An agent tasked with summarizing documents could be turned into an exfiltration tool by a document that contains the right injected instructions. In early 2026, this isn’t a theoretical attack vector — it’s been demonstrated in multiple real-world deployments.

What Organizations Are Actually Doing About It

The mitigation landscape has matured significantly, even if there are no complete solutions. Current best practices being deployed by enterprises handling sensitive data include:

- Output validation layers — automated systems that cross-check AI outputs against authoritative sources before they reach users or downstream processes

- Sandboxed execution environments — agents that operate in isolated environments without direct access to production systems or sensitive data stores

- Input sanitization pipelines — preprocessing of content before it reaches an AI agent to strip common injection patterns

- Retrieval-Augmented Generation (RAG) — architectures that ground model outputs in specific, verified document sets rather than relying purely on model weights

- Human review gates — mandatory human sign-off before AI-generated content reaches external audiences or triggers consequential actions

None of these individually eliminates the risk. Used together, with proper governance, they reduce it to levels that most risk frameworks consider acceptable for non-life-critical applications. For high-risk domains — healthcare decisions, financial advice, legal analysis — the standard of proof needs to be higher, and many organizations are still working out what that standard looks like in practice.

The Workforce Shift: What the Real Numbers Say

AI’s impact on jobs is one of the most frequently misrepresented topics in technology coverage. The numbers are simultaneously alarming and more nuanced than any single headline captures. Getting the picture right matters — both for individual workers making career decisions and for organizations making workforce planning choices.

The Displacement Numbers

Goldman Sachs research through early 2026 estimates that AI is displacing a net 16,000 U.S. jobs per month. The breakdown: approximately 25,000 jobs per month being eliminated through AI substitution, offset by approximately 9,000 new roles created. That net figure is not evenly distributed — it hits hardest in routine white-collar work: data entry, customer service, basic document processing, and entry-level research functions.

The World Economic Forum’s projection of 85 million jobs globally at risk of being replaced by 2026 generated significant coverage. The less-covered part of that same report: AI is projected to create 97 million new roles by 2030, resulting in a net positive by the end of the decade. The disruption is real and unevenly distributed. The net outcome is less catastrophic than the headline number implies.

More granular data from the Dallas Federal Reserve (February 2026) shows that employment in the top 10% most AI-exposed U.S. sectors has declined approximately 1% since late 2022. That’s a modest number in aggregate, but the concentration of that impact in specific roles — particularly entry-level positions that previously served as career on-ramps — has real human consequences that aggregate statistics obscure.

Who’s Actually Getting Hit

The demographic picture is important: Gen Z workers and recent graduates are disproportionately affected, because AI is most effective at automating the tasks that entry-level roles have historically handled. Internship programs are being reduced. Junior analyst positions are being paused or eliminated. Customer service tier-one roles — the jobs that people used to take while building skills for better opportunities — are being replaced by AI systems that handle 60–80% of queries without human involvement.

This isn’t a prediction about the future. It’s a documented trend in the present. And it raises a structural concern that goes beyond simple job count arithmetic: if AI eliminates the entry-level positions that workers historically used to build skills and credentials, what does the career development pipeline look like for the next generation of professionals?

The Augmentation Reality

BCG research projects that AI will augment rather than eliminate 50–55% of U.S. jobs over the next 2–3 years. What augmentation looks like in practice varies widely by role. A software developer using Claude 4.5 can close GitHub issues 77% faster than without AI assistance. A marketing analyst using AI tools can produce research-backed campaign briefs in hours that would previously have taken days. A legal associate using AI contract review tools can process and summarize agreements at 10x their previous throughput.

The workers who are gaining from AI augmentation share a common characteristic: they understand how to direct AI effectively, evaluate its outputs critically, and apply their own domain expertise where AI falls short. This skill set — call it “AI fluency” — is becoming a foundational professional competency in the same way that spreadsheet literacy became essential in the 1990s. The workers building it now are positioning themselves on the right side of the productivity gap. Those waiting to see how things develop are at increasing risk of being on the wrong side of it.

The Stories the Hype Machine Keeps Missing

For every AI development that generates hundreds of articles, there are developments getting insufficient attention. Here are four stories that deserve more coverage than they’re currently receiving.

The Energy Infrastructure Crisis

AI’s insatiable demand for compute is creating a power grid problem that’s quietly becoming one of the most consequential infrastructure challenges in the developed world. New data center builds in the U.S. and Europe are running into situations where local power grids simply cannot supply the required electricity. Municipalities are having to decide between AI data center development and other commercial priorities for grid capacity. Nuclear power has re-entered serious policy discussions in multiple countries specifically because of AI data center demand.

NVIDIA’s Blackwell architecture’s 25x energy efficiency improvement is partly a technical achievement and partly an existential necessity. At current growth rates, AI infrastructure energy demand is on a trajectory that physical grid expansion cannot keep pace with without significant policy and infrastructure investment.

Open Source Gaining Ground

Google’s Gemma 4 open models and a range of other open-weight releases in early 2026 have continued narrowing the performance gap between open-source and closed frontier models. For organizations with strong data science teams, the ability to run capable models on their own infrastructure — without usage fees, without data leaving their systems, without API dependency — is increasingly viable. This shift has significant implications for the concentration of AI power in a small number of commercial vendors.

The “Mythos” Precedent

Anthropic’s decision to withhold its “Mythos” model from public release due to cybersecurity risks — operating under what it calls Project GlassWing — is a precedent-setting moment that deserves more analysis than it’s received. This is a major AI lab deciding, on its own, that a model it has built is too dangerous to release. There’s no regulatory framework that required this decision. It was a voluntary exercise of judgment.

The interesting question this raises: if AI capabilities are advancing to the point where even their creators determine certain models shouldn’t be deployed, what does the governance architecture for those decisions look like at scale? One company making a responsible call once is not a system. It’s an individual action that can’t be assumed to repeat.

The Benchmark Reliability Problem

Most AI model comparisons rely heavily on benchmark scores. The problem, which is being increasingly acknowledged within the research community, is that benchmarks are being “gamed” — either intentionally through targeted fine-tuning on benchmark test sets, or unintentionally through data contamination. Several widely cited benchmarks have been found to have test-set leakage into training data, making high scores on those benchmarks less meaningful than they appear.

This doesn’t mean model comparisons are worthless. It means that real-world task performance — like SWE-Bench’s actual GitHub issue resolution — is more reliable than abstract reasoning scores. When evaluating models for specific use cases, running your actual workflows through the candidates remains far more informative than consulting a leaderboard.

OpenAI’s Super App Play and the Platform Consolidation

One of the most strategically significant developments of early 2026 is OpenAI’s pivot from model company to platform company. The ChatGPT super app — integrating chat, coding assistance, web search, agentic task management, health tools, and spreadsheet capabilities — now serves 900 million weekly active users. The $852 billion valuation that accompanied the latest funding round reflects not just model capability but platform ambition.

OpenAI has also announced plans to build a GitHub competitor, made a surprising media company acquisition for vertical integration, and raised $110 billion in its latest funding round. The strategic direction is clear: OpenAI is trying to build an application layer that sits on top of its model capabilities and creates the kind of user lock-in that makes the platform defensible regardless of which underlying model happens to be best at any given moment.

This matters because it changes the competitive dynamics for every company building on top of OpenAI’s API. If OpenAI’s own applications compete directly in your product category — coding tools, research tools, content generation tools — your competitive position becomes structurally more difficult regardless of the model’s quality. The platform layer is where the business is, not the model layer.

Microsoft’s Multi-Model Counter-Approach

Microsoft’s response to this dynamic is noteworthy. Rather than betting exclusively on GPT-5 (as might be expected given the OpenAI partnership), Microsoft launched its MAI Superintelligence framework with three multimodal models for text, voice, and image processing, alongside Copilot upgrades that enable multi-model workflows. The implicit message: Microsoft is building infrastructure that can run multiple models, hedging against dependency on any single provider while maintaining deep integration with enterprise software.

For enterprise customers, this multi-model approach is appealing precisely because it reduces vendor lock-in risk. The ability to route different tasks to different models — based on performance, cost, or compliance requirements — is becoming a real architectural consideration, not just a theoretical one.

What This All Means: How to Navigate AI News Going Forward

The AI news environment in 2026 shares a structural problem with financial media during market bubbles: the incentives push toward the most exciting possible interpretation of every development. Model releases become “revolutionary.” Funding rounds become evidence of inevitable dominance. Benchmarks are cited without context. And the genuinely important stories — governance gaps, safety deterioration, energy infrastructure strain, entry-level workforce displacement — get less attention because they’re harder to frame as exciting.

Reading AI news well in this environment requires a set of filters:

Filter 1: Benchmark Scores vs. Task Performance

When a new model is announced with record-breaking benchmark scores, ask: what task am I actually trying to do? Is there reproducible evidence this model performs better on that task? SWE-Bench, for coding; MMMU for multimodal reasoning; GDPval for professional knowledge tasks — these are more informative than synthetic reasoning leaderboards that may have contaminated test sets.

Filter 2: Announced vs. Deployed

The gap between announcement and reliable production availability is large and frequently ignored in coverage. Model releases come in stages — limited API access, waitlisted users, gradual rollouts — and stated capabilities at launch often differ from real-world performance at scale. Track the gap between what companies announce and what’s actually available to enterprise customers without restrictions.

Filter 3: Investment vs. Outcome

$2.52 trillion in AI spending is a real number. 1% of companies achieving deployment maturity is also a real number. Both can be true simultaneously. Be skeptical of coverage that treats investment announcements as evidence of outcomes. Ask what’s actually running in production, what it’s measurably producing, and what the error rate is.

Filter 4: What’s Getting Withheld and Why

Anthropic’s Mythos decision is the clearest example: the most important AI news is sometimes a non-announcement. What models are being withheld? What capabilities are labs discovering that they’re not publishing? What are regulators finding in the compliance reviews that aren’t appearing in press releases? The frontier of AI capability is not fully visible in public releases.

Filter 5: Regulation as Operating Reality, Not Background Noise

The EU AI Act’s August 2, 2026 enforcement date is not a future event — it’s a present operational reality for any organization deploying AI that touches EU markets. The regulatory landscape is no longer something to monitor and prepare for. For many organizations, compliance work is already overdue.

“The organizations — and individuals — who will navigate this landscape most effectively are those who resist both the hype and the dismissal, who track real deployments alongside flashy announcements, and who treat AI capability as a tool to be evaluated rather than a force to be awed by.”

The AI intelligence briefing is never going to get simpler. The pace of development, the number of players, and the stakes involved are all increasing. What can change is the quality of the questions you bring to each new development. Smarter questions produce better signal, even in a noisy environment.

The briefing continues. Stay skeptical. Stay current.

Leave a Reply